Every post, comment, or Wiki edit I authored is hereby licensed under a Creative Commons Attribution 4.0 International License.

Pablo

This is what ACE’s “overview” lists as Anima’s weaknesses:

We think Anima International’s leadership has a limited understanding of racial equity and that this has impacted some of the spaces they contribute to as an international animal advocacy group—such as coalitions, conferences, and online forums. We also think including non-staff members in Anima International’s governing board would increase the board’s capacity to oversee the organization from a more independent and objective perspective.

Their “comprehensive review” doesn’t mention the firing of the CEO as a consideration behind their low rating. The primary reason for their negative evaluation seems to be captured in the following excerpt:

According to our culture survey, Anima International is diverse along the lines of gender identity and sexual identity, however, they are not diverse on racial identity. This is not surprising, as most of the countries in which their member organizations operate are very racially homogenous; in practice, we think it would be particularly difficult for them to successfully attract and hire advocates who are Black, Indigenous, or of the global majority (BIPGM) in those countries. Our impression, however, is that the racial homogeneity at the organization has resulted in a limited understanding of racial issues, which has presented itself in some of the public and private communications we’ve witnessed from Anima International’s staff in the last year. In particular, we think leadership staff publicly engaging in conversations about the relevance of racial equity to the animal advocacy movement may have had a negative impact on the progress of racial equity in the movement. While we think this issue is less salient in the more racially homogenous countries in which they operate, for their work in more racially diverse countries, we think it is particularly important that they prioritize developing an understanding of racial equity. Additionally, for any organization working on an international scale, there are spaces that all staff may encounter that are more racially diverse—such as coalitions, conferences, and online forums—in which it is again important to have an understanding of racial equity.
This is particularly important so as to ensure the safety of BIPGM and to not impede—and to eventually contribute to—work on racial equity in the broader animal advocacy movement, which we believe will be crucial to its long-term success. Note: Our concern here is specifically about their understanding of racial issues and not issues relating to ethnicity, of which they report frequently encountering in their work in Eastern Europe and Russia—we have no reason to doubt their handling of those situations.

In our culture survey, some respondents mentioned that leadership could offer training to be more inclusive or to better support staff who are members of marginalized groups.

Anima International supports R/DEI through their human resources activities. Anima International has a workplace code of ethics/conduct and a written statement that they do not tolerate discrimination on the basis of race, gender, sexual orientation, disability status, or other characteristics. Anima International has a written procedure for filing complaints, as well as explicit protocols for addressing concerns or allegations of harassment or discrimination. In our culture survey, 96% of respondents agreed that Anima International protects staff, interns, and volunteers from harassment and discrimination in the workplace, and 98% agreed that they have someone to go to in case of harassment or discrimination. However, our culture survey suggests that Anima International’s staff experienced or witnessed some harassment or discrimination in the workplace during the past year, more than the average charity under review. Some respondents mentioned that they witnessed troubling behavior from the former CEO but that they were satisfied with leadership’s handling of the situation, i.e., suspending and removing the former CEO. Because staff feel overall protected from harassment and discrimination, and Anima International seems to have in place systems to prevent and handle harassment and discrimination in the workplace, we are not highly concerned about this finding.

Anima International does not offer regular trainings on topics such as harassment and discrimination in the workplace. In our culture survey, 75% of staff agree that they and their colleagues have been sufficiently trained in matters of R/DEI. Respondents mentioned that training has not taken place, or they are not needed. We believe that the opportunities for the team to learn about R/DEI at Anima International should be increased.

Overall, we believe that Anima International is less diverse, equitable, and inclusive than the average charity we evaluated this year.

---

Although it isn’t relevant to this particular thread, I’d like to urge all participants to consider Will Bradshaw’s comment and try to “hav[e] this discussion in a more productive and conciliatory way, which has less of a chance of ending in an acrimonious split”, insofar as this is compatible with maintaining our standards of intellectual rigor.

Many people are tired of being constantly exposed to posts that trigger strong emotional reactions but do not help us make intellectual progress on how to solve the world’s most pressing problems. I have personally decided to visit the Forum increasingly less frequently to avoid exposing myself to such posts, and know several other EAs for whom this is also the case. I think you should consider the hypothesis that the phenomenon I’m describing, or something like it, motivated the Forum team’s decision, rather than the sinister motive of “attemp[ting] to sweep a serious issue under the rug”.

Retrospective grant evaluations

Research That Can Help Us Improve

EA funders allocate over a hundred million dollars per year to longtermist causes, but a very small fraction of this money is spent evaluating past grantmaking decisions. We are excited to fund efforts to conduct retrospective evaluations to examine which of these decisions have stood the test of time. We hope that these evaluations will help us better score a grantmaker’s track record and generally make grantmaking more meritocratic and, in turn, more effective. We are interested in funding evaluations not just of our own grantmaking decisions (including decisions by regrantors in our regranting program), but also of decisions made by other grantmaking organizations in the longtermist EA community.

It seems that half of these examples are from 15+ years ago, from a period for which Eliezer has explicitly disavowed his opinions

Just to note that the boldfaced part has no relevance in this context. The post is not attributing these views to present-day Yudkowsky. Rather, it is arguing that Yudkowsky’s track record is less flattering than some people appear to believe. You can disavow an opinion that you once held, but this disavowal doesn’t erase a bad prediction from your track record.

Like him, I only know about this particular essay from Torres, so I will limit my comments to that.

I personally do not think it is appropriate to include an essay in a syllabus or engage with it in a forum post when (1) this essay characterizes the views it argues against using terms like ‘white supremacy’ and in a way that suggests (without explicitly asserting it, to retain plausible deniability) that their proponents, including eminently sensible and reasonable people such as Nick Beckstead and others, are white supremacists, and when (2) its author has shown repeatedly in previous publications, social media posts and other behavior that he is not writing in good faith and that he is unwilling to engage in honest discussion.

(To be clear: I think the syllabus is otherwise great, and kudos for creating it!)

EDIT: See Seán’s comment for further elaboration on points (1) and (2) above.

This was a very interesting post. Thank you for writing it.

I think it’s worth emphasizing that Rotblat’s decision to leave the Manhattan Project was based on information available to all other scientists in Los Alamos. As he recounts in 1985:

the growing evidence that the war in Europe would be over before the bomb project was completed, made my participation in it pointless. If it took the Americans such a long time, then my fear of the Germans being first was groundless.

When it became evident, toward the end of 1944, that the Germans had abandoned their bomb project, the whole purpose of my being in Los Alamos ceased to be, and I asked for permission to leave and return to Britain.

That so many scientists who agreed to become involved in the development of the atomic bomb cited the need to do so before the Germans did, and yet so few chose to terminate their involvement when it had become reasonably clear that the Germans would not develop the bomb provides an additional, separate cautionary tale besides the one your post focuses on. Misperceiving a technological race can, as you note, make people more likely to embark on ambitious projects aimed at accelerating the development of dangerous technology. But a second risk is that, once people have embarked on these projects and have become heavily invested in them, they will be much less likely to abandon them even after sufficient evidence against the existence of a technological race becomes available.

Great post, thank you for compiling this list, and especially for the pointers for further reading.

In addition to Tobias’s proposed additions, which I endorse, I’d like to suggest protecting effective altruism as a very high priority problem area. Especially in the current political climate, but also in light of base rates from related movements as well as other considerations, I think there’s a serious risk (perhaps 15%) that EA will either cease to exist or lose most of its value within the next decade. Reducing such risks is not only obviously important, but also surprisingly neglected. To my knowledge, this issue has only been the primary focus of an EA Forum post by Rebecca Baron, a Leaders’ Forum talk by Roxanne Heston, an unpublished document by Kerry Vaughan, and an essay by Leverage Research (no longer online). (Risks to EA are also sometimes discussed tangentially in writings about movement building, but not as a primary focus.)

Have you considered doing a joint standup comedy show with Nick Bostrom?

As a result of corporate due diligence, as well as the latest news reports regarding mishandled customer funds and alleged US agency investigations, we have decided that we will not pursue the potential acquisition of http://FTX.com.

Some concrete problems I see with your choice:

- The name of an organization should ideally consist of two or three main words, perhaps four if there are strong enough reasons. Yours has six.

- The acronym formed by the name should ideally be pronounceable and aesthetically pleasing. I’m not sure CEEALAR is pronounceable. I don’t think it’s pleasing.

- The rules for generating the acronym should ideally be consistent. Either all articles and prepositions are included (e.g. CFAR) or none are (e.g. CEA). In ‘Centre for Enabling EA Learning and Research’, CEEALAR includes ‘and’ but excludes ‘for’.

- [Note: Greg tells me that the name needs to be intelligible to the Charity Commission, so I retract this bullet point] The name need not provide a full description of the nature of the organization, or even be intelligible to newcomers. Those are desiderata, but may be trumped by other considerations. Consider, e.g., 80,000 Hours: no one would ever guess what they do just from the name alone, but it is still adequate, and much better than, say, Career and Coaching Services for Young Effective Altruists (CACSYEA).

I’ll try to think of some concrete suggestions later, but all of Jonas’s proposals look superior to CEEALAR, in my opinion. If you don’t like the word ‘Hotel’ because of its for-profit connotations, how about replacing it with ‘House’?

You may also want to consider creating a poll on an EA Facebook group, as other EA orgs that went through a rebranding process have done in the past (e.g. Stefan Torges created one such poll a couple of months ago asking for alternatives to ‘Foundational Research Institute’).

I hope this doesn’t come across as overly critical. Congratulations on putting in all the time and effort required to get the (former) EA Hotel registered as a proper charity!

EDIT: See also Ryan’s comment.

I agree with your assessment. It is interesting to note that Singer’s comments are in response to Holden, who used to hold a similar view but no longer does (I believe).

The other part I found surprising was Singer’s comparison of longtermism with past harmful ideologies. At least in principle, I do think that, when evaluating moral views, we should take into consideration not only the contents of those views but also the consequences of publicizing them. But:

These two types of evaluation should be clearly distinguished and done separately, both for conceptual clarity and because they may require different responses. If the problem with a view is not that it is false but that it is dangerous, the appropriate response is probably not to reject the view, but to instead be strategic about how one discusses it publicly (e.g. give preference to less public contexts, frame the discussion in ways that reduce the view’s dangers, etc.)

As Richard Chappell pointed out recently, if one is going to consider the consequences of publicizing a view when evaluating it, one should also consider the consequences of publicizing objections to that view. And it seems like objections of the form “we should reject X because publicizing X will have bad consequences” have often had bad consequences historically.

The moral evaluation of the consequences expected to result from public discussion of a view should not beg the question against the view under consideration! Longtermists believe that people in the future, no matter how removed from us, are moral patients whom we should help. So in evaluating longtermism, one cannot ignore that, from a longtermist perspective, publicly demonizing this view—by comparing it to the Third Reich, Soviet communism, or white supremacy—will likely have very bad consequences (e.g. by making society less willing to help far-future people). (Note that this is very different from the usual arguments for utilitarianism being self-effacing: those arguments purport to establish that publicizing utilitarianism has bad consequences, as evaluated by utilitarianism itself. Here, by contrast, a non-longtermist moral standard is assumed when evaluating the consequences of publicizing longtermism.)

Picking reference classes is tricky. Perhaps it’s plausible to put longtermism in the reference class of “utopian ideology with considerable abuse potential”. But it also seems plausible to put longtermism in the reference class of “enlightened worldview that seeks to expand the circle of moral concern” (cf. Holden’s “Radical empathy”). In considering the consequences of publicizing longtermism, it seems objectionable to highlight one reference class, which suggests bad consequences, and ignore the other reference class, which suggests good consequences.

I’d expect people to react very differently to memes, so I’m very open to being persuaded that having memes on the Forum is in fact a good idea. But my independent impression is that memes to some degree trivialize our message, and that insofar as there’s a place for them in the larger EA ecosystem, it is not on the Forum, which I see primarily as a place for conducting high-quality, high-fidelity intellectual discussion.

There is now a dedicated FHI website with lists of selected publications and resources about the institute. (Thanks to @Stefan_Schubert for bringing this to my attention.)

Retaliation is bad.

People seem to be using “retaliation” in two different senses: (1) punishing someone merely in response to their having previously acted against the retaliator’s interests, and (2) defecting against someone who has previously defected in a social interaction analogous to a prisoner’s dilemma, or in a social context in which there is a reasonable expectation of reciprocity. I agree that retaliation is bad in the first sense, but Will appears to be using ‘retaliation’ in the second sense, and I do not agree that retaliation is bad in this sense.

(I haven’t followed this thread closely and I do not have object-level views about the Nonlinear dispute. Sharing just in case it helps clear unnecessary misunderstandings.)

I second the suggestion to put at least a bit of thinking into coming up with more memorable, pronounceable and authoritative alternatives, if it’s not too late already. Really, this is an acronym that will last for years or decades, will be written and uttered thousands of times, and will often be the very first thing someone will see or hear when exposed to the organization.

Talking with some physicist friends helped me debunk the many worlds thing Yud has going.

Yudkowsky may be criticized for being overconfident in the many-worlds interpretation, but to feel that you have “debunked” it after talking to some physicist friends shows excessive confidence in the opposite direction. Have you considered how your views about this question would have changed if e.g. David Wallace had been among the physicists you talked to?

Also, my sense is that “Yud” was a nickname popularized by members of the SneerClub subreddit (one of the most intellectually dishonest communities I have ever encountered). Given its origin, using that nickname seems disrespectful toward Yudkowsky.

See also Anders’s more personal reflections:

I have reached the age when I have seen a few lifecycles of organizations and movements I have followed. One lesson is that they don’t last: even successful movements have their moment and then become something else, sclerotize into something stable but useless, or peter out. This is fine. Not in some fatalistic “death is natural” sense, but in the sense that social organizations are dynamic, ideas evolve, and there is an ecological succession of things. 1990s transhumanism begat rationalism that begat effective altruism, and to a large degree the later movements suck up many people who would otherwise have been recruited by the transhumanists.

FHI did close before its time, but it is nice to know it did not become something pointlessly self-perpetuating. As we noted when summing up, 19 years is not bad for a 3-year project. Indeed, a friend remarked that maybe all organisations should have a 20-year time limit. After that, they need to be closed down and recreated if they are still useful, shedding some of the accumulated dross.

The ecological succession of organizations and movements is not all driven by good forces. A fresh structure driven by interested and motivated people is often gradually invaded by poseurs, parasites and imitators, gradually pushing away the original people (or worse, they mutate into curators, gatekeepers and administrators). Many ideas develop, flourish, become explored and then forgotten once a hype peak is passed – even if they still have merit. People burn out, lose interest, form families and have to change priorities, or the surrounding context make the movement change in nature. Dwindling activist movements may suffer “core collapse” as moderate members drift off while the hard core get more radical and pursue ever more extreme activism in order to impress each other rather than the world outside.

FHI did not do any of that. If we had a memetic failure, it was likely more along the lines of developing a shared model of the world and future that may have been in need for more constant challenge. That is one reason why I hope there will be more organizations like FHI but not thinking alike – places like CSER, Mimir, FLI, SERI, GCRI, and many others. We need the focus of a strongly connected organization to build thoughts and systems of substance but separate organizations to get mutual critique and diversity in approaches. Plus, hopefully, metapopulation resilience against individual organizational failures.

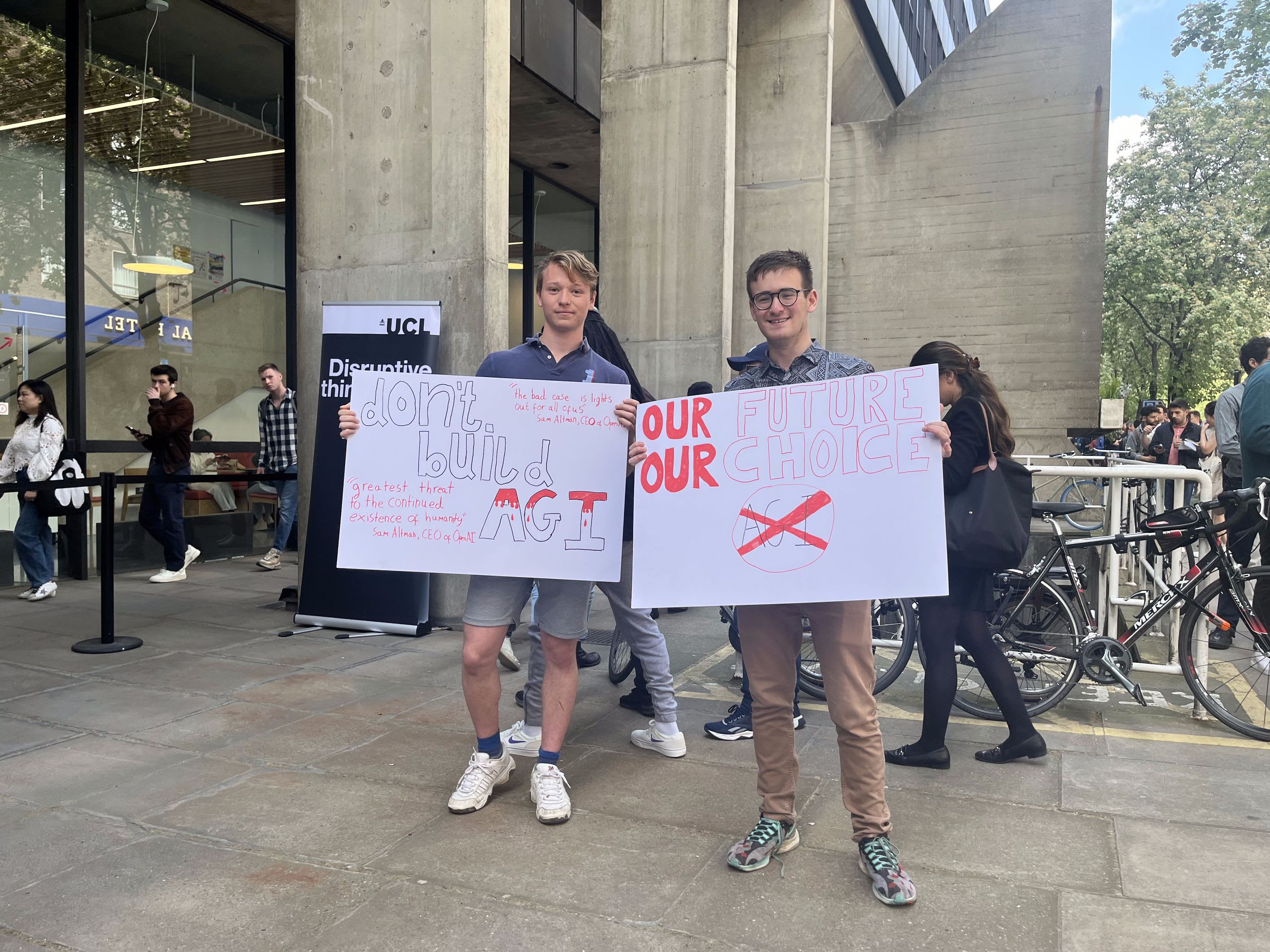

Protesting at leading AI labs may be significantly more effective than most protests, even ignoring the object-level arguments for the importance of AI safety as a cause area. The impact per protester is likely unusually big, since early protests involve only a handful of people and impact probably scales sublinearly with size. And very early protests are unprecedented and hence more likely (for their size) to attract attention, shape future protests, and have other effects that boost their impact.

FWIW, I think that the qualification was very appropriate and I didn’t see the author as intending to start a “bravery debate”. Instead, the purpose appears to have been to emphasize that the concerns were raised in good faith and with limited information. Clarifications of this sort seem very relevant and useful, and quite unlike the phenomenon described in Scott’s post.

It’s probably worth noting that Holden has been pretty open about this incident. Indeed, in a talk at a Leaders Forum around 2017, he mentioned it precisely as an example of “ends justify the means”-type reasoning.