Astrobiologist @ Imperial College London, Mars2020

Partnerships Coordinator @ SGAC Space Safety and Sustainability Project Group

Interested in: Space Governance, Great Power Conflict, Existential Risk, Cosmic threats, Academia, International policy

Thank you for these updates! They are super useful for me as someone who is just starting to get more involved with EA. The updates are really helping me get a good overview of what EA’s priorities are and what measurable differences the movement is making. I come out of the post with a list of things to look further into :D

I’m thinking about organising a seminar series on space and existential risk, mostly because it’s something I would really like to see. The series would cover a wide range of topics:

Asteroid Impacts

Building International Collaborations

Monitoring Nuclear Weapons Testing

Monitoring Climate Change Impacts

Planetary Protection from Mars Sample Return

Space Colonisation

Cosmic Threats (supernovae, gamma-ray bursts, solar flares)

The Overview Effect

Astrobiology and Longtermism

I think this would be an online webinar series. Would this be something people would be interested in?

Hello! I’m just here to introduce myself as I think I’m a bit of an unusual effective altruist. I am an astrobiologist and my research focuses on the search for life on Mars. Before discovering effective altruism I was always very interested in the long-term future of life in the context of looming existential risks. I thought the best thing to do was to send life to other planets so that it would survive in a worst-case scenario. But a master’s degree later, I got into effective altruism and decided that this cause was a 10⁄10 on importance, 10⁄10 on neglectedness, and 1⁄10 on tractability.

So my focus has changed more recently as I progress through my PhD. I’m interested in the moral implications of astrobiology as it plays a really important role at the core of longtermism. There are a few moral implications that depend on astrobiology research:

Research conclusion: The universe and our Solar System are full of habitable celestial bodies. Moral implication: The number of potential future humans is huge in the long term future, so we ought to protect these people through research into existential risks.

Research conclusion: The universe seems to be empty of life. Moral Implication: Life on Earth is extremely valuable, so ensuring its survival should be the highest moral priority.

Research conclusion: Planets like Earth are extremely rare and far away. Moral implication: “there is no planet B”, so we ought to protect our Earth for the next ~1000 years as there is no backup plan.

I plan to investigate these ideas further and see where they lead me. I think that as I progress in my career, I can use philosophy and outreach to help people understand the long-term perspective and inspire action towards tackling existential risks. Great to meet you all!

Yeah basically that was my reasoning. I’m super sceptical about this risk. The virus may destroy one ecosystem in an extreme environment or be a very effective pathogen in specific circumstances but would be unlikely to be a pervasive threat.

This theoretical microbe would have invested so many stat points in adaptations like extreme UV radiation resistance, resistance to toxins in Mars soil like perchlorates and H2O2, and totally unseen levels of desiccation, salinity, and ionic strength resistance that would be useless on Earth. And it would have to power all of these useless abilities on a food source that it is likely not suited to metabolising, and definitely not under the conditions it is used to. I just can’t imagine how it would be a huge threat around the world. But in a worst-case scenario, it could kill a lot of people or damage an ecosystem we rely on heavily with massive global implications, so 7⁄10.

I have written this post introducing space and existential risk and this post on cosmic threats, and I’ve come up with some ideas for stuff I could do that might be impactful. So, inspired by this post, I am sharing a list of ideas for impactful projects I could work on in the area of space and existential risk. If anyone working on anything related to impact evaluation, policy, or existential risk feels like ranking these in order of what sounds the most promising, please do that in the comments. It would be super useful! Thank you! :)

(a) Policy report on the role of the space community in tackling existential risk: Put together a team of people working in different areas related to space and existential risk (cosmic threats, international collaborations, nuclear weapons monitoring, etc.). Conduct research and come together to write a policy report with recommendations for international space organisations to help tackle existential risk more effectively.

(b) Anthology of articles on space and existential risk: Ask researchers to write articles about topics related to space and existential risk and put them all together into an anthology. Publish it somewhere.

(c) Webinar series on space and existential risk: Build a community of people in the space sector working on areas related to existential risk by organising a series of webinars. Each webinar will be available virtually.

(d) Series of EA forum posts on space and existential risk: This should help guide people to an impactful career in the space sector, build a community in EA, and better integrate space into the EA community.

(e) Policy adaptation exercise SMPAG > AI safety: Use a mechanism mapping policy adaptation exercise to build on the success of the space sector in tackling asteroid impact risks (through the SMPAG) to figure out how organisations working on AI safety can be more effective.

(f) White paper on Russia and international space organisations: Russia’s involvement in international space missions and organisations following its invasion of Ukraine could be a good case study for building robust international organisations. E.g. Russia was ousted from ESA, is still actively participating on the International Space Station, and is still a member of SMPAG but not participating. Figuring out why Russia did or didn’t stay involved with each organisation could be useful.

(g) Organise an in-person event on impactful careers in the space sector: This would be aimed at effective altruists and would help gauge interest and provide value.

Thank you, and very good question! The short answer is not really. I think that building momentum on existential risk reduction from the space sector could be tractable. One way to do this would be to found organisations that tackle some of the cosmic threats with unknown severity and probability. But to be honest I’m not sure if that’s necessary; maybe the LTFF, governments, or other organisations should just fund some more research into these threats.

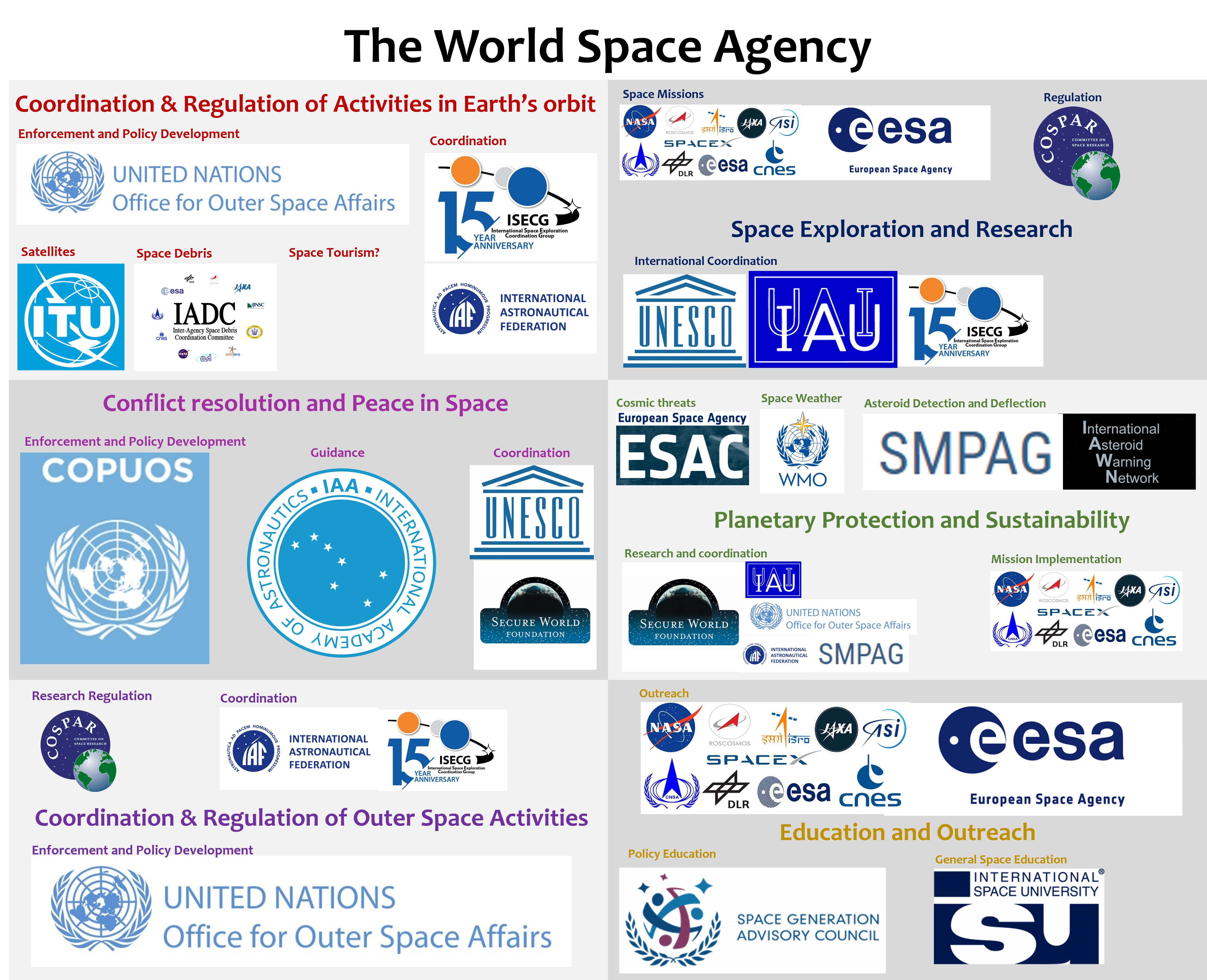

I think the main area in which EAs can have an impact is by developing existing organisations, with the aim of increasing their power to enforce policy, developing their interconnectedness, and increasing their prevalence. By doing this, we may be able to increase great power collaboration, build up institutions that will naturally evolve into space governance structures for the long term, while helping to tackle natural existential risks directly.

I’m making a post about this strategy at the moment, so happy to elaborate, but I don’t want to write the whole post in one comment! Here’s a diagram from the post draft to show how well covered most areas in space are:

Plugging this into EAometer....

We can propose a project to “direct charitable donations to popular but low-impact causes to the charities with the highest impact within each low-impact cause”

We can score this project on importance, tractability, and neglectedness to help decide if it’s worth working on.

Importance: Probably a 3⁄10 as this project is directed at low-impact causes. But the causes may be fairly important as lots of people care about them/are impacted by them enough to donate.

Tractability: I think 5⁄10. Charities like Cancer Research and WWF have monopolies over giving to these causes and dominate advertising. So I’m not sure how we could peel people away from that. But the fact that lots of people donate to these causes would probably make it easier to get donations to grant funds in these cause areas, though maybe they won’t attract the type of people who give through GWWC/EA.

Neglectedness: Not sure, I’d have to do some research. But I would guess it’s low because these are popular causes, so they would be very busy with researchers trying to increase impact.

So to conclude, I would say it would be hard to implement this project and compete in such busy and giant cause areas that invest a lot of money in advertising. The change in impact is most likely not as great as just directing people to more effective cause areas. Popular cause areas are so overcrowded that probably everything gets funded anyway.

Greetings! I’m a doctoral candidate and I have spent three years working as a freelance creator, specializing in crafting visual aids, particularly of a scientific nature. However, I’m enthusiastic about contributing my time to generate visuals that effectively support EA causes.

Typically, my work involves producing diagrams for academic grant applications, academic publications, and presentations. Nevertheless, I’m open to assisting with outreach illustrations or social media visuals as well. If you find yourself in need of such assistance, please don’t hesitate to get in touch! I’m happy to hop on a Zoom chat.

Just curious, why did you decide not to tackle AI risks? This seems like it would be more of a natural flow based on your interest in existential risk and experience with programming.

I am a researcher in the space community and I recently wrote a post introducing the links between outer space and existential risk. I’m thinking about developing this into a sequence of posts on the topic. I plan to cover:

Cosmic threats—what are they, how are they currently managed, and what work is needed in this area. Cosmic threats include asteroid impacts, solar flares, supernovae, gamma-ray bursts, aliens, rogue planets, pulsar beams, and the Kessler Syndrome. I think it would be useful to provide a summary of how cosmic threats are handled, and determine their importance relative to other existential threats.

Lessons learned from the space community. The space community has been very open with data sharing, and the utility of this for tackling climate change, nuclear threats, ecological collapse, animal welfare, and global health and development cannot be overstated. I may include perspective shifts here, provided by views of Earth from above and the limitless potential that space shows us.

How to access the space community’s expertise, technology, and resources to tackle existential threats.

The role of the space community in global politics. Space has a big role in preventing great power conflicts and building international institutions and connections. With the space community growing a lot recently, I’d like to provide a briefing on the role of space internationally to help people who are working on policy and war.

Would a sequence of posts on space and existential risk be something that people would be interested in? (Please vote agree or disagree on the post.) I haven’t seen much on space on the forum (apart from on space governance), so it would be something new.

Very interesting post, I haven’t thought about animal welfare in Africa before. I guess the first thing I (and most others) think of when I imagine philanthropy in Africa is human poverty and disease. With this perspective in mind, I wonder how this impacts funding sources from the western world… Getting money out of the “animal welfare budget” for this will probably work! But getting money out of the “philanthropy in Africa” budget I imagine will be very challenging.

This event is now open to virtual attendees! It is happening today at 6:30PM BST. The discussion will focus on how the space sector can overcome international conflicts, inspired by the great power conflict and space governance 80K problem profiles.

I’m not sure I agree with your appreciation of upper and lower cased EA. The article refers to “lower case” effective altruism as:

“A philosophy that advocates using evidence and reasoning to try to do the most good possible with a given amount of resources.”

That is EA and all of our cause areas and strategies have come out of that.

But the article seems to insinuate that some cause areas are somehow different to this philosophy of EA, being injected into us by people like SBF. The article defines “Upper case” effective altruism as “assigning numerical values to human suffering and the “worth” of current and future human beings”, which includes longtermism and earning to give. I don’t think this distinction makes sense, and I don’t think it’s good for the movement to oust unpopular areas of EA onto a different boat, like a plague that’s unjustified by the core of EA.

Near-termist vs longtermist EA is a neat distinction that helps when talking about the EA community and funding areas. But honestly I have no idea how the author of this article is drawing a line between “lower case” and “upper case” EA other than how the general public has responded to the movement. In my view of EA, everything is occurring in the same arena of debate under the umbrella of EA’s core principles—so it’s all “lower case”.

Woah, a really nice article that identified the most common criticisms of EA that I’ve come across, namely, cause prioritization, earning to give, billionaire philanthropy, and longtermism. Funnily enough, I’ve come across these criticisms on the EA forum more than anywhere else!

But it’s nice to see a well-researched, external, and in-depth review of EA’s philosophy, and as a non-philosopher, I found it really accessible too. I would like to see an article of a similar style arguing against EA principles though. Does anyone know where I can find something like that? A search for EA criticism on the web brings up angry journalists and media articles that often miss the point.