Goodmaxxing

(Crosspost).

If you’re young and online, you’re probably maxxing something.

Maybe you’re looksmaxxing: trying to maximize your hotness (e.g. by hitting yourself in the face with a hammer). Maybe, like Clavicular, you do it just to mog other people—to look better than they do.

But good looks hit diminishing marginal returns. You get a reasonable boost from going to the gym occasionally, but by the time you’re smashing yourself in the face with a hammer nightly, the additional gains might no longer seem worth the grind. One might even suggest that at that point you’re jestermaxxing.

Fortunately, there’s another, better kind of maxxing: goodmaxxing. Goodmaxxers maximize how much good they do, using reason and evidence to guide their actions. Why should you goodmaxx? Because goodmaxxing mogs looksmaxxing. As long as you stay in the goodmaxxing grindset, you can mog all the moneymaxxers, statusmaxxers and rizzmaxxers by doing hundreds of times more than they will to make the world a better place.

Ok, but if you’re goodmaxxing, what exactly is the “good” you’re maxxing? Well, that’s ultimately up to you to figure out. But, undeniably (proven beyond five sigma), there’s one clear sigma morality, and it’s utilitarianism:

It’s right there in the definition! Contrast this with the standard beta approach to doing good, where people simply go along with the conventions of society and never think hard about how they can do the most good. It is easy to goodmog such people.

Most people don’t try very hard to do good effectively; they’d rather go along with what’s socially conventional than do what’s best. Goodmaxxers, in contrast, aim to do as much good as possible, even in ways that sound weird (e.g. promoting shrimp welfare). Rather than valuing only the welfare of those they feel immediate sympathy for, goodmaxxers try to do as much good as they can. It’s better to be a based, empathy-pilled goodmaxxer than a cringe mecel.

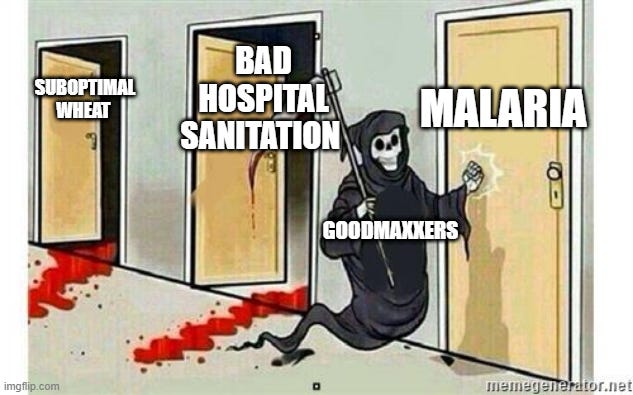

Many people throughout history have goodmaxxed to great effect. Norman Borlaug is credited with saving about a billion lives by breeding high-yield wheat. He didn’t do it for glamour or status; he did it just to moralmog.

Florence Nightingale saved millions of lives by making sanitation mainstream. Like Borlaug, she was motivated by the positive impact she could have on the world. Through careful thinking and high-quality data gathering, she convinced the world of the importance of sanitation. By evidencemaxxing, she was able to both goodmaxx and mog.

You can do incredible things by goodmaxxing. If you earn the median income of an American working full-time, giving away 10% of it can save someone’s life every year. And if you’re ambitious, you can do far more good. Several people have started companies aiming for major positive impact that went on to be valued at many billions of dollars. If you give away a million dollars, you’ll save around 200 people’s lives. If you give away a billion dollars, you can save nearer to 200,000 lives.
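Both of the post’s figures imply the same flat cost of roughly $5,000 per life saved (1,000,000 / 200 = 5,000 and 1,000,000,000 / 200,000 = 5,000). Here is a minimal sketch of that arithmetic, assuming that flat cost simply holds at every scale; the constant and function names are hypothetical, and real cost-effectiveness estimates vary by charity and over time.

```python
# Assumption: a flat cost per life saved, inferred from the post's own figures
# ($1,000,000 -> ~200 lives). Real estimates vary by charity and over time.
COST_PER_LIFE_USD = 5_000


def lives_saved(donation_usd: float) -> float:
    """Rough number of lives saved by a donation at the assumed flat cost."""
    return donation_usd / COST_PER_LIFE_USD


def income_for_one_life_per_year(giving_share_percent: int = 10) -> float:
    """Income at which giving this share of it covers one life per year."""
    return COST_PER_LIFE_USD * 100 / giving_share_percent


print(lives_saved(1_000_000))          # 200.0
print(lives_saved(1_000_000_000))      # 200000.0
print(income_for_one_life_per_year())  # 50000.0
```

At that assumed cost, giving 10% of your income covers about one life a year once you earn around $50,000.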

You can also work directly on other pressing global problems, and this can make a huge difference: meaningfully lowering the odds of a pandemic, an AI takeover, a coup, and more. Because so few people goodmaxx, if you are motivated by having an impact, you can often be one of a few dozen or a few hundred people working on the world’s biggest problems. You can make a big counterfactual difference, displaying an enormous mog differential.

Take AI alignment, the effort to make AI systems act in accordance with human values. Only a few hundred people work on AI alignment, even though many experts think the odds are non-trivial that misaligned AI will kill or disempower humanity. That means if you work on alignment, you can be one of a few hundred people addressing a risk with a meaningful chance of wiping out the species. The same goes for pandemic preparedness.

And some issues are even more neglected. For example, almost no one is working specifically on preventing AI-enabled coups, even though people who have researched the possibility tend to put the odds of one occurring somewhere between 0.1% and 10%.

Or consider AI behavioural design. The AIs of the future will be hugely influential. There are reasons to think we’re entering a world where AI will have world-transforming effects, doing more labor than all humans combined. Despite that, the number of people thinking through the alignment target for AI (working to give it better values and help it fit into human society) is extremely small. We are building miniature gods whose values are ours to shape, and only a few people are thinking deeply about what those values should be.

So if you’re ambitious, you can be one of just a few people working on some of the most important global priorities. This would be like being one of just a few people in the world working on sanitation or cancer research. Again, gotta up that mog differential.

If you’re goodmaxxing, you could consider futuremaxxing, too. If the future lasts a long time, it could contain many times more people than the present. Thus, actions that make the future better (e.g. by lowering the odds of an existential catastrophe) can affect trillions of people in expectation. The case for futuremaxxing (formerly known as longtermism) rests on a very simple ethical premise: future people matter, and because they could exist in such great numbers, we have strong moral reasons to promote their interests.

Now, why should you care so much about goodness that you reorient your life around bringing it about? We sometimes err by thinking of goodness as a vague, nebulous abstraction. But the bad is made up of many individual evil things: children dying, animals suffering in cages, whole generations being extinguished by the reckless actions of those before them. Good, similarly, is not some nebulous force in the universe; it is made up of individuals experiencing joy and love and achievement.

Insofar as each of those particular things matters, it is worth pursuing the good that is composed of them. And you can bring about the things that matter in incredible quantities. As Scott Alexander put it:

> This doesn’t mean concern must be virtue signaling or luxury beliefs. It just means that it requires principle rather than raw emotion. One death is a tragedy, a million deaths is a statistic. But if you’re interested in having the dignity of a rational animal (a perfectly acceptable hobby! no worse than trying to get good at Fortnite or whatever!) then eventually you notice that a million is made out of a million ones and try to act accordingly.

And this ethical commitment, that it’s better to do more good rather than less, is plain common sense. It’s better to save 100 people than just one. The good is good, so you should bring about more of it.

A final reason to goodmaxx is that doing good tends to make people happier. Having a meaningful existence, where you aim for something rather than drifting aimlessly through life, makes your life better. It makes you happier in a deeper way and anchors your day-to-day moments in something more substantive. By aiming for the good, you will likely benefit in the long run. By goodmaxxing, you end up joymaxxing and meaningmaxxing, too.

Because most of society isn’t goodmaxxing, goodmogging is easy. You can join a community of smart and talented people doing incredible amounts of good. You can be like Borlaug and Nightingale. Don’t go along with conventional moral mediocrity: goodmaxx!

I’m 31. Not very young anymore. But I’m definitely eyerollmaxxing. I can’t count how many times my eyes have rolled at the ridiculousness of things these days.

Clavicular makes me feel like his parents don’t care about him. Looksmaxxing should be about looking within and without to improve oneself, not destroying one’s mental health to look attractive on the outside. But I’m not in that ideal timeline right now.

I like the idea of goodmaxxing but feel like I am wayyy too old to know how seriously the sort of youngster who watches videos with “maxxing” in the title would take this blog :)

Might change my linkedin to “Based, empathy-pilled Goodmaxxer”

On a more serious note, I do wonder if there might be some genuine memetic potential here, if a few influencers get hold of it, or maybe a small news channel. I’m far too uncool to really know, but I do wonder.