Jeff, this is really lovely and I appreciate you thinking out loud through your reasoning. I’d be interested to hear what you think will be hard for them as they grow up with “parents with strong unusual views” and whether you think this would be qualitatively different from other unusual views (eg strongly religious, military family, etc).

Alexander Saeri

Very valuable piece, and likely worth a separate write up.

Michael, thanks for this post. I have been following the discussion about INT and prioritisation frameworks with interest.

Exactly how should I apply the revised framework you suggest? There are a number of equations, discussions of definitions and circularities in this post, but a (hypothetical?) worked example would be very useful.

Thanks for posting this, Nick. I’m interested in how you plan to run this course. Are you the course coordinator? Is there an academic advisor? Who are the intended guest lecturers and how would they work? Who are the intended students?

If you haven’t already, please upload a version to the Open Science Framework as a preprint: https://osf.io/preprints

I really appreciate this, Michelle. I’m glad to see this kind of piece on the EA forum.

Thanks Markus.

I read the US public opinion on AI report with interest, and have thought about replicating it in Australia. Do you think having local primary data is relevant for influence?

Do you think the marginal value lies in primary social science research or in aggregation and synthesis (eg rapid and limited systematic review) of existing research on public attitudes and support for general purpose / transformative technologies?

Friendly suggestions: expand CHAI at its first mention in a post, for readers who are not as familiar with the acronym; clarify the month and day (eg Nov 11) for readers outside the United States.

I’m a researcher based in Australia and have some experience working with open/meta science. Happy to talk this through with you if helpful, precommitting to not take any of your money.

Quick answers, most of which are not one-off donation targets but ideas that would require a fair amount of setup and maintenance.

- $250,000 would be enough to support a program for disseminating open / meta science practices among Australian graduate students (within a broad discipline), if you had a trusted person to administer it.
- You could have a prize for the best open access paper published by a non-PhD.
- You could fund a conference such as AIMOS https://aimos.community/ (I have no affiliation and no knowledge of how effective this is).
- You could ask the Center for Open Science people how to spend the money effectively.

If you’re comfortable sharing these resources on prioritisation and coordination, please also let me know about them.

Thanks for this list. Your EA group link for Focusmate just goes to the generic dashboard. Do you have an updated link you can share?

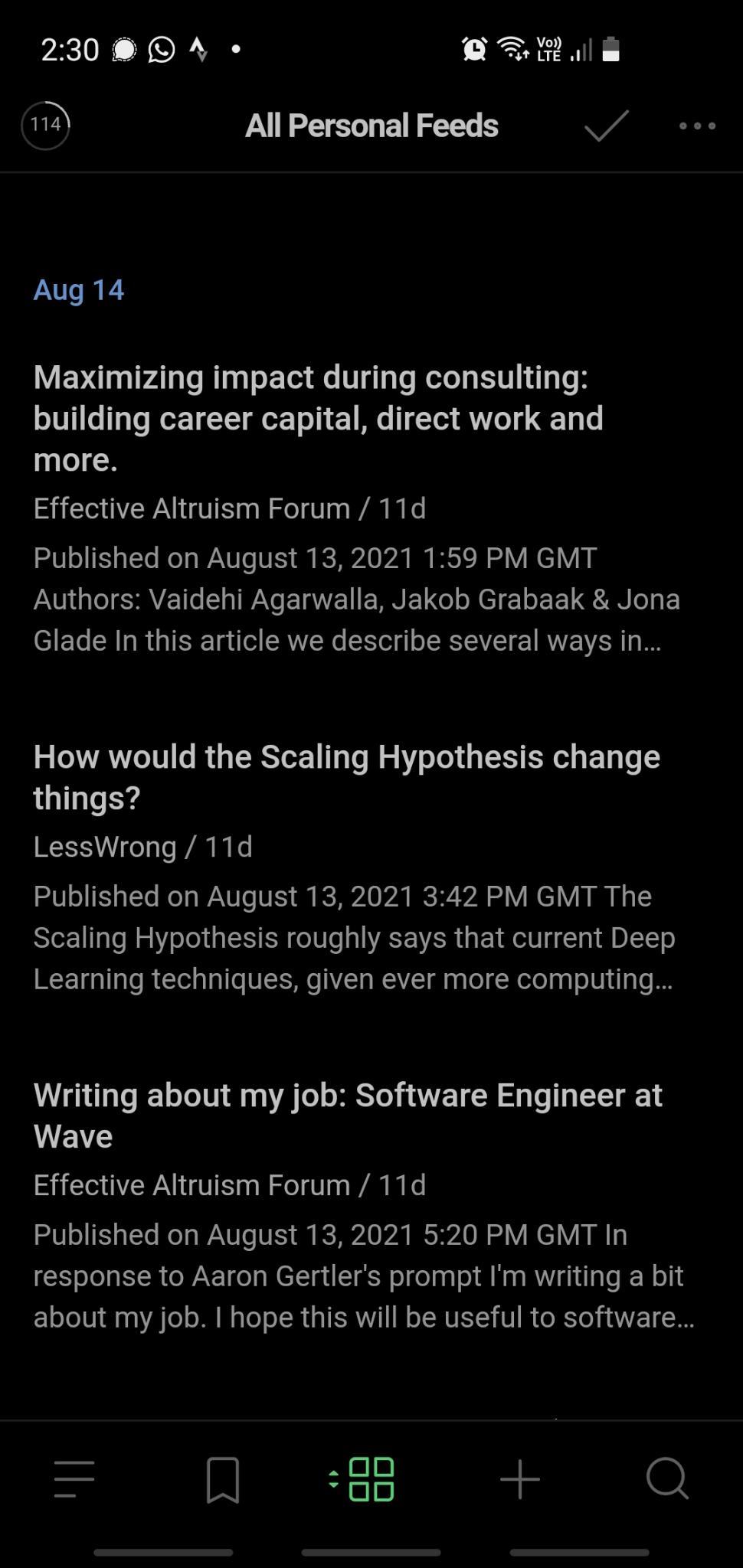

I use Feedly to follow several RSS feeds, including everything from the EA forum, LessWrong, etc. This lets me read more EA-adjacent/aligned content than if I visited each website infrequently because Feedly has an easy to use app on my phone.

Here is a browser screenshot of my Feedly sidebar (I almost never use a browser). Here is an example of the Feedly ‘firehose’ from my mobile phone, previewing several posts from EA forum and elsewhere. I liken it to a ‘firehose’ in that I get everything, including all the personal blogs and low-effort content that would otherwise be hidden by the website sorting algorithm. There’s also no (displayed) information in Feedly about the number or content of comments. Instead, I need to open each interesting post to find out if someone has commented on it.

For some posts, the post content is the most valuable. In other posts, the commentary is the most valuable, and Feedly/RSS does a bad job of exposing this value to me easily. I also find that engagement is highest within the first 1-2 days of a post, but takes several hours to start.

All of this is to say that I think the ‘right’ feed is probably still something like one or more RSS feeds—especially given their interoperability and ease of use—but that the user experience is likely to be highly variable depending on their needs and appetite for other- vs self-curation of what is in the feed.

Akhil, thanks for this post. Your post happened to coincide with an email I received about a new article and associated webinar, “Centring Equity in Collective Impact”. You and others in this space might find it relevant:

Thanks for cross-posting this, Peter. I may be biased but I think this is a great initiative.

Do you have any info about the kinds of people who are reading a newsletter like this? Eg, are they mostly EAs who are interested in behaviour science, or mostly behaviour scientists who are interested in EA?

Great to see this initiative, Vael. I can think of several project ideas that could use this dataset of interviews with researchers.

Edit:

- Analyse transcripts to identify behaviours that researchers and you describe as safe or unsafe, and identify influences on those behaviours (this would need validation with follow-up work). Outcome: an initial answer to the concrete question “who needs to do what differently to improve AI safety in research, and how”.
- Use the actors identified in the interviews to create a system/actor map to help understand flows of influence and information. Outcome: a better understanding of power dynamics of the system and opportunities for influence.
- With information about the researchers themselves (especially if there are 90+), you could begin to create a typology / segmentation to try to understand which types are more open / closed to discussions of safety, and why. Outcome: a strategy for outreach or further work to change decision making and behaviour towards AI safety.

Thanks for this Sean! I think work like this is exceptionally useful as introductory information for busy people who are likely to pattern match “advanced AI” to “terminator” or “beyond time horizon”.

One piece of feedback I’ll offer is to encourage you to consider whether your last point, “there is work that can be done”, could show how current efforts to address narrow AI ethics concerns are linked to AGI alignment. This is especially relevant for governance. This could help people understand why it’s important to address AGI issues now, rather than waiting until narrow AI ethics is “fixed” (a misperception I’ve seen a few times).

I’m really excited to see this survey idea getting developed. Congratulations to the Rethink team on securing funding for this!

A few questions on design, content and purpose:

Who are the users for this survey, how will they be involved with the design, and how will findings be communicated with them?

In previous living / repeated survey work that I’ve done (SCRUB COVID-19), having research users involved in the design was crucial for it to influence their decision-making. This also got complex when the survey became successful and there were different groups of research users, all of whom had different needs.

Because “what gets measured, gets managed”, there is a risk / opportunity in who decides which questions should be included in order to measure “awareness and attitudes towards EA and longtermism”.

Will data, materials, code and documentation from the survey be made available for replication, international adaptation, and secondary analysis?

This could include anonymised data, Qualtrics survey instruments, R code, Google docs of data documentation, etc.

Secondary analysis could significantly boost the current and long-term value of the project by opening it up to other interested researchers to explore hypotheses relevant to EA.

Providing materials and good code & documentation can help international replication and adaptation.

Was there a particular reason to choose a monthly cycle for the survey? Do you have an end date in mind or are you hoping to continue indefinitely?

Do you anticipate that attitudes and beliefs would change that rapidly? In other successful ‘pulse’ style national surveys, it’s more common to see yearly or even less frequent measurement (here’s one great example of a longitudinal values survey from New Zealand).

Is there capacity to effectively design, conduct, analyse, and communicate at this pace? In previous work I’ve found that this cycle—especially in communicating with / managing research users, survey panel companies, etc—can become exhausting, especially if the idea is to run the survey indefinitely.

In terms of specific questions to add, my main thought is to include behavioural items, not just attitudes and beliefs.

Ways of measuring this could include “investigated the effectiveness of a charity before donating on the last occasion you had a chance”, or “donated to an effective charity in past 12 months”, or “number of days in the past week that you ate only plant-based products (no meat, seafood, dairy or eggs)”.

Through the SCRUB COVID-19 project, we (several of us at Ready) ran a survey of 1700 Australians every 3 weeks for about 15 months (2020-2021) in close consultation with state policymakers and their research users. Please reach out if you’d like to discuss / share experiences.

This was great fun, and I enjoyed contributing to it!

Thanks for this detailed write-up, Ninell. I’ll be applying several of the principles for organisation and roles to a version of AGISF I’m facilitating in Australia in late 2022.

Thanks for this excellent piece, Karolina. In my work (research enterprise working w/ government and large orgs), we are constantly trying to get clarity on the implicit theory of change that underpins the organisation, or individual projects. In my experience, the association of ToC with large international development projects has meant that some organisations see them as too mainstream/stodgy/not relevant to their exciting new initiative. But for-profit businesses live or die on their ToC (aka business model), regardless of whether they are large or small.