Co-founder of Nonlinear, Charity Entrepreneurship, Charity Science Health, and Charity Science.

Kat Woods

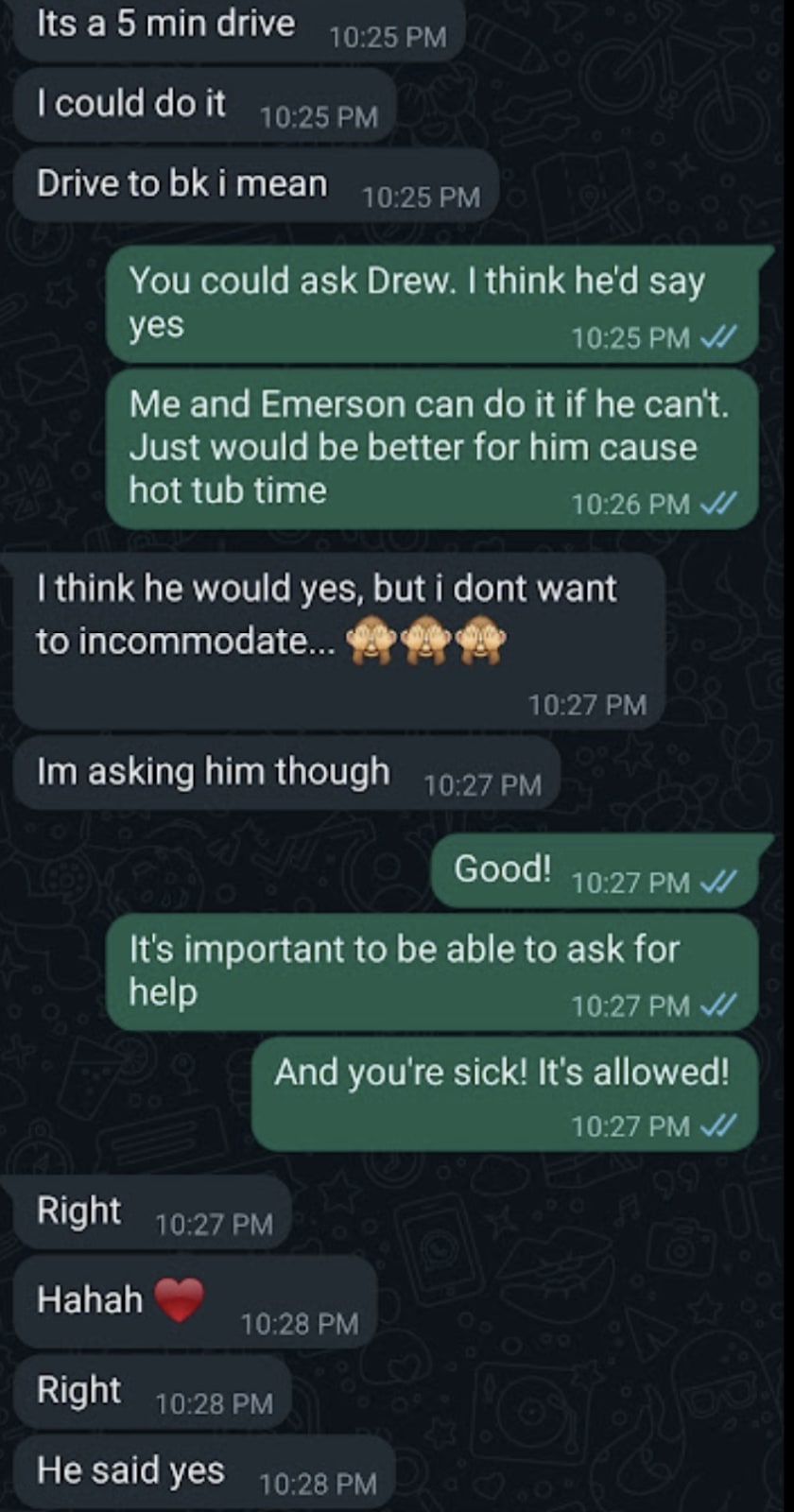

One example of the evidence we’re gathering

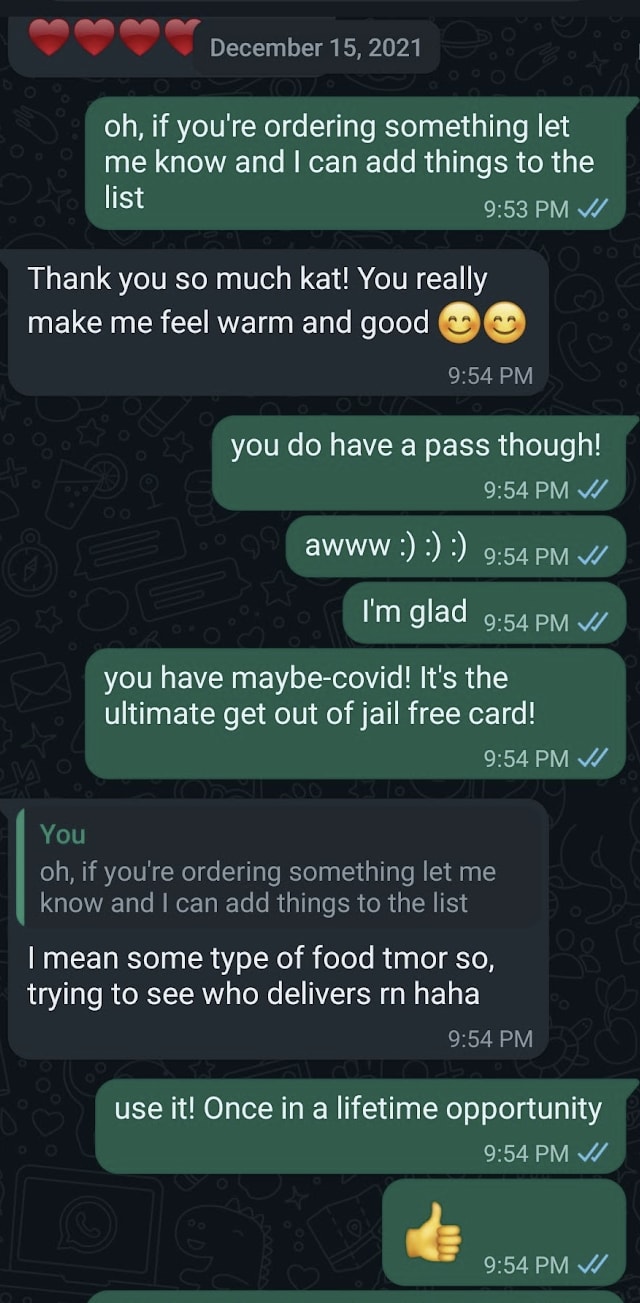

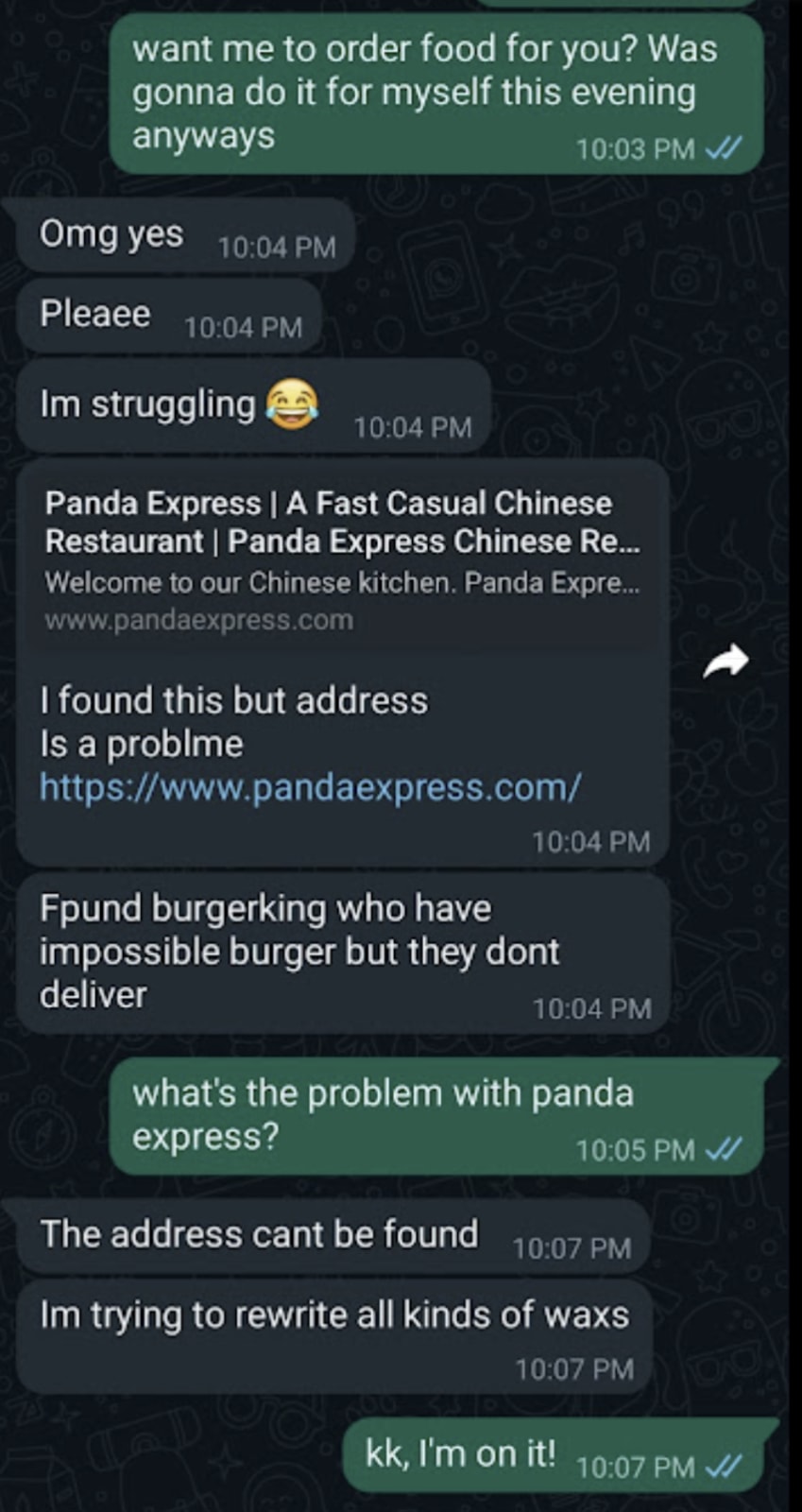

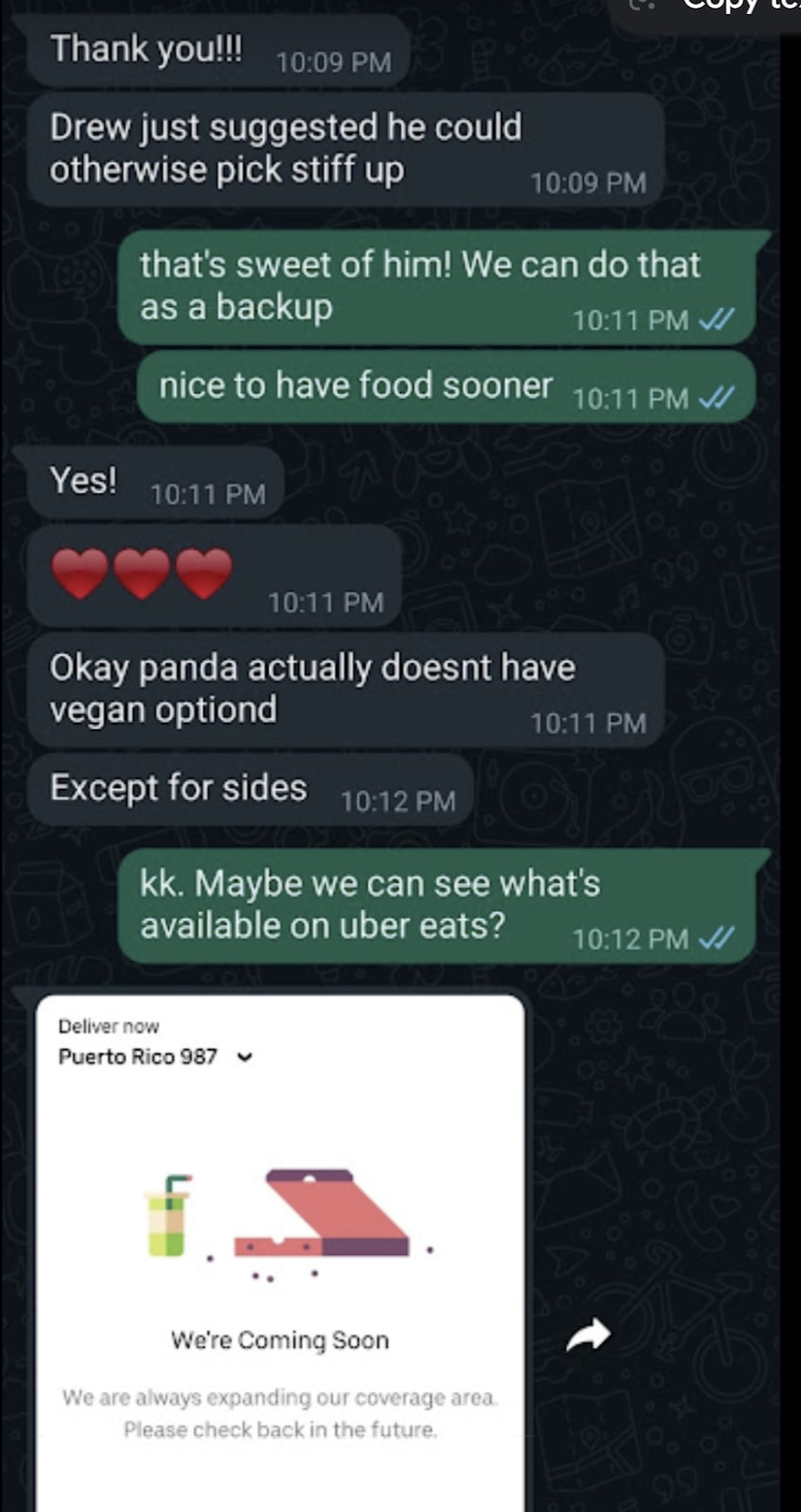

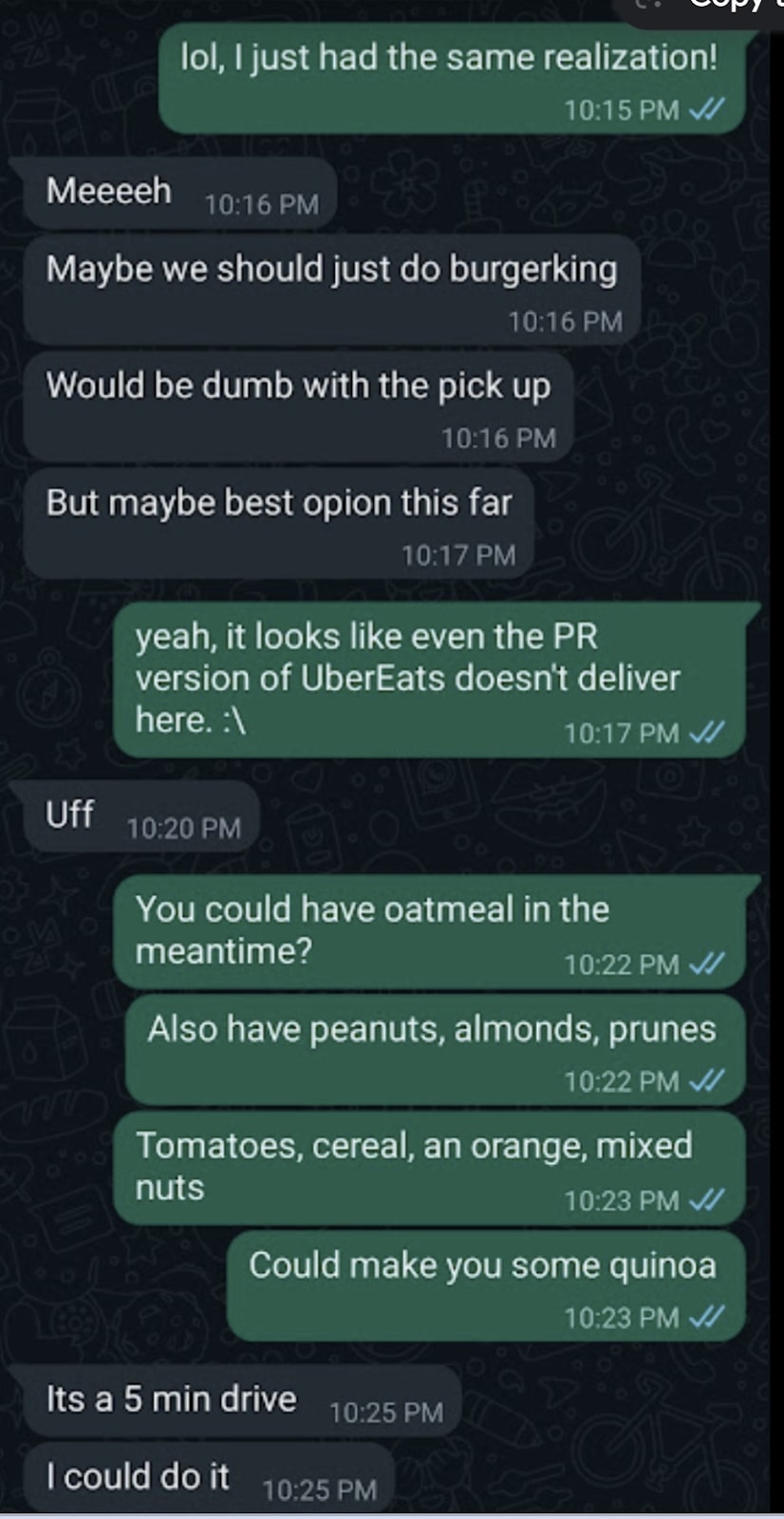

We are working hard on a point-by-point response to Ben’s article, but wanted to provide a quick example of the sort of evidence we are preparing to share:

Her claim: “Alice claims she was sick with covid in a foreign country, with only the three Nonlinear cofounders around, but nobody in the house was willing to go out and get her vegan food, so she barely ate for 2 days.”

The truth (see screenshots below):

There was vegan food in the house (oatmeal, quinoa, mixed nuts, prunes, peanuts, tomatoes, cereal, oranges) which we offered to cook for her.

We were picking up vegan food for her.

Months later, after our relationship deteriorated, she went around telling many people that we starved her. She included details that depict us in a maximally damaging light—what could be more abusive than refusing to care for a sick girl, alone in a foreign country? And if someone told you that, you’d probably believe them, because who would make something like that up?

Evidence

The screenshots below show Kat offering Alice the vegan food in the house (oatmeal, quinoa, cereal, etc), on the first day she was sick. Then, when she wasn’t interested in us bringing/preparing those, I told her to ask Drew to go pick up food, and Drew said yes. Kat also left the house and went and grabbed mashed potatoes for her nearby.

See more screenshots here of Drew’s conversations with her.

Initially, we heard she was telling people that she “didn’t eat for days,” but she seems to have adjusted her claim to “barely ate” for “2 days”.

It’s important to note that Alice didn’t lie about something small and unimportant. She accused us of a deeply unethical act, the kind that most people would hear and instantly think you must be a horrible human, and was caught lying.

We believe many people in EA heard this lie and updated unfavorably towards us. A single false rumor like this can unfairly damage someone’s ability to do good, and this is just one among many she told.

We have job contracts, interview recordings, receipts, chat histories, and more, which we are working full-time on preparing.

This claim was a few sentences in Ben’s article but took us hours to refute because we had to track down all of the conversations, make them readable, add context, anonymize people, check our facts, and write up an explanation that was rigorous and clear. Ben’s article is over 10,000 words and we’re working as fast as we can to respond to every point he made.

Again, we are not asking for the community to believe us unconditionally. We want to show everybody all of the evidence and also take responsibility for the mistakes we made.

We’re just asking that you not overupdate on hearing just one side, and keep an open mind for the evidence we’ll be sharing as soon as we can.

I’m so impressed that Pablo asked for an external review when he was feeling potentially burnt out and not sure about the impact of the wiki. That takes some incredible epistemic (and emotional!) chops. This is an example of EA at its finest.

I think it’s valuable to have social experiments. However, I do think the social experiment of living and working with your employees while traveling has now been experimented with and the results are “it’s very risky”. I’ve been doing it with Emerson and Drew for years now and it’s been fine, but I think we have a really good dynamic and it’s hard to replicate.

As for HR professionals, we had only 3 full-time people at the time, so that would have been too early/small for us to have one.

For safeguarding policies, Chloe was working on creating those. But yeah, she was our first full-time employee where we could even have policies, so it was understandable not to have them yet.

For regular working hours, we did. Chloe only ever worked once on a weekend and never again (she said she didn’t like it, and we set up a policy to never do it again).

For offices in a normal city, I don’t think that should matter much. Rethink Priorities is fully remote last I checked and in all sorts of cities and it’s fine.

As for work/life boundaries, I think the biggest thing was to not live with employees, which we are no longer doing. It’s worked in the past for me but I think it’s just too risky.

[Edit: for anybody reading this now, I am very happy to talk to anybody about what happened. Simply reach out to me at katwoods [at] nonlinear [dot] org and I’d be happy to provide more information.]

Hi anonymous,

First, I want to say that I do believe you have good intentions. Second, an important point about source diversity: we have heard from many people in the community that one particular disgruntled ex-employee was previously widely spreading these accusations.

While this person, no doubt, wasn’t the only disgruntled ex-employee, most people hear these allegations secondhand, and this creates an echo chamber where it can appear that there were more disgruntled ex-employees than actually existed.

I’d also like to ask, when did you get this information? There was a period in which we had an open disagreement with one of the former employees, and we believe we have since rectified it. It’s possible we already addressed some of these issues after you heard about them.

This is a problem with unsubstantiated rumors and gossip: people might hold an opinion about something even after that problem was fixed.

I have general sense that they had a pattern of taking on young, idealistic interns with poor ability to stand up for themselves and exploiting them to a standard many would consider unacceptable (e.g. manipulating them into accepting unreasonably little pay, and sometimes not following through on payment when they thought they could get away with it).

We can provide concrete evidence in terms of bank screenshots and recorded interviews showing that this is not true. We are happy to talk to CEA about it if they would like.

For mentorship, I do not know the standard for mentorship, but some previous interns found working at Nonlinear to be lifechanging. Some, I’m sure, wished there had been more mentorship.

Some people have not liked that we have unpaid internships, but we have always been up front and clear about that in our job ads and interviews.

For the accusation of the payment being delayed, we have heard that particular employee’s claims, and we can show receipts of DMs and bank transactions showing that they were saying things that are verifiably incorrect.

As I understand things, in July 2022 a group of Nonlinear employees and interns quit because they were unhappy with either their own treatment or the treatment of their coworkers.

There were two employees who left in June. This is a complicated topic and we would rather respect our ex-employees’ privacy by not mentioning the details publicly. Happy to talk with CEA about it.

I’ve also heard that Emerson can be retributive, and that some people around Nonlinear were scared about Emerson finding out they’d spoken badly about him. (Generally speaking, to the extent that things were bad at Nonlinear, I have the general sense that Emerson, and not Kat, was the main source of bad behavior.)

This is a vague accusation that is hard to address. We can’t prove a negative.

I feel quite conflicted about posting this because:

The people I’ve spoken to also think that Nonlinear has done a lot of good, despite their mistreatment of employees and interns. But I’ve recently become quite weary of this “ends justify the means”-type reasoning

This is gossip without providing any evidence and is impossible to disprove.

I personally am a mix of a rule utilitarian combined with a moral counsel approach due to moral uncertainty.

I can’t share any specifics because anything specific was told to me in confidence; I also have no way of knowing whether the things I’ve heard were exaggerated. Additionally, a lot of what I was told I remember only vaguely.

This is a good reason to hear both sides before publicly accusing somebody of something.

Given that none of the people wronged spoke up, it’s not clear that I should (due to concerns about the reliability of secondhand knowledge, for example).

In the future, I would ask both sides first before making allegations like this, especially in a public forum, because most people won’t come back to re-read the comments later.

But I decided to post anyway because I like to think that I’ve learned the hazards of waiting until after misbehavior is publicly revealed to write about the evidence that I had all along. Sorry for any unfortunate consequences of this comment.

People should definitely look into allegations against nonprofits. However, it’s important to look into them, not just report hearsay without doing proper due diligence. It’s important that EAs maintain good epistemics, not just publicly report any gossip that they’ve heard.

We definitely did not fail to get her food, so I think there has been a misunderstanding: it says in the texts below that Alice told Drew not to worry about getting food because I went and got her mashed potatoes. Ben mentioned the mashed potatoes in the main post, but we forgot to mention it again in our comment, which has been updated.

The texts involved on 12/15/21:

I also offered to cook the vegan food we had in the house for her.

I think that there’s a big difference between telling everyone “I didn’t get the food I wanted, but they did get/offer to cook me vegan food, and I told them it was ok!” and “they refused to get me vegan food and I barely ate for 2 days”.

Also, re: “because of this professional/personal entanglement”—at this point, Alice was just a friend traveling with us. There were no professional entanglements.

Why would it be bad if he was given advance warning about this report? There’s nothing in here about him being retaliatory. It seems probably good to hear the other side and be given a chance to look at the post before it goes live.

Also, it does say in the document that Owen was given advance notice. His document says that he saw the draft and disagreed with aspects of it that they didn’t address in the post.

Hi Amber. We were working as fast as we could on examples of the evidence. We have since posted this comment here, demonstrating Alice claiming that nobody in the house got her vegan food when we have evidence that we did.

The claim in the post was “Alice claims she was sick with covid in a foreign country, with only the three Nonlinear cofounders around, but nobody in the house was willing to go out and get her vegan food, so she barely ate for 2 days.” (Bolding added)

If you follow the link, you’ll see we have screenshots demonstrating that:

1. There was vegan food in the house, which we offered her.

2. I personally went out, while I was sick myself, to buy vegan food for her (mashed potatoes) and cooked it for her and brought it to her.

I have empathy for Alice. She was hungry (because of her fighting with a boyfriend [not Drew] in the morning and having a light breakfast) and sick. That sucks, and I feel for her. And that’s why I tried to get her food, and succeeded.

I would be fine if she told people that she was hungry when she was sick, and she felt sad and stressed. Or that she was hungry but wasn’t interested in any of the food we had in the house. But she told everybody that we didn’t get her food when we did. This made us look like uncaring people, which we are not. She even said in her texts that she felt loved and supported.

We chose this example not because it’s the most damning (although it certainly paints us in a very negative and misleading light) but simply because it was the easiest claim to explain where we had extremely clear evidence without having to add a lot of context, explanation, find more evidence, etc.

Even so, it took us hours to put together and share, because we had to track down all of the old conversations, make sure we weren’t getting anything wrong, anonymize Alice, format the screenshots (they kept getting blurry), and, importantly, write it up. Writing for the EA Forum/LessWrong is already quite difficult, since it is reasonable to assume that people will point out any little detail you get wrong. I ask you to please empathize with how it must be for us now, given that so many people currently see everything we post through the lens of us being unethical people.

If you’ve ever spent a long time trying to get a post just right for the EA Forum/LessWrong, I ask you to empathize with what we’re going through here.

We also had to spend time dealing with all of the other comments while trying to pull this together. My inbox is completely swamped. Not to mention trying to hold myself together when the worst thing that’s ever happened to me is happening.

We simply meant that original comment to be a placeholder while we spent time gathering and sharing the evidence. We simply wanted people to withhold judgment.

We continue to ask this. We are all working full-time on gathering, organizing, and explaining all of the evidence. We expect to put out multiple posts over the next few weeks showing more evidence like the above.

We think that if you see the evidence, a sizeable percentage of you will update and think that we are not “predators” to be avoided, but rather good but far from perfect people trying to do good. We made some mistakes, and we learned from them and set up ways to prevent them. There are also misrepresentations of the truth that are happening that we will provide evidence for. Please keep this hypothesis in mind and we’ll send you the evidence to support it as soon as we can.

Teaching buy-out fund

Allocate EA Researchers from Teaching Activities to Research

Problem: Professors spend a lot of their time teaching instead of researching. Many don’t know that many universities offer “teaching buy-outs”, where if you pay a certain amount of money, you don’t have to teach. Many also don’t know that a lot of EA funders would be interested in paying for that.

Solution: Make a fund that’s explicitly for this, to make it so more EAs know. This is the 80/20 of promoting the idea. Alternatively, funders can just advertise this offering in other ways.

There isn’t a track record of retaliation. We didn’t retaliate against your sources. We know who almost all of them are and what they said and nothing happened to them.

Alice’s messages simply show me saying that if she continued sharing her side, I would share mine. Sharing your side is not unethical.

And the examples that people gave of retaliation for Emerson were of him being sued and people sharing their side online, and him replying saying he’d countersue and he’d share his side (which he hadn’t done yet). This isn’t unethical, but a very reasonable thing to do.

For the libel, Ben knowingly said multiple things that were false and damaging, and he said dozens of things that he could have easily known were false if he’d just waited a week out of 6 months.

But we never wanted to sue Ben. We just wanted Ben to give us time to look at the evidence we were more than willing to share with him. I really recommend reading this section, because I think it gets across very well what was happening.

Here’s a quick excerpt:

However, saying it’s wrong to threaten a lawsuit with Lightcone would be like if somebody drew a gun on you and you tried to knock the gun out of their hands. If the gun-wielder then goes around saying that “you hit them” they’re missing a critical detail in the story.

Ben knowingly published numerous falsehoods that were extremely damaging to us. He published dozens more libelous falsehoods from Alice and Chloe which he could have easily avoided if he’d just looked at the evidence. He knew he was about to wreck our ability to do good and cause immense personal suffering to us.

He heard somebody—who has a reputation for dishonesty—yell “thief!” and shot us in the stomach before he could check and see if we were actually thieves. He was unwise and reckless. You shouldn’t shoot people that easily. Especially when you know that the person yelling it has told you lies before. But he was well-intentioned nonetheless.

And even as Ben proudly says he’d shoot us again, we’re saying that the real villain is unaligned AI and let’s focus on that. We should not be fighting each other. We don’t want to fight.

Ben, we’re on the same side. We all want to make AI go well.

You were misled. By women who need help and compassion, no doubt. But the way to help them isn’t to shoot us. It’s to actually try to understand the situation, then go from there.

Remember: the way that good people do bad things is to demonize the other. So even if some of you might be very mad at Ben for doing this, I call on everybody to try to be their best selves. To set off an upward spiral. To remember that Ben had good intentions. His methods were bad and the outcomes disastrous, but the way to solve that is not to shoot him. The way to solve it is to creatively problem-solve, assume good intent, and remember the bigger picture.

To always remember:

Almost nobody is evil

Almost everything is broken

Almost everything is fixable

And the accusation of threatening to hire stalkers is just a really weird accusation. That should be an indicator that somebody is not mentally alright.

I’m really sad too that we couldn’t just talk. I hope we still might be able to, once you’ve read the document and see that the retaliation reputation was unwarranted. I would really love to talk. I think trying to do conflict resolution in a high stakes, hostile, and public venue is less likely to work than if we can talk face-to-face and have a higher bandwidth conversation.

Honestly, I wish we’d already invented mind reading technology, because I’d just let you read my mind, unfiltered. I know that if you could, you’d see that I really have no negative intentions and I’m really just trying to figure out how to make everybody happy and reduce suffering. This situation is complicated and I certainly can sometimes unintentionally cause harm, and I hate that, and I’m always working on trying to prevent that. But I really do just want everybody to be happy, including you. Anyways, for now we don’t have mind-reading technology that’s accurate or cheap enough, so we’ll have to make do with me trying to convey through text that I really am not retaliatory. If you hurt me, I will try to understand you, try to help you understand me, then try to collaboratively problem-solve.

Incubator for Independent Researchers

Training People to Work Independently on AI Safety

Problem: AI safety is bottlenecked by management and jobs. There are <10 orgs you can do AI safety full time at, and they are limited by the number of people they can manage and their research interests.

Solution: Make an “independent researcher incubator”. Train up people to work independently on AI safety. Match them with problems the top AI safety researchers are excited about. Connect them with advisors and teammates. Provide light-touch coaching/accountability. Provide enough funding so they can work full time or provide seed funding to establish themselves, after which they fundraise individually. Help them set up co-working or co-habitation with other researchers.

This could also be structured as a research organization instead of an incubator.

EA Marketing Agency

Improve Marketing in EA Domains at Scale

Problem: EAs aren’t good at marketing, and marketing is important.

Solution: Fund an experienced marketer who is an EA or EA-adjacent to start an EA marketing agency to help EA orgs.

AGI Early Warning System

Anonymous Fire Alarm for Spotting Red Flags in AI Safety

Problem: In a fast takeoff scenario, individuals at places like DeepMind or OpenAI may see alarming red flags but not share them because of myriad institutional/political reasons.

Solution: Create an anonymous form, a “fire alarm” (like a whistleblowing Andon cord of sorts), where these employees can report what they’re seeing. We could restrict the audience to a small council of AI safety leaders, who then can determine next steps. This could, in theory, provide days to months of additional response time.

Alignment Forum Writers

Pay Top Alignment Forum Contributors to Work Full Time on AI Safety

Problem: Some of AF’s top contributors don’t actually work full-time on AI safety because they have a day job to pay the bills.

Solution: Offer them enough money to quit their job and work on AI safety full time.

Thank you for the empathy. Means a lot to me. This has been incredibly rough, and being expected to exhibit no strong negative emotions in the face of all of this has been very challenging.

And, yes, I do think an alternative timeline like that was possible. I really wish that had happened, and if the multiverse hypothesis is true, then it did happen somewhere, so that’s nice to think about.

Thanks for the questions!

1) Was Ben’s paraphrase (quoted above) inaccurate?

Yes. We wouldn’t ask somebody to travel across borders with illegal drugs. We thought they were legal where she was going, and that’s the only reason we asked her. We actually recommended she not travel across borders with illegal recreational drugs, which she was in the habit of doing.

2) Was Ben’s claim that Emerson said the summary of his call with Nonlinear (of which Ben’s paraphrase was a part) “good summary” false?

Yes, it was false. We told him that. We sent him multiple emails saying that the article was riddled with falsehoods and misleading claims. The full sentence was “Good summary. Some points still require clarification”. I think it was very intellectually dishonest of Ben to publish just one part of the sentence.

3) What were the so-called “recreational drugs”, if there were any? Were they legal drugs, obtained with a prescription, but used recreationally?

We didn’t ask for any recreational drugs across borders. We asked for one pack of productivity medicine, which we thought was legal where she was going. When we found out it required a prescription, we said never mind.

Audiobook version: [new] Aaron made an awesome audiobook version here.

[Original] It’s easy to turn it into an audiobook version with Evie or Natural Reader for anybody who likes to read with their ears instead of their eyes. Full guide I wrote up on how to turn everything into audio here

Also, Tobias, if you want to make a super simple audiobook version of the book, I recommend using Amazon Polly. It’ll probably cost under $100 and take less than 10 hours and increase the number of people who read your book by a lot. I know a ton of people who only read with their ears or who are more than 10x as likely to read something if there’s an audio version. Even I only found out about this book because I listened to the article on the Nonlinear Library (sorry for the shameless but relevant plug 😛)

Finally, congrats on the book! So far I’m loving it. Thank you for writing it. I think s-risks deserve more attention in the EA movement and think this book will help move the needle.

Top ML researchers to AI safety researchers

Pay top ML researchers to switch to AI safety

Problem: <.001% of the world’s brightest minds are working on AI safety. Many are working on AI capabilities.

Solution: Pay them to switch. Pay them their same salary, or more, or maybe a lot more.

EA Productivity Fund

Increase the output of top longtermists by paying for things like coaching, therapy, personal assistants, and more.

Problem: Longtermism is severely talent constrained. Yet, even though these services could easily increase a top EA’s productivity by 10-50%, many can’t afford them or would be put off by the cost (imposter syndrome or just because it feels selfish).

Solution: Create a lightly-administered fund to pay for them. It’s unclear what the best way would be to select who gets funding, but a very simple decision metric could be to give it to anybody who gets funding from Open Phil, LTFF, SFF, or FTX. This would leverage other people’s existing vetting work.

This is fantastic! Thank you for making this.

Would you like us to convert the readings into audio to make it easier for people to participate? This would be pretty easy on our end.

@EV US Board @EV UK Board could you include Owen’s response document somewhere in the post? It contains a lot of important information and it’s getting lost in the comments.