Nobody ever talks about this, but a cult infiltrated and took over EA.

People do talk about Leverage—that is how you know about them—but they did not take over EA, and nor does the comment you cite from Habryka claim this.

Why did the EA organizations find it disappointing? I’m afraid you’re going to say they didn’t like that peer reviewers didn’t agree with them

Nope. There have been a variety of issues. One is speed, and another is the difficulty of finding relevant experts. Thinking back to MIRI’s experience with the Damascus paper, my recollection (possibly incorrect) is that their final conclusion was that getting published in a good journal took a lot of time, didn’t really improve the fundamental quality of the work much, and also didn’t yield a lot of prestige/outreach benefits.

Very few people outside of EA consider EA’s idiosyncratic ideas to be serious and credible. What is the strategy for gaining credibility outside the EA echo chamber? Right now, it seems to be a media strategy that counts on people not fact checking EA’s messaging.

Come on, I understand you have objections to METR’s methodology—though to my knowledge you have not published those objections in a peer-reviewed journal—but blithely accusing them of a deliberate strategy of misinformation seems low.

A number of EA orgs have invested considerable time and effort into the conventional peer review process and found it disappointing for a number of reasons. If you think it would be an effective method of persuasion it would be good to hear some evidence of this. My impression is that this is not the case; the power of institutional gatekeepers has fallen dramatically over time, and what matters is producing high quality work. (And the fact that your recommended provider appears to be a scam seems like evidence against as well!)

I’m curious as to your reasoning behind this example:

Let’s assume we take a talented Malawian worker earning $2000 a year (4x the national average) and help them to move to Germany:

Not only does their personal income increase by 25x, but if they send 10% of their income home in remittances ($5,500), they can double the incomes of ten of their family members.

My understanding is that sub-Saharan immigrants to Germany typically earn well below average German incomes; is your plan that you will be much more selective?
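As a quick sanity check on the quoted figures, here is a minimal Python sketch. It takes the $2000 income, the "4x the national average" ratio, the 25x multiplier and the 10% remittance rate from the quote; the variable names are mine, and the even split across ten relatives is an assumption for illustration.

```python
# Figures taken from the quoted example; variable names are illustrative.
malawi_income = 2_000                 # USD per year, as stated in the quote
national_average = malawi_income // 4 # implied by "4x the national average"
income_multiplier = 25                # "personal income increase by 25x"
remittance_percent = 10               # "send 10% of their income home"
family_members = 10                   # "ten of their family members"

german_income = malawi_income * income_multiplier
remittances = german_income * remittance_percent // 100
# Assumption: remittances are split evenly across the ten relatives.
per_relative = remittances // family_members

# Income that would be needed for 10% of it to equal the quoted $5,500.
implied_income = 5_500 * 100 // remittance_percent

print("Income after 25x increase:", german_income)
print("10% remittances:", remittances)
print("Per relative:", per_relative, "vs national average:", national_average)
print("Income implied by $5,500 in remittances:", implied_income)
```

On these inputs, 10% of a 25x income comes to $5,000 rather than the quoted $5,500, which would instead correspond to an income of $55,000. Either way, the "double the incomes" claim only holds if the migrant actually earns close to that level, which is why the selectivity question matters.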

It seems like a significant part of the motivation here is you want to change the voting system to prevent a party you dislike from coming to power; this seems pretty anti-democratic to me. In general I think we should disapprove of efforts from incumbent governments to replace the rules they benefited from with a new set just in time to undermine their would-be successors.

The situation would be pretty different if Reform approved of this change, but I don’t get the impression they do?

You might want to explain what ‘CHW’ stands for?

Thanks for sharing your thoughts.

It strikes me that this implies it would be very useful if you were able to share some information with SFF about which bucket various applications fell into.

Thanks for replying!

I think you can just skip the submission step. There’s no need to require submissions at all. By submitting to the FDA or equivalent, you’re automatically deemed to have submitted.

Yes, there are some context-specific elements, but they seem relatively small. Notably, once the FDA approves a drug, it is legal to use for any indication, and for any patient, in the US, even those the FDA did not label it for. I understand that there are biological differences in drug responses between different ethnic groups, but these are typically not that large, and note that the FDA already has a large population of African Americans, with ancestry from all across the continent, within its jurisdiction. Supply chain limitations in Africa, heat-resiliency, disease ecology etc. are real issues. But do they really justify multi-year delays for ordinary treatments? I think it is very unlikely that the cost-benefit analysis would come out this way. The EU runs EU-M4all, but this is aimed at products intended for non-EMA markets; it doesn’t mean that normal drugs approved for use in the EU are inappropriate for Africa.

To the extent that post-approval surveillance is a problem, it is actually good to outsource approval to developed countries, because this would free up scarce resources for surveillance.

The idea of accountability seems more like a rhetorical / nationalist issue than a real one to me. The current degree of accountability national African regulators face seems pretty small in practice, and your preferred solution, an Africa-wide version, would also have accountability issues. I think ordinary Africans would be better served by a competent and (relatively!) swift international drug authority with no formal accountability to them than by less-competent and slower national regulators with a veneer of accountability.

It really doesn’t seem that bad to me for African countries to indefinitely rely on others? Specialization, Division of Labour and Gains from Trade are some of the most important drivers of a prosperous modern world. But even if drug-approval capability were something for which autarky was desirable, these countries should build the competence first, rather than imposing onerous restrictions on their citizens and causing many premature deaths by denying them safe and effective drugs. I would rather have them build capacity by focusing on surveillance, inspections, trial oversight, and procurement integrity.

Yes, sadly I think you’re right, and the fact that this would be a good policy for Western countries also probably makes little difference in the rhetorical calculus.

You should probably start by reading the existing posts on the subject.

Thanks for sharing this, it was a very interesting read!

I do want to question this claim though:

“But the alternative to a project like the AMA is that essential HIV treatments arrive half a decade late in places that needed them most.”

It seems to me that an attractive alternative would be for African countries to simply give up on doing their own drug approvals, and outsource the decision making entirely to the FDA, MHRA, PMDA, EMA etc. Why not simply say that any drug approved by any of these agencies is automatically approved? This way drugs would be approved swiftly and with almost zero cost to both government and corporation.

If you weren’t using a login, presumably you were using the lowest tier of models, which I don’t think is a very good test.

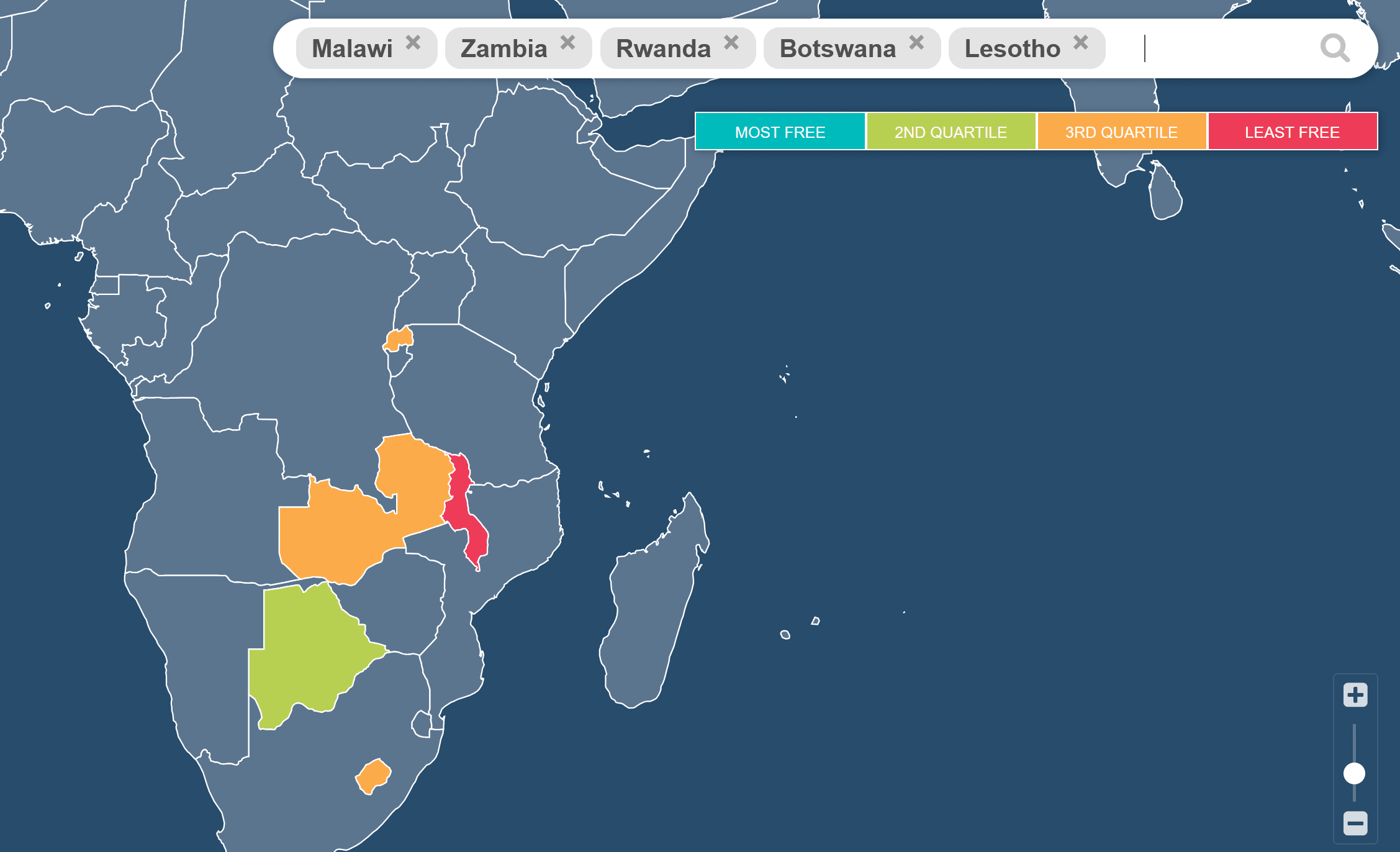

You discuss institutions, but I don’t think you discuss the right kind of institutions. If I am comparing Malawi to some of the other nearby landlocked African countries you mention, the first thing that jumps out to me is its dramatically worse economic freedom. Malawi has one of the worst scores in the entire world, ranked 147/165, a level more typical of central or Saharan African countries.

(You didn’t mention Eswatini or Burundi, but they also score very badly—unfortunately the map tool above will only let me display 5 countries so I focused on those named in the text plus Zambia as it is neighbouring).

This doesn’t resolve the infinite-regress style question of what causes some countries to have more capitalist institutions than others, but when it comes to which institutions to investigate, I think it is their economic institutions we should focus on.

It seems very unlikely to me that there are AI safety projects that are both worth doing and yet not worth doing if they spent $10k on compute. The human capital cost is typically far in excess of that.

If you don’t gate access to your research resources (grant funding, GPUs etc.), and you have a non-trivial amount to give away, then I would expect almost all of them will end up going to projects you would not approve of.

Note that UBI does gate access—it gates to a (small) finite quantity per person.

I realise I am arguing with ChatGPT here, but you are equivocating between EA and the US government. We (EA) are preparing for having more money, and this doesn’t in any way contradict the fact that we (USG) already have a lot and we (USG) have chosen absurd kidney market restrictions.

Thanks for this very interesting article.

One quick suggestion: assuming that 100% of distributed cups are used seems quite aggressive to me. I think using a 7.5-year average life suggests you are implicitly selecting for very enthusiastic adopters; I would imagine a lot are distributed and then discarded.

To be honest it sounds like he doesn’t know what the phrase ‘death cult’ means.

Thanks for sharing! Could we see the full time series on a daily frequency over the whole interval? This would help us see if the effect is a true annual heartbeat, or if there are smaller spikes on July 1st and October 1st as well.

My first guess: could this be related to regulatory balance sheet constraints for companies with an offset fiscal year?

The GWWC weekends away in Wales were effectively the first EA conferences and they preceded EAG.