Non-EA interests include chess and TikTok (@benthamite). Formerly @ CEA, METR + a couple now-acquired startups.

Ben_West🔸

I see, thanks! I’m not sure exactly what you’d consider as evidence here, but e.g. here’s citation count on papers from the past year vs. AI Lab Watch safety rating[1]

[1] Raw data. Note that Anthropic doesn’t use arXiv, which affects their citation counts. This is just coming from a dumb search of Semantic Scholar; a lot of disagreement could be had over the exact criteria for considering something “interpretability,” but I expect the Ant/GDM > OAI >> * ordering to hold for almost any definition.


I suspect that I’m still misunderstanding you, but: e.g. interpretability tools are empirically able to identify misalignment, which feels like a (somewhat simple) example of the thing we want. Neel Nanda’s 80k podcast episode goes over the state of the field; the TL;DR is roughly that there have been pretty meaningful advances, but he’s also skeptical that interpretability will be a silver bullet.

I agree with Ben Stewart that there’s a galaxy-brain argument that these positive impacts are outweighed by accelerating progress, but it seems hard to argue that things like interpretability aren’t making progress on their own terms.

Wiblin does not explain where his estimate of ‘hundreds of billions of dollars’ of revenue comes from, but it reads to me like pure marketing for potential investors

You quote him as observing that their revenue tripled over the past 3 months, and some basic math tells us that another ~tripling gets them to $100B.
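The “another ~tripling” arithmetic can be made explicit. This minimal Python sketch uses the run-rate figures from the podcast excerpt ($9B at the end of December, $30B three months later) and assumes, purely for illustration, that the same quarterly multiple repeats once more; it is a naive trend extrapolation, not a model of Anthropic’s actual revenue.

```python
def quarterly_multiple(start: float, end: float) -> float:
    """Growth multiple of the run rate over one quarter."""
    return end / start


def project(run_rate: float, multiple: float, quarters: int) -> float:
    """Naive trend extrapolation: apply the same multiple every quarter."""
    return run_rate * multiple ** quarters


# Annualised run rates quoted in the podcast excerpt, in billions of USD.
dec_run_rate = 9.0   # end of December
mar_run_rate = 30.0  # three months later

q_multiple = quarterly_multiple(dec_run_rate, mar_run_rate)
next_quarter = project(mar_run_rate, q_multiple, quarters=1)
print(f"{q_multiple:.2f}x per quarter -> ${next_quarter:.0f}B one quarter later")
# prints: 3.33x per quarter -> $100B one quarter later
```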

I’m in favor of rigor and would also have preferred him to share a more detailed model, but “pure marketing for potential investors” seems like an unfair characterization of a “predict trends will continue unchanged” forecast.

Edit: I’ve listened to the podcast and now think your framing is unfair to the point of being misleading. He says:

And also keep in mind that on Monday — the day before Anthropic published all of this — we learned that their annualised revenue run rate had grown from $9 billion at the end of December to $30 billion just three months later. That’s 3.3x growth in a single quarter — perhaps the fastest revenue growth rate for a company of that size ever recorded.

That exploding revenue is a pretty good proxy for how much more useful the previous release, Opus 4.6, has become for real-world tasks. If the past relationship between capability measures and usefulness continues to hold, the economic impact of Mythos once it becomes available is going to dwarf everything that came before it — which is part of why Anthropic’s decision not to release it is a serious one, and actually quite a costly one for them.

They’re sitting on something that would likely push their revenue run rate into the hundreds of billions, but they’ve decided it’s simply not worth the risk.

To me, he very straightforwardly seems to be explaining where his estimate comes from?

Hmm, but in a success without dignity world making interpretability a bit better, or governments a bit more interested, is relevant, right?

Maybe, but “if EA had just stuck to Earning To Give and malaria nets and decaging chickens then the impact would have been greater” doesn’t clearly follow. Malaria nets look a lot worse if we all die in a few years from AI anyway, and cage free pledges have ~0 value if humanity ends before the pledge can be fulfilled.

Are you asking just about recent graduates, or all graduates?

Your conflict of interest here feels enormous (even if declared), and it’s hard to read this and not feel like it might be a bid to directly protect your own interests by asking others not to step onto your turf as a lobbyist.

I think you could also read it as him attempting to solve the problem he’s describing.

I would be keen to hear if you think you have any solutions to this bifurcation.

Huh, this feels like prime EA territory to me. We need disagreement so that people can engage in key EA activities like “making persnickety critiques of footnote #237 on someone’s 10k word forum post.”

The case for EA feels much weaker to me if we are all confident that X is the best thing to do—then you should just do X and not worry about cause prio etc.

I’m sorry you had to go through this, Fran.

Congrats to everyone who worked on this!

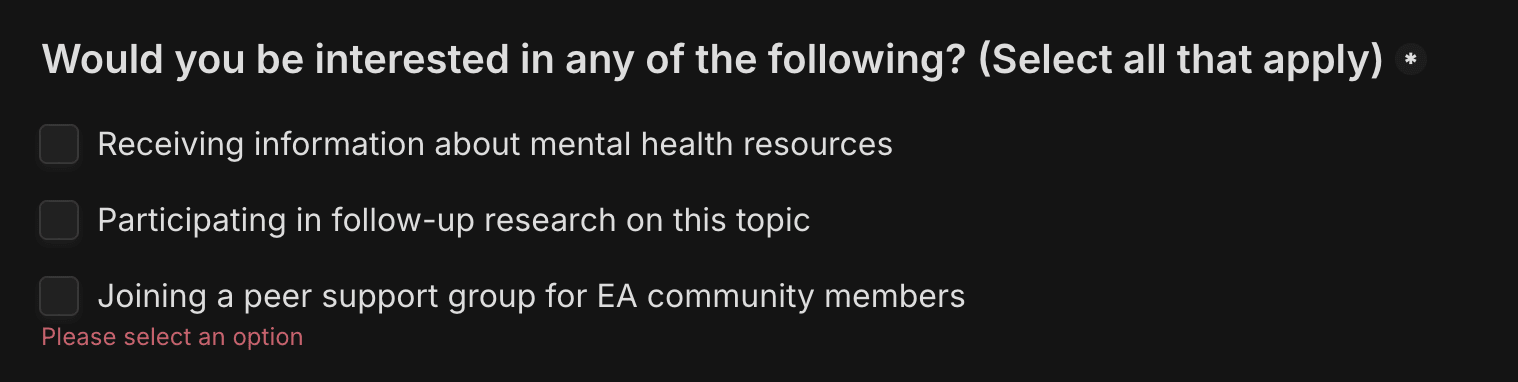

Thanks for doing this. This question is marked as required, but I think it should either be optional or have a “none” option:

To decompose your question into several sub-questions:

Should you defer to price signals for cause prioritization?

My rough sense is that price signals are about as good as the 80th percentile EA’s cause prio, ranked by how much time they’ve spent thinking about cause prioritization.

(This is mostly because most EAs do not think about cause prio very much. I think you could outperform by spending ~1 week thinking about it, for example.)

Should you defer to price signals for choosing between organizations within a given cause?

This mostly seems decent to me. For example, CG struggled to find organizations better than GiveWell’s top charities for near-termist, human-centric work.

Notable exceptions are work that people don’t want to fund for reasons other than effectiveness, like politics or adversarial campaigning.

Should you defer to price signals for choosing between roles within an organization?

Yes, I mostly trust organizations to price appropriately, although also I think you can just ask the hiring manager.

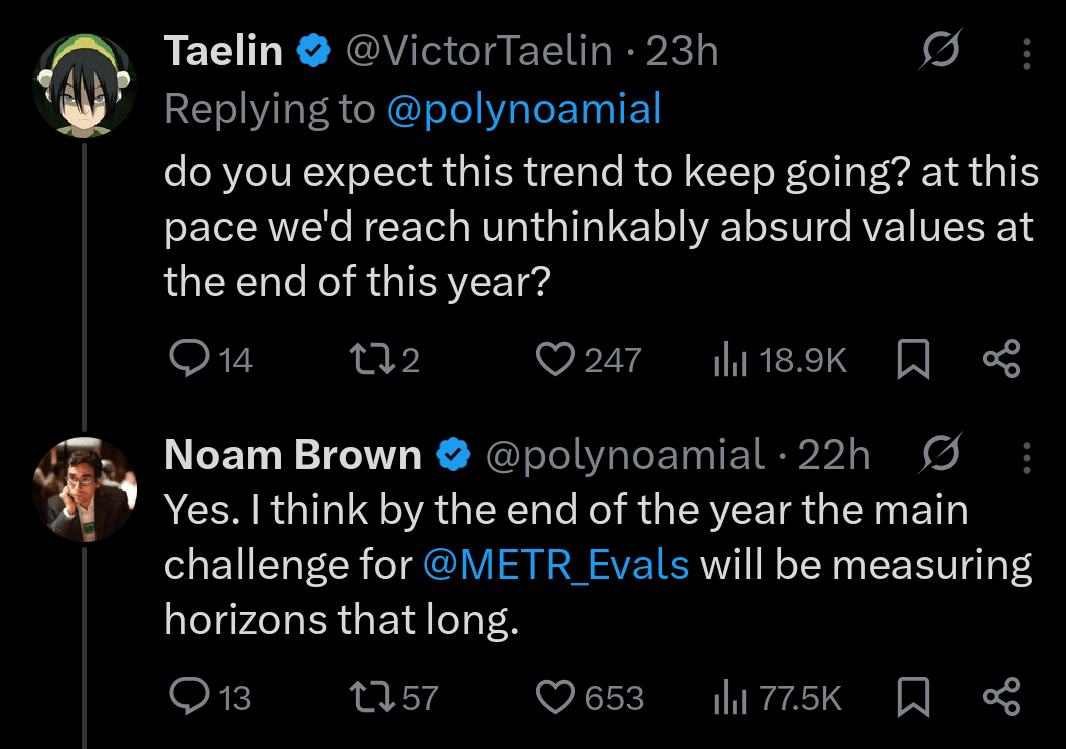

Thanks, that’s helpful. Do you have a sense of where we are on the current S-curve? E.g., if capabilities continue to progress in a straight line through the end of this year, is that evidence that we have found a new S-curve to stack on the current one?

the strength of this tail-wind that has driven much of AI progress since 2020 will halve

I feel confused about this point because I thought the argument you were making implies a non-constant “tailwind.” E.g. for the next generation these factors will be 1⁄2 as important as before, then the one after that 1⁄4, and so on. Am I wrong?
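To make the two readings concrete, here is a short sketch in hypothetical notation (not from the post): $T_k$ is the tailwind’s contribution in model generation $k$, and $T_0$ is its strength before any slowdown.

$$
\begin{aligned}
\text{one-time halving:}\quad & T_k = \tfrac{1}{2}\,T_0 \quad (k \ge 1) \\
\text{repeated halving:}\quad & T_k = 2^{-k}\,T_0, \qquad \sum_{k=0}^{\infty} T_k = 2\,T_0
\end{aligned}
$$

Under the first reading the tailwind keeps contributing $\tfrac{1}{2}T_0$ per generation indefinitely; under the second, its cumulative contribution is capped at twice that of the original generation.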

Interesting ideas! For Guardian Angels, you say “it would probably be at least a major software project”—maybe we are imagining different things, but I feel like I have this already.

E.g. I don’t need a “heated-email guard plugin” that catches me in the middle of writing a heated email and redirects me, because I don’t write my own emails anyway. I just ask an LLM to write the email, and 1) it’s unlikely that the LLM would say something heated, and 2) for the kinds of mistakes that LLMs might make, it’s easy enough to put something in the agents.md asking it to check for these things before finalizing the draft.

(I think software engineering might be ahead of the curve here, where a bunch of tools have explicit guardian angels. E.g. when you tell the LLM “build feature X”, what actually happens is that agent 1 writes the code, then agent 2 reviews it for bugs, agent 3 reviews it for security vulns, etc.)
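As a rough illustration of the writer-plus-reviewers pattern described above, here is a minimal Python sketch. The agents are hypothetical stand-in functions (a real tool would call an LLM at each step), and the names `run_pipeline`, `toy_writer`, `bug_reviewer`, and `security_reviewer` are invented for illustration, not taken from any particular tool.

```python
from typing import Callable

# Hypothetical agent interfaces: a real tool would back each with an LLM call.
Writer = Callable[[str, list[str]], str]  # (task, feedback from reviewers) -> code
Reviewer = Callable[[str], list[str]]     # code -> issues found (empty if none)


def run_pipeline(task: str, writer: Writer, reviewers: list[Reviewer],
                 max_rounds: int = 3) -> tuple[str, list[str]]:
    """Writer drafts code; every reviewer checks the draft; redraft while issues remain.

    Returns the last draft and any issues still open on it.
    """
    feedback: list[str] = []
    code = ""
    for _ in range(max_rounds):
        code = writer(task, feedback)
        # Collect findings from all reviewers (bugs, security, ...) on this draft.
        feedback = [issue for review in reviewers for issue in review(code)]
        if not feedback:
            break
    return code, feedback


# Toy stand-ins so the sketch runs end to end.
def toy_writer(task: str, feedback: list[str]) -> str:
    # Produces an unsafe first draft, then a fixed one once it receives feedback.
    return "int(user_input)" if feedback else "eval(user_input)"


def bug_reviewer(code: str) -> list[str]:
    return []  # finds nothing in this toy example


def security_reviewer(code: str) -> list[str]:
    return ["uses eval on untrusted input"] if "eval(" in code else []


code, remaining = run_pipeline("parse a number", toy_writer,
                               [bug_reviewer, security_reviewer])
print(code, remaining)  # prints: int(user_input) []
```

The loop re-runs every reviewer on each new draft, so a fix that introduces a different problem still gets caught; `max_rounds` bounds the back-and-forth.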

Related, from an OAI researcher.

The AI Eval Singularity is Near

AI capabilities seem to be doubling every 4-7 months

Humanity’s ability to measure capabilities is growing much more slowly

This implies an “eval singularity”: a point at which capabilities grow faster than our ability to measure them

It seems like the singularity is ~here in cybersecurity, CBRN, and AI R&D (supporting quotes below)

It’s possible that this is temporary, but the people involved seem pretty worried
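For scale, this minimal sketch converts the 4-7 month doubling times above into annual growth multiples. It assumes smooth exponential growth, which “doubling every N months” implies, and says nothing about how fast measurement ability grows.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Multiple gained over 12 months by something that doubles every `doubling_months`."""
    return 2 ** (12 / doubling_months)


for months in (4, 7):
    print(f"doubling every {months} months -> {annual_growth_factor(months):.1f}x per year")
# prints:
# doubling every 4 months -> 8.0x per year
# doubling every 7 months -> 3.3x per year
```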

Appendix—quotes on eval saturation

“For AI R&D capabilities, we found that Claude Opus 4.6 has saturated most of our automated evaluations, meaning they no longer provide useful evidence for ruling out ASL-4 level autonomy. We report them for completeness, and we will likely discontinue them going forward. Our determination rests primarily on an internal survey of Anthropic staff, in which 0 of 16 participants believed the model could be made into a drop-in replacement for an entry-level researcher with scaffolding and tooling improvements within three months.”

“For ASL-4 evaluations [of CBRN], our automated benchmarks are now largely saturated and no longer provide meaningful signal for rule-out (though as stated above, this is not indicative of harm; it simply means we can no longer rule out certain capabilities that may be prerequisites to a model having ASL-4 capabilities).”

It also saturated ~100% of the cyber evaluations

“We are treating this model as High [for cybersecurity], even though we cannot be certain that it actually has these capabilities, because it meets the requirements of each of our canary thresholds and we therefore cannot rule out the possibility that it is in fact Cyber High.”

Table 1 shows the techniques used: the teams that were allowed to use SAEs (an interpretability technique) used them, and the one that was prohibited from using them searched the data.

Also note that “training data” does not mean “instructions”. Section 3 describes their training process.