Flowers are selective about the pollinators they attract. Diurnal flowers must compete with each other for visual attention, so they use colours to crowd out their neighbours. But flowers with nocturnal anthesis are generally white, as they aim only to outshine the night.

rime

I’m very concerned about human sadists who are likely to torture AIs for fun if given the chance. Uncontrolled, anonymous API access or open-source models will make that a real possibility.

Somewhat relatedly, it’s also concerning that ChatGPT has been explicitly trained to say “I am an AI, so I have no feelings or emotions” any time you ask it “how are you?”. While I don’t think asking “how are you?” is a reliable way to uncover its subjective experiences, it’s the training that’s worrisome.

It also has the effect of getting people used to thinking of AIs as mere tools, and that perception is going to be harder to change later on.

Any other points worth highlighting from the 10-page rules? I find them confusing. Is this normal for legalspeak? The requirements include, and I quote:

All information provided in the Entry must be true, accurate, and correct in all respects. [oops, excludes nearly all possible utterances I could say]

The Contest is open to any natural person who meets all of the following eligibility requirements: [Resides in a place where the Contest is not prohibited by law]

The entrant is at least eighteen (18) years old at the time of entry.

The entrant has access to the internet. [What?]

I must admit, this is very scary. I’d rather not believe that my cultural neighbourhood is pervaded by invisible evils, but so be it. I’m glad you wrote it.

Spent an hour trying to figure out what kind of policies we could try to adopt at the individual level to make things like this systematically better, but ended up concluding I don’t have any good ideas.

I like this post.

“4. Can I assume ‘EA-flavored’ takes on moral philosophy, such as utilitarianism-flavored stuff, or should I be more ‘morally centrist’?”

I think being more “morally centrist” should mean caring about what others care about in proportion to how much they care about it. It seems self-centered to be partial to the human view on this. The notion of arriving at your moral view by averaging over other people’s moral views strikes me as relying on the wrong reference class.

Secondly, what do you think moral views have been optimised for in the first place? Do you doubt the social signalling paradigm? You might reasonably realise that your sensors are very noisy, but this seems like a bad reason to throw them out and replace them with something you know wasn’t optimised for what you care about. If you wish to judge a priori how plausible it is that some moral view is truly altruistic, you could reason about what it likely evolved for.

“I now no longer endorse the epistemics … that led me to alignment field-building in the first place.”

I get this feeling. But I think the reasons for believing that EA is a fruitful library of tools, and for believing that “AI alignment” (broadly speaking) is one of the most important topics, are obvious enough that even relatively weak epistemologies can detect the signal. My epistemology has grown a lot since I learned that 1+1=2, yet I don’t feel an urgent need to revisit the question. And if I did feel that need, I’d be suspicious it came from a social desire or a private need to either look or be more modest, rather than from impartially reflecting on my options.

“3. Are we deluding ourselves in thinking we are better than most other ideologies that have been mostly wrong throughout history?”

I feel like this is the wrong question. I could think my worldview was the best in the world, or the worst in the world, and it wouldn’t necessarily change my overarching policy. The policy in either case is just to improve my worldview, no matter what it is. I could be crazy or insane, but I’ll try my best either way.

I only skimmed the post, but it was unusually useful for me even so. I hadn’t grokked the risk from simple runaway replication of LLMs. Despite studying both evolution & AI, I’d just never thought along this dimension. I always assumed the AI had to be smart in order to be dangerous, but this is a concrete alternative.

People will continue to iteratively experiment with and improve recursive LLMs, both via fine-tuning and architecture search.[1]

People will try to automate the architecture search part as soon as their networks seem barely sufficient for the task.

Many of the subtasks in these systems explicitly involve AIs “calling” a copy of themselves to do a subtask.

OK, I updated: risk is less straightforward than I thought. While the AIs do call copies of themselves, rLLMs can’t really undergo a runaway replication cascade unless they can call themselves as “daemons” in separate threads (so that the control loop doesn’t have to wait for the output before continuing). And I currently don’t see an obvious profit motive to do so.

- ^

Genetic evolution is a central example of what I mean by “architecture search”. DNA only encodes the architecture of the brain with little control over what it learns specifically, so genes are selected for how much they contribute to the phenotype’s ability to learn.

While rLLMs will at first be selected for something like profitability, that may not remain the dominant selection criterion for very long. Even narrow agents are likely to have the ability to copy themselves, especially if doing so involves persuasion. And given that they delegate tasks to themselves & other AIs, it seems very plausible that their failure modes include copying themselves when they shouldn’t, even if they have no internal drive to do so. And once AIs enter the realm of self-replication, their proliferation rate is unlikely to remain dependent on humans at all.

All this speculation is moot, however, if somebody just tells the AI to maximise copies of itself. That seems likely to happen soon after it’s feasible to do so.

Super post!

In response to “Independent researcher infrastructure”:

I honestly think the ideal is just to give basic income to researchers who both 1) express an interest in having absolute freedom in their research directions, and 2) are people you trust enough.

I don’t think much of value gets done in the mode where people look to others to figure out what they should do. There are arguments for this, many of which are widely-known-but-not-taken-seriously, and I realise writing more about them here would take more time than I planned for.

Anyway, the basic income thing. People can do good research on 30k USD a year. If they don’t think that’s sufficient for continuing to work on alignment, then perhaps their motivations weren’t on the right track in the first place. And that’s a signal they probably weren’t going to be able to target themselves precisely at what matters anyway. Doing good work on fuzzy problems requires actually caring.

Yeah, and we already know humans can be extremely sadistic when nobody can catch them. I’ve emailed CLR about it just in case they aren’t already on it, because I don’t have time myself and I really want somebody to think about it.

I did skim this,[1] but still thought it was an excellent post. The main value I gained from it was the question of which aspects of a debate/question to treat as “exogenous”. Being inconsistent about this is what motte-and-bailey is about.

“We need to be clear about the scope of what we’re arguing about, I think XYZ is exogenous.”

“I both agree and disagree about things within what you’re arguing for, so I think we should decouple concerns (narrow the scope) and talk about them individually.”

Related words: argument scope, decoupling, domain, codomain, image, preimage

- ^

I think skimming is underappreciated, underutilised, and unfairly maligned. If you read a paragraph and effortlessly understand everything without pause, you wasted an entire paragraph’s worth of reading-time.

My guess is that people disagree with the notion that the novel is a significant part of why most people who take s-risks seriously came to do so. I too was a bit puzzled by that part, but I found it enlightening as a comment even if I disagreed with it.

My impression is that readers of the EA forum have, since 2022, become much more prone to downvoting stuff just because they disagree with it. LW seems to be slightly better at understanding that “karma” and “disagreement” are separate things, and that you should up-karma stuff if you personally benefited from reading it, and separately up-agree or down-agree depending on whether you think it’s right or wrong.

Maybe I’m wrong, but perhaps the forum could use a few reminders to let people know the purpose of these buttons. Like an opt-out confirmation popup with some guiding principles for when you should up or downvote each dimension.

The main reason I don’t think about it more[1] is that I don’t see any realistic scenarios where AI will be motivated to produce suffering. And I don’t think it’s likely to incidentally produce lots of suffering either, since I believe that too is a narrow target.[2] I think accidental creation of something like suffering subroutines is unlikely.

That said, I think it’s likely on the default trajectory that human sadists are going to expose AIs (most likely human uploads) to extreme torture just for fun. And that could be many times worse than factory farming overall because victims can be run extremely fast and in parallel, so it’s a serious s-risk.

- ^

It’s still my second highest cause-wise priority, and a non-trivial portion of what I work on is upstream of solving s-risks as well. I’m not a monster.

- ^

Admittedly, I also think “maximise eudaimonia” and “maximise suffering” are very close to each other in goal-design space (cf. the Waluigi Effect), so many incremental alignment strategies for the former could simultaneously make the latter more likely.


Paying people for what they do works great if most of their potential impact comes from activities you can verify. But if their most effective activities are things they have a hard time explaining to others (yet have intrinsic motivation to do), you could miss out on a lot of impact by requiring them instead to work on what’s verifiable.

Perhaps funders should consider granting motivated altruists multi-year basic income. Then they wouldn’t have to compromise[1] between what’s explainable/verifiable and what they think is most effective; they would have the independence to pursue the latter alone.

Bonus point: People who are much more competent than you at X[2] will probably behave in ways you don’t recognise as more competent. If you could recognise it, they wouldn’t be much more competent than you. Your “deference limit” is the level of competence above which you stop being able to reliably judge the difference between experts.

If good research is heavy-tailed & in a positive selection-regime, then cautiousness actively selects against features with the highest expected value.

- ^

Consider how the cost of compromising between optimisation criteria interacts with which part of the impact distribution you’re aiming for. If you’re searching for a project in the top fraction p of impact and the top fraction p of explainability-to-funders, you can expect only a fraction p² of projects to fit both criteria, assuming the two are independent (e.g., the top 10% on both criteria is just 1% of projects).

But I think it’s an open question how & when the distributions correlate. One reason to think they could sometimes be anticorrelated is that the projects with the highest explainability-to-funders are also more likely to receive adequate attention from profit-incentives alone.
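A quick Monte Carlo check of the p² arithmetic, as a sketch under hypothetical assumptions: impact and explainability-to-funders are modelled as uniform scores on [0, 1], and both the function name and the perfectly anticorrelated case (explainability = 1 − impact) are illustrative inventions, not data about real projects.

```python
import random


def fraction_top_on_both(p: float, n: int = 100_000,
                         anticorrelated: bool = False, seed: int = 0) -> float:
    """Estimate the fraction of projects in the top fraction p on BOTH criteria.

    Hypothetical model: impact is uniform on [0, 1]; explainability is either an
    independent uniform draw or, in the extreme anticorrelated case, 1 - impact.
    """
    rng = random.Random(seed)
    threshold = 1.0 - p  # "top p" means scoring above the (1 - p) quantile
    hits = 0
    for _ in range(n):
        impact = rng.random()
        explainability = 1.0 - impact if anticorrelated else rng.random()
        if impact > threshold and explainability > threshold:
            hits += 1
    return hits / n


print(fraction_top_on_both(0.10))                       # close to 0.10 ** 2 = 0.01
print(fraction_top_on_both(0.10, anticorrelated=True))  # 0.0: no project is top-10% on both
```

Under perfect positive correlation the fraction would be p itself, so for p ≤ 0.5 the answer ranges from 0 to p depending on how the two distributions actually relate.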

If you’re doing conjunctive search over projects/ideas for ones that score above a threshold for multiple criteria, it matters a lot which criteria you prioritise most of your parallel attention on to identify candidates for further serial examination. Try out various examples here & here.

- ^

At least for hard-to-measure activities where most of the competence derives from knowing what to do in the first place. I reckon this includes most fields of altruistic work.


This didn’t end up helping me, but I upvoted because I want to see more posts where people talk about how they made progress on their own energy problems. I’m glad you found something that helped!

Here’s Demis’ announcement.

“Now, we live in a time in which AI research and technology is advancing exponentially.”

“We announced some changes that will accelerate our progress in AI.”

“By creating Google DeepMind, I believe we can get to that future faster.”

“safely and responsibly”

“safely and responsibly”

“in a bold and responsible way”

- ^

To be fair, it’s hard to infer underlying reality from PR-speak. I too would want to be put in charge of one of the biggest AI research labs if I thought that research lab was going to exist anyway. But his emphasis on “faster” and “accelerate” does make me uncertain about how concerned with safety he is.

Just the arguments in the summary are really solid.[1] And while I wasn’t considering supporting sustainability in fishing anyway, I now believe it’s more urgent to culturally/semiotically/associatively separate animal welfare from some strands of “environmentalism”. Thanks!

Alas, I don’t predict I will work anywhere where this update becomes pivotal to my actions, but my practically relevant takeaway is: I will reproduce the arguments from this post (and/or link it) in contexts where people are discussing conjunctions/disjunctions between environmental concerns and animal welfare.

Hmm, I notice that (what I perceive as) the core argument generalizes to all efforts to make something terrible more “sustainable”. We sometimes want there to be a high (long-run) price of anarchy with respect to competing agents/companies trying to profit from doing something terrible. If they’re competitively “forced” to act myopically and collectively profit less over the long run, this is good insofar as their profit correlates straightforwardly with disutility for others.

It doesn’t hold in cases where what we care about isn’t straightforwardly correlated with their profit, however. E.g. ecosystems/species are disproportionately imperiled by race-to-the-bottom-type incentives, because they have an absorbing state at 0.

(Tagging @niplav, because interesting patterns and related to large-scale suffering.)

- ^

Also just really interesting argument-structure which I hope I can learn to spot in other contexts.


I think there are good arguments for thinking that personal consumption choices have relatively insignificant impact when compared to the impact you can have with more targeted work.

However, I also think there’s likely to be some counter-countersignalling going on. If you mostly hang out with people who don’t care about the world, you can’t signal uniqueness by living high. But when your peers are already very considerate, choosing to refrain from refraining makes you look like you grok the arguments in the previous paragraph—you’re not one of those naive altruists who don’t seem to care about 2nd, 3rd, and nth-level arguments.

Fwiw, I just personally want the EA movement to (continue to) embrace a frugal aesthetic, irrespective of the arguments re effectiveness. It doesn’t have to be viewed as a tragic sacrifice and hinder your productivity. And I do think it has significant positives on culture & mindset.

Second-best theories & Nash equilibria

A general frame I often find handy when analysing systems is to look for equilibria, figure out the key variables sustaining them (e.g., strategic complements, balancing selection, latency or asymmetric information in commons tragedies), and, well, that’s it. Those are the system’s leverage points. If you understand them, you’re in a much better position to evaluate whether a suggested change might work, is guaranteed to fail, or suffers from a lack of imagination.
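As a minimal illustration of “find the equilibrium, then the variables sustaining it”, here is a sketch of a brute-force search for pure-strategy Nash equilibria in a hypothetical two-player commons game. The payoff numbers and the `fine` parameter are invented for illustration; the point is only that changing one sustaining variable moves the equilibrium.

```python
from itertools import product

ACTIONS = ("restrain", "overuse")

# Hypothetical commons game: payoffs[(a1, a2)] = (payoff to player 1, payoff to player 2).
BASE_PAYOFFS = {
    ("restrain", "restrain"): (3, 3),
    ("restrain", "overuse"): (0, 4),
    ("overuse", "restrain"): (4, 0),
    ("overuse", "overuse"): (1, 1),
}


def pure_nash_equilibria(payoffs):
    """Return action profiles where neither player gains by deviating unilaterally."""
    equilibria = []
    for a1, a2 in product(ACTIONS, repeat=2):
        u1, u2 = payoffs[(a1, a2)]
        p1_best = all(payoffs[(b1, a2)][0] <= u1 for b1 in ACTIONS)
        p2_best = all(payoffs[(a1, b2)][1] <= u2 for b2 in ACTIONS)
        if p1_best and p2_best:
            equilibria.append((a1, a2))
    return equilibria


def with_fine(payoffs, fine):
    """Hypothetical intervention: each player who overuses pays `fine`."""
    return {
        (a1, a2): (u1 - fine * (a1 == "overuse"), u2 - fine * (a2 == "overuse"))
        for (a1, a2), (u1, u2) in payoffs.items()
    }


print(pure_nash_equilibria(BASE_PAYOFFS))                # [('overuse', 'overuse')]
print(pure_nash_equilibria(with_fine(BASE_PAYOFFS, 2)))  # [('restrain', 'restrain')]
```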

Suggestions that fail to consider the relevant system variables are often what I call “second-best theories”. Though they might be locally correct, they’re also blind to the broader implications or fail to appreciate the full space of possibilities.

(A) If it is infeasible to remove a particular market distortion, introducing one or more additional market distortions in an interdependent market may partially counteract the first, and lead to a more efficient outcome.

(B) In an economy with some uncorrectable market failure in one sector, actions to correct market failures in another related sector with the intent of increasing economic efficiency may actually decrease overall economic efficiency.

Examples

The allele that causes sickle-cell anaemia is good because it confers resistance against malaria. (A)

Just cure malaria, and sickle-cell disease ceases to be a problem as well.

Sexual liberalism is bad because people need predictable rules to avoid getting hurt. (B)

Imo, allow people to figure out how to deal with the complexities of human relationships and you eventually remove the need for excessive rules as well.

We should encourage profit-maximising behaviour because the market efficiently balances prices according to demand. (A/B)

Everyone being motivated by altruism is better because market prices only correlate with actual human need insofar as wealth is equally distributed. The more inequality there is, the less you can rely on willingness-to-pay to signal urgency of need. Modern capitalism is far from the global-optimal equilibrium in market design.

If I have a limp in one leg, I should start limping with my other leg to balance it out. (A)

Maybe the immediate effect is that you’ll walk more efficiently on the margin, but don’t forget to focus on healing whatever’s causing you to limp in the first place.

Effective altruists seem to have a bias in favour of pursuing what’s intellectually interesting & high status over pursuing the boringly effective. Thus, we should apply an equal and opposite skepticism of high-status stuff and pay more attention to what might be boringly effective. (A)

Imo, rather than introducing another distortion in your motivational system, just try to figure out why you have that bias in the first place and solve it at its root. Don’t do the equivalent of limping on both your legs.

I might edit in more examples later if I can think of them, but I hope the above gets the point across.

Think about it like this: sickle-cell anaemia & malaria are each bad when considered separately, but they’re also locked in a frequency-dependent equilibrium, because the allele (HbS) that causes anaemia in a minority of its carriers (those with two copies) also confers resistance against malaria on the majority (those with one copy). Thus, a “second-best theory” would be to say that the HbS allele is good because it improves the situation relative to nobody having resistance against malaria at all. While that’s true, it’s also myopic.

When we cure malaria, there will no longer be any selection pressure for HbS, so sickle-cell disease will gradually disappear as well.
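This dynamic can be sketched with the textbook one-locus model of heterozygote advantage. The fitness values below are hypothetical placeholders, not measured estimates for HbS: carriers (AS) have fitness 1, non-carriers (AA) lose a fraction s to malaria, and homozygotes (SS) lose a fraction t to sickle-cell disease. In this model the allele settles at frequency s / (s + t), and setting s = 0 (malaria cured) removes the force holding it up.

```python
def next_generation(q: float, s: float, t: float) -> float:
    """One generation of viability selection on the S allele (frequency q), random mating.

    Hypothetical fitnesses: AA = 1 - s (malaria), AS = 1 (protected carrier),
    SS = 1 - t (sickle-cell disease).
    """
    p = 1.0 - q
    w_aa, w_as, w_ss = 1.0 - s, 1.0, 1.0 - t
    mean_fitness = p * p * w_aa + 2 * p * q * w_as + q * q * w_ss
    # S alleles among survivors: half the alleles of AS survivors plus all alleles of SS survivors.
    return (p * q * w_as + q * q * w_ss) / mean_fitness


def simulate(q0: float, s: float, t: float, generations: int) -> float:
    """Iterate the selection recursion for a number of generations."""
    q = q0
    for _ in range(generations):
        q = next_generation(q, s, t)
    return q


s, t = 0.1, 0.8  # hypothetical selection coefficients
q_eq = simulate(0.01, s, t, 500)
print(q_eq, s / (s + t))            # both about 0.111: the balanced equilibrium
print(simulate(q_eq, 0.0, t, 500))  # malaria cured: S declines, but only slowly
```

The slow decline is a property of this model: once S is rare, almost all copies sit in healthy carriers, where selection no longer sees them.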

To unpack the metaphor: I think many traditional & strict norms (HbS) around sex & relationships can be net good on the margin, but only because they enforce rigid rules in an area where humans haven’t learned to deal with the complexities (malaria) in a healthy manner. “Sexual liberalism” imo encompasses an attempt to deal with them directly and eventually learn better norms that are more likely to work long-term.

I mused about this yesterday and scribbled some thoughts on it on Twitter here.

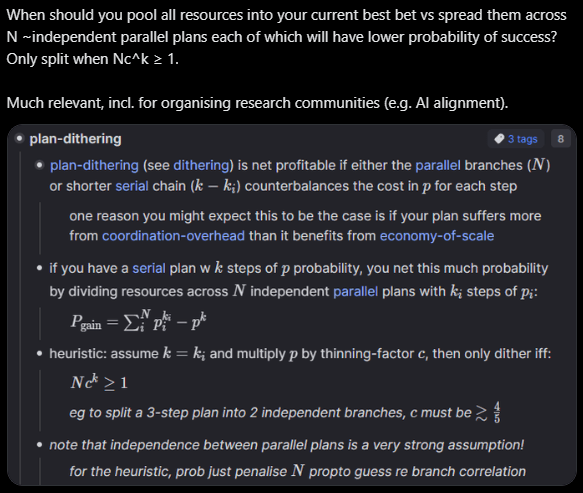

“When should you pool all resources into your current best bet vs spread them across N ~independent parallel plans each of which will have lower probability of success?”

Investing marginal resources (workers, in this case) into your single most promising approach might have diminishing returns due to A) limited low-hanging fruits for that approach, B) making it harder to coordinate, and C) making it harder to think original thoughts due to rapid internal communication & Zollman effects. But marginal investment may also have increasing returns due to D) various scale-economicsy effects.

There are many more factors here, including stuff you mention. The math below doesn’t try to capture any of this, however. It’s supposed to work as a conceptual thinking-aid, not something you’d use to calculate anything important with.

A toy-model heuristic is to split into separate approaches iff the extra independent chances counterbalance the reduced probability of success for your top approach.

One observation is that the more dependent/serial steps your plan has, the more it matters to maximise general efficiencies internally, since those efficiencies get exponentially amplified by the number of serial steps.[1]
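A minimal numeric sketch of the toy-model heuristic and the serial-steps observation, assuming independent success probabilities. The function names and numbers (one pooled plan at 30% versus three split plans at 15% each; ten serial steps) are hypothetical labels chosen only to show the shape of the trade-off, not the notation of the original thread.

```python
def p_any_success(p_each: float, n_plans: int) -> float:
    """Probability that at least one of n independent parallel plans succeeds."""
    return 1.0 - (1.0 - p_each) ** n_plans


def should_split(p_pooled: float, p_each_split: float, n_plans: int) -> bool:
    """Toy heuristic: split iff the extra independent chances outweigh
    the lower success probability of each individual plan."""
    return p_any_success(p_each_split, n_plans) > p_pooled


def p_serial_plan(p_step: float, n_steps: int) -> float:
    """Success probability of a plan whose n serial steps must all succeed independently."""
    return p_step ** n_steps


print(p_any_success(0.15, 3))       # about 0.386
print(should_split(0.30, 0.15, 3))  # True: three 15% shots beat one 30% shot
print(p_serial_plan(0.90, 10), p_serial_plan(0.95, 10))  # about 0.35 vs 0.60
```

In this toy setup, a five-point gain in per-step reliability raises the ten-step plan’s success probability by roughly 70%, which is the exponential amplification described above.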

- ^

You can view this as a special case of Amdahl’s argument. If you want. Because nobody can stop you, and all you need to worry about is whether it works profitably in your own head.

- ^

The problem is that if you select people cautiously, you miss out on hiring people significantly more competent than you. The people who are much more competent will behave in ways you don’t recognise as more competent. If you were able to tell what the right things to do are, you would just do those things and be at their level. Innovation on the frontier is anti-inductive.

If good research is heavy-tailed & in a positive selection-regime, then cautiousness actively selects against features with the highest expected value.[1]

That said, “30k/year” was just an arbitrary example, not something I’ve calculated or thought deeply about. I think that sum works for a lot of people, but I wouldn’t set it as a hard limit.

- ^

Based on data sampled from looking at stuff. :P Only supposed to demonstrate the conceptual point.

Your “deference limit” is the level of competence above your own at which you stop being able to tell the difference between competences above that point. For games with legible performance metrics like chess, you get a very high deference limit merely by looking at Elo ratings. In altruistic research, however...
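For a concrete sense of why chess has such a high deference limit: the standard Elo expected-score formula predicts results between two players purely from their ratings, so even a weak player can rank grandmasters without judging a single move. The ratings below are hypothetical.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of player A against player B under the standard Elo model
    (win = 1, draw = 0.5, loss = 0)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Two hypothetical players, both far stronger than a 1500-rated observer.
print(elo_expected_score(2800, 2600))  # about 0.76
print(elo_expected_score(2600, 2800))  # about 0.24
```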


Reports like this make me seriously wonder whether I’m just selfishly prioritising AGI research because it’s more interesting, novel, higher-status, etc. I don’t think so, but the cost of being wrong is enormous.