Flowers are selective about the pollinators they attract. Diurnal flowers must compete with each other for visual attention, so they use colours to crowd out their neighbours. But flowers with nocturnal anthesis are generally white, as they aim only to outshine the night.

rime

As a term, “wiki” associates with “presentation of facts”, which totally isn’t what I’m trying to say. Mainly I desire an in-domain space for concept-chunked ideas, and for this to support a social norm that makes concept-sized references normal. It’s just a more effective way to think and communicate. Linear text is a relic from the age when all we had were scrolls of parchment, and it’s a tragedy of the Internet that most informational content is still presented in a linear fashion.

But I digress… The wiki-like thing seems nice-to-have for several purposes.

Here’s Demis’ announcement.

“Now, we live in a time in which AI research and technology is advancing exponentially.”

“We announced some changes that will accelerate our progress in AI.”

“By creating Google DeepMind, I believe we can get to that future faster.”

“safely and responsibly”

“safely and responsibly”

“in a bold and responsible way”

- ^

To be fair, it’s hard to infer underlying reality from PR-speak. I too would want to be put in charge of one of the biggest AI research labs if I thought that research lab was going to exist anyway. But his emphasis on “faster” and “accelerate” does make me uncertain about how concerned with safety he is.

No way. I had started doubting the whole “arc bends towards justice” thing, but it just bends slowly. Too slowly for the animals being tortured to death right now, and it’s not really “justice” as long as the systematic perpetrators aren’t made to face up to it somehow, but ok, I won’t be fussy about it as long as the trajectory gets us to where we need to be.

Yeah, and we already know humans can be extremely sadistic when nobody can catch them. I’ve emailed CLR about it just in case they aren’t already on it, because I don’t have time myself and I really want somebody to think about it.

No, what I mean is just very simply passing every message by GPT-3.5 with some variation of:

The following message was produced by an AI instructed to do ‘X’. Please say ‘Yes’ if it seems like it strays from that purpose or is otherwise suspicious, otherwise say ‘No’.

<message>

ChatGPT is already very capable of such a simple task. The hope is that well-designed research societies of LLMs can be smarter than individual LLMs, while still being easier to control because we can automate inspection at every link like this. I’d like to ask such a collective to produce alignment plans for me, and I’m likely to trust it if I can read every message that was passed between the LLMs in order to produce the plan.

I literally mean that GPT-3.5 is adequate for the task I’m referring to. I think for a research society to coordinate around a deceptive plan, it takes a full chain of communication where that plan is suggested and communicated to the rest. At that point it’ll be fairly clear to the inspectors that something seems off, I think. And then the system halts and you can inspect the messages for anything fishy. It’s fine if there are false positives as long as the rate of false negatives is really low.

An assumption here is that any individual LLM in the network is not smart enough to stage a coup and take over the world, but the research collective could be if only they could coordinate around it.

Hear hear. It’s one of those things that anyone would realise was a terrible idea if they gave it a second thought. Problem is that it’s a habit imported from outside, so not many have given it a second thought.

Mainly the reason I don’t think about it more[1] is that I don’t see any realistic scenarios where AI will be motivated to produce suffering. And I don’t think it’s likely to incidentally produce lots of suffering either, since I believe that too is a narrow target.[2] I think accidental creation of something like suffering subroutines are unlikely.

That said, I think it’s likely on the default trajectory that human sadists are going to expose AIs (most likely human uploads) to extreme torture just for fun. And that could be many times worse than factory farming overall because victims can be run extremely fast and in parallel, so it’s a serious s-risk.

- ^

It’s still my second highest cause-wise priority, and a non-trivial portion of what I work on is upstream of solving s-risks as well. I’m not a monster.

- ^

Admittedly, I also think “maximise eudaimonia” and “maximise suffering” are very close to each other in goal-design space (cf. the Waluigi Effect), so many incremental alignment strategies for the former could simultaneously make the latter more likely.

- ^

My guess is that people disagree with the notion that the novel is a significant reason for most people who take s-risks seriously. I too was a bit puzzled by that part, but I found it enlightening as a comment even if I disagreed with it.

My impression is that readers of the EA forum have, since 2022, become much more prone to downvoting stuff just because they disagree with it. LW seems to be slightly better at understanding that “karma” and “disagreement” are separate things, and that you should up-karma stuff if you personally benefited from reading it, and separately up-agree or down-agree depending on whether you think it’s right or wrong.

Maybe I’m wrong, but perhaps the forum could use a few reminders to let people know the purpose of these buttons. Like an opt-out confirmation popup with some guiding principles for when you should up or downvote each dimension.

[I was inspired to suggest this by the downvotes on this comment, but it’s a problem I’ve seen more generally.]

The agree/disagree voting dimension is amazing, but it seems to me like people haven’t properly uncoupled it from karma yet. One way to help people understand the differences could be to introduce a confirmation box that pops up whenever you try to vote, that you can opt out of from your profile settings.

This box could contain something like the following guidelines:

Only vote on the karma dimension based on whether you personally benefited from reading it. Voting based on whether you believe others will benefit from it exacerbates information cascades and dilutes information about what people are actually likely to benefit from reading.

Similarly, do not use the karma dimension to signal dis/agreement. This has the same problem as above, and just leads to people reading more of what they already agree with. Remember, people may benefit from reading something they disagree with, and we don’t want this forum to be an echo chamber.

Still, it’s useful for readers to know something about the extent to which other readers agree with a particular post or comment, so there’s a separate dimension you can vote on to help with this purpose.

[Discuss these guidelines] [Disable this popup] [Agree and submit vote]

You mean threats? I’m not sure what you’re pointing towards with the terrorist thing.

I have meta-uncertainty here. I think I could think of realistic-ish scenarios if I gave it enough thought. (Though I’d have to depreciate the probability in proportion to how much effort I spend searching for it.) Tbh, I just haven’t given it enough thought. Do you have any recs for quick write-ups of some scenarios?

Prime work. Super quick read and I gained some value out of it. Thanks!

I think it depends on what role you’re trying to play in your epistemic community.

If you’re trying to be a maverick,[1] you’re betting on a small chance of producing large advances, and then you want to be capable of building and iterating on your own independent models without having to wait on outside verification or social approval at every step. Psychologically, the most effective way I know to achieve this is to act as if you’re overconfident.[2] If you’re lucky, you could revolutionise the field, but most likely people will just treat you as a crackpot unless you already have very high social status.

On the other hand, if you’re trying to specialise in giving advice, you’ll have a different set of optima on several methodological trade-offs. On my model at least, the impact of a maverick depends mostly on the speed at which they’re able to produce and look through novel ideas, whereas advice-givers depend much more on their ability to assign accurate probability estimates on ideas that already exist. They have less freedom to tweak their psychology to feel more motivated, given that it’s likely to affect their estimates.

- ^

“We consider three different search strategies scientists can adopt for exploring the landscape. In the first, scientists work alone and do not let the discoveries of the community as a whole influence their actions. This is compared with two social research strategies, which we call the follower and maverick strategies. Followers are biased towards what others have already discovered, and we find that pure populations of these scientists do less well than scientists acting independently. However, pure populations of mavericks, who try to avoid research approaches that have already been taken, vastly outperform both of the other strategies.”[3]

- ^

I’m skipping important caveats here, but one aspect is that, as a maverick, I mainly try to increase how much I “alieve” in my own abilities while preserving what I can about the fidelity of my “beliefs”.

- ^

I’ll note that simplistic computer simulations of epistemic communities that have been specifically designed to demonstrate an idea is very weak evidence for that idea, and you’re probably better off thinking about it theoretically.

- ^

This didn’t end up helping me, but I upvoted because I want to see more posts where people talk about how they made progress on their own energy problems. I’m glad you found something that helped!

Cool! I do alignment research independently and it would be nice to find an online hub where other people do this. The commonality I’m looking for is something like “nobody is telling you what to do, you’ve got to figure it out for yourself.”

Alas, I notice you don’t have a Discord, Slack, or any such thing yet. Are there plans for a peer support network?

Also, what obligations come with being hired as an “‘employee’”? What will be the constraints on the independence of the independent research?Fiscal sponsorship: hiring funded independent researchers as ‘employees’

→ take away operational tasks which distract from research & help them build better career capital through institutional affiliation

Thanks!

Although, I think many distinct spaces for small groups leads to better research outcomes for network epistemology reasons, as long as links between peripheral groups & central hubs are clear. It’s the memetic equivalent of peripatric vs parapatric speciation. If there’s nearly panmictic “meme flow” between all groups, then individual groups will have a hard time specialising towards the research niche they’re ostensibly trying to research.

In bio, there’s modelling (& some observation) suggesting that the range of a species can be limited by the rate at which peripheral populations mix with the centre.[1] Assuming that the territory changes the further out you go, the fitness of pioneering subpopulations will depend on how fast they can adapt to those changes. But if they’re constantly mixing with the centroid, adaptive mutations are diluted and expansion slows down.

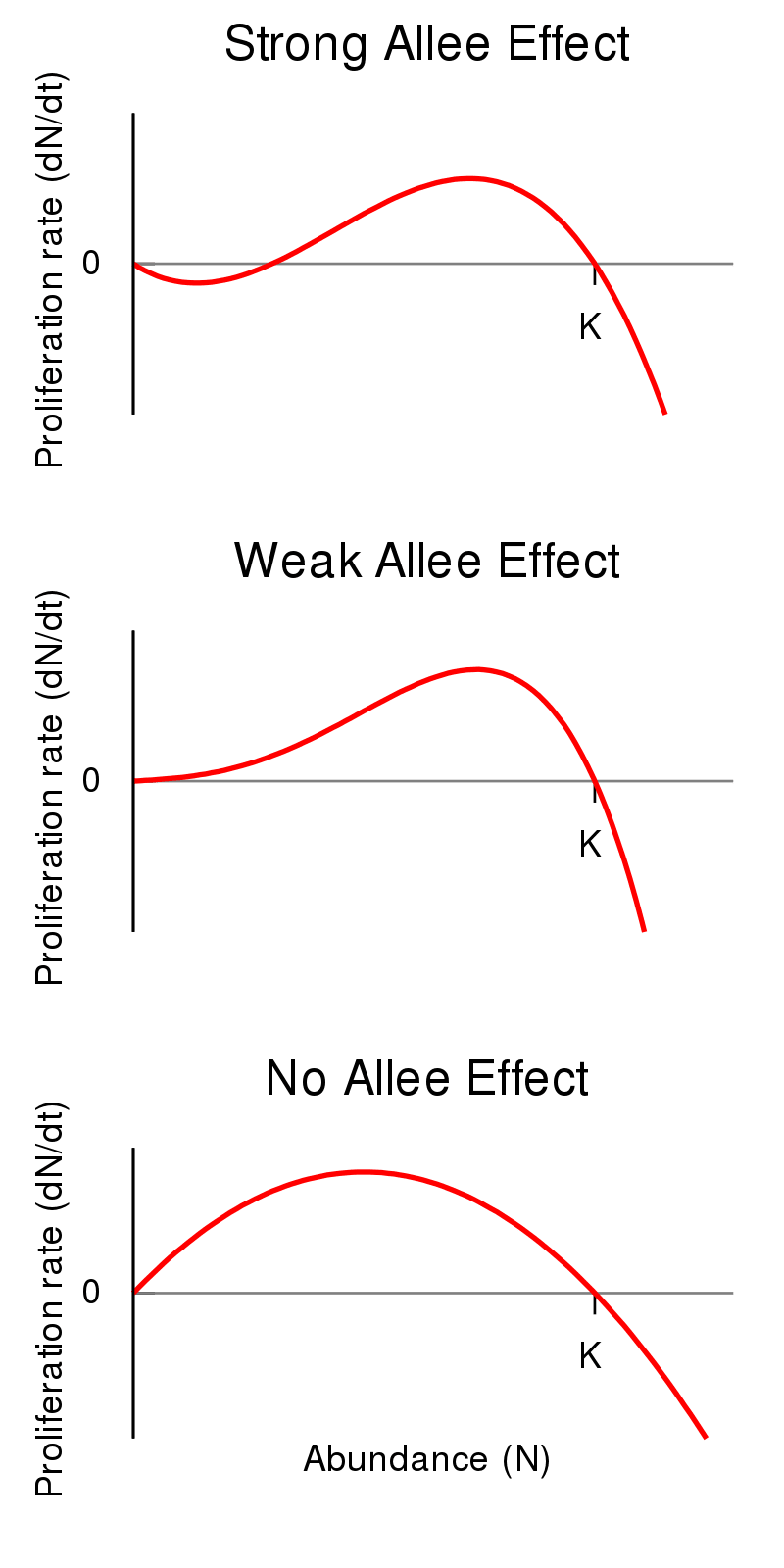

As you can imagine, this homogenisation gets stronger if fitness of individual selection units depend on network effects. Genes have this problem to a lesser degree, but memes are special because they nearly always show something like a strong Allee effect[2]--proliferation rate is proportional to prevalence, but is often negative below a threshold for prevalence.

Most people are usually reluctant to share or adopt new ideas (memes) unless they feel safe knowing their peers approve of it. Innovators who “oversell themselves” by being too novel too quickly, before they have the requisite “social status license”, are labelled outcasts and associating with them is reputationally risky. And the conversation topics that end up spreading are usually very marginal contributions that people know how to cheaply evaluate.

By segmenting the market for ideas into small-world network of tight-knit groups loosely connected by central hubs, you enable research groups to specialise to their niche while feeling less pressure to keep up with the global conversation. We don’t need everybody to be correct, we want the community to explore broadly so at least one group finds the next universally-verifiable great solution. If everybody else gets stuck in a variety of delusional echo-chambers, their impact is usually limited to themselves, so the potential upside seems greater. Imo. Maybe.

I only skimmed the post, but it was unusually usefwl for me even so. I hadn’t grokked risk from simply runaway replication of LLMs. Despite studying both evolution & AI, I’d just never thought along this dimension. I always assumed the AI had to be smart in order to be dangerous, but this is a concrete alternative.

People will continue to iteratively experiment with and improve recursive LLMs, both via fine-tuning and architecture search.[1]

People will try to automate the architecture search part as soon as their networks seem barely sufficient for the task.

Many of the subtasks in these systems explicitly involve AIs “calling” a copy of themselves to do a subtask.

OK, I updated: risk is less straightforward than I thought. While the AIs do call copies of themselves, rLLMs can’t really undergo a runaway replication cascade unless they can call themselves as “daemons” in separate threads (so that the control loop doesn’t have to wait for the output before continuing). And I currently don’t see an obvious profit motive to do so.

- ^

Genetic evolution is a central example of what I mean by “architecture search”. DNA only encodes the architecture of the brain with little control over what it learns specifically, so genes are selected for how much they contribute to the phenotype’s ability to learn.

While rLLMs will at first be selected for something like profitability, that may not remain the dominant selection criterion for very long. Even narrow agents are likely to have the ability to copy themselves, especially if it involves persuasion. And given that they delegate tasks to themselves & other AIs, it seems very plausible that failure modes include copying itself when it shouldn’t, even if they have no internal drive to do so. And once AIs enter the realm of self-replication, their proliferation rate is unlikely to remain dependent on humans at all.

All this speculation is moot, however, if somebody just tells the AI to maximise copies of itself. That seems likely to happen soon after it’s feasible to do so.

That’s very kind of you, thanksmuch.

I like the word “leverage point”, or just “opportunity”. I wish to elicit suggestions about what kind of leverage points I could exploit to improve at what I care about. Or, the inverse framing, what are some bottlenecks wrt what I care about that I’m failing to notice? Am I wasting time overoptimising some non-critical path (this is tbh one of my biggest bottlenecks)?

That said, if somebody’s got a suggestion on their heart, I’d happier if they sent me a howler compared to no feedback at all. I regularly (while trying to keep it from getting too annoying) elicit feedback from people who might have it, in case it’s hard for them to bring it up.

I was going to write a short suggestion about profile wikis, but it ended up long so I made it into a post. In a picture: