London GWWC group co-lead: https://www.givingwhatwecan.org/london

Organiser of the EY Effective Altruism workplace group and EA London Quarterly Review coworking sessions.

In my day job, I’m an accountant turned product person in tax technology.

Really excited to see this post! I’ve written a very early draft of something similar so very pleased that it’s already been done (likely better than I would have) so thank you 🤓

My background is Transfer Pricing at a Big 4 but I’ve moved into tax tech. I’d be interested in coordinating other tax nerds, so if you’re reading this and would like to be involved, please message my EA Forum account (I’ll make something happen if there are enough people, but I’m also very happy to just chat about tax).

I think this would be valuable since:

I’ve had some great conversations with other EAs interested in tax policy

I’d love to see more posts like this [1] and it’d be great to get help building out the Tax Policy wiki on the EA Forum

Having a space like this would be a great gateway for mid career folks already in Tax to think about how to use their skills for good (or to start donating effectively)

My take is that, for those just starting their careers and looking to build career capital, a tax graduate scheme with a large international firm is a decent career first step.

Pay is solid for a fresh graduate meaning you can have an immediate impact by giving a % to effective charities.

Depending on the department, the hours are not crazy meaning you can do side projects and volunteer to keep engaged in EA stuff.

In particular, working on EA workplace groups in these key orgs seems valuable for broader community engagement

Large firms tend to have great learning and development opportunities. They will often pay for professional qualifications in accountancy and/or tax (and give you time off to study), and these are very transferable to an operations/organisation building skillset.

Other links readers might be interested in:

Tax Policy for Good talk from EAGx Virtual: https://www.youtube.com/live/N45Q1mAfIz4?si=QOx7AO1a9Lq3XR4m

@OECDtax is a good follow on twitter for updates on their analysis. https://x.com/OECDtax?t=_C_p0wS9XhTtq6WTU5ZZMQ&s=09

The international tax space was also an area that I thought might have been an interesting case study for AI Governance (there was a request for those here) given the conflicting incentives between countries. There’s potentially interesting overlap between International Tax Policy and AI Governance if the technology is as economically transformative as some think and how tax policy could be used to redistribute benefits globally.

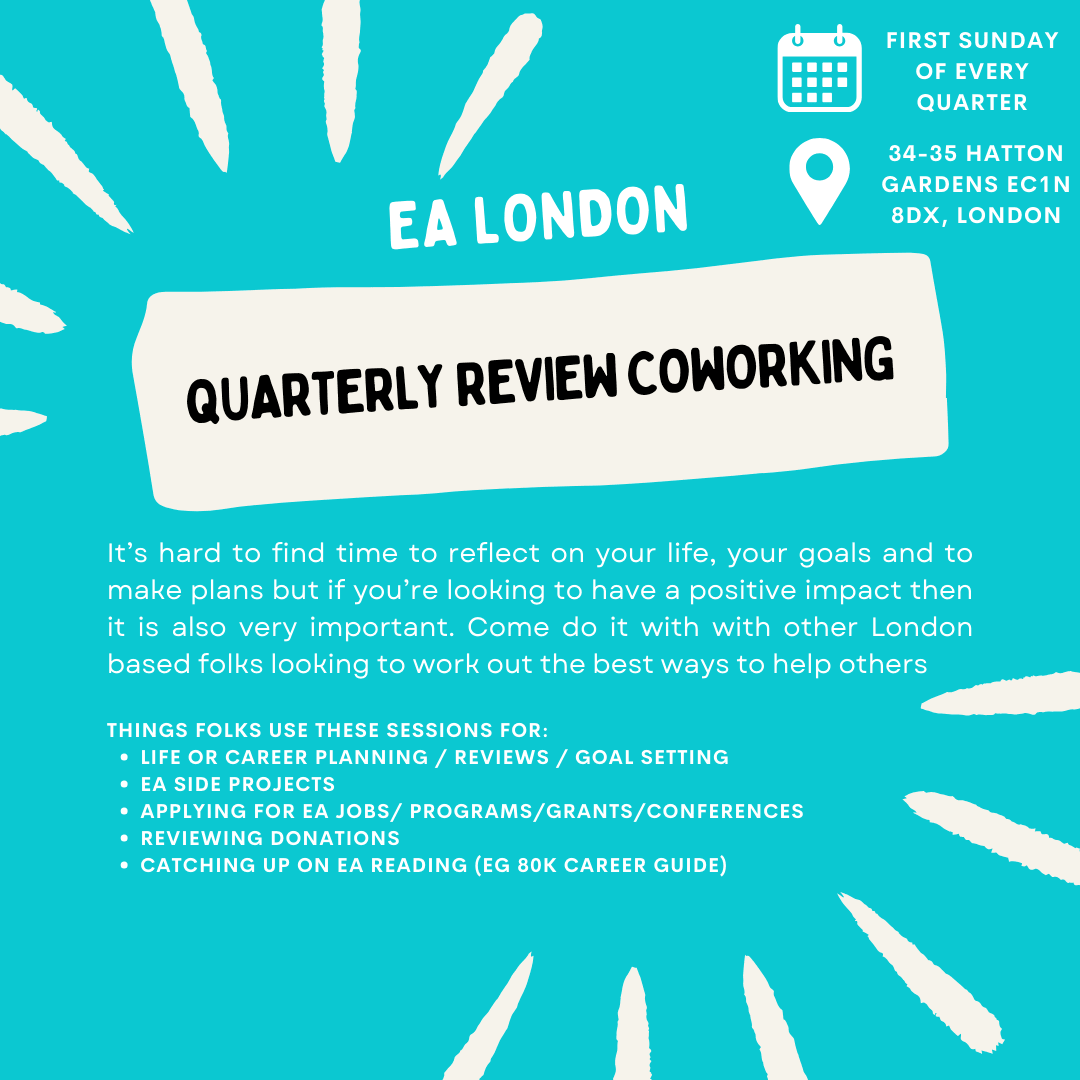

Since they were well received last year, I’m going to be hosting the EA London Quarterly Review Coworking sessions again for 2024.

You can register here: Q1 FY24 session sign up; Q2 FY24 session sign up; Q3 FY24 session sign up; Q4 FY24 session sign up

Thanks to Rishane for making this poster and to LEAH for hosting us.

Thanks for all your support!!! Very excited to have you join us in London ❤️

Absolutely agree—although I’m one of the other GWWC London co-leads so I am also biased here. I think low commitment in person socials are really important and tbh the social proof of meeting people like me who donated significantly was the most important factor for me personally.

I’d like to see people be a lot more public with their pledges. I personally think LinkedIn is underutilised here: adding your pledge to the volunteering section of your profile is low effort but sets a benchmark.

I’ve personally added my pledge to my email signature, but I think this depends a lot on the kind of role you have, the company you work for and if you think the personal reputation risk is worth the potential upside (influencing someone else to donate more to effective charities).

I think this could be especially powerful for senior people who have a lot of influence but equally I’ve had a few meaningful conversations with people off the back of it.

I’ve got a half-written post on this for this forum series and Alex from @Giving What We Can has created some fantastic banner images for LinkedIn profiles. Some resources from GWWC:

Donating anonymously: Should we be private or public about giving to charity? · Giving What We Can

Why you should mention the Pledge in your LinkedIn summary · Giving What We Can

Great comment! I’d add that GWWC pledges in the UK are usually based on pre-tax income, so it wouldn’t actually cost the full £5k. Donations reduce your income for income tax purposes (but not NI); see Payroll Giving (UK) or GAYE - EA Forum (effectivealtruism.org)

i.e.

£50k salary

£4k donation, which is grossed up by 25% from your taxes with Gift Aid to £5k

If you actually donated £5k then that would be a £6.25k total donation when grossed up with Gift Aid.

However, the higher rate tax (40%) band starts at ~£50k a year so every £1 donated above that costs 60p

(I’m working on a longer explainer on this, which updates this piece: UK Income Tax & Donations - EA Forum (effectivealtruism.org). In the meantime you can check out the underlying spreadsheet which creates these graphs here: UK Income tax (including NI) - Google Sheets)
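For anyone who wants to check the arithmetic themselves, here is a minimal sketch. It assumes Gift Aid lets the charity reclaim basic rate tax at 20% (so each £1 given becomes £1.25) and that the higher rate is 40%. The function names and the hard-coded rates are my own illustrative assumptions, not anything taken from HMRC or the spreadsheet above, and NI is ignored.

```python
from decimal import Decimal

# Assumed UK income tax rates (illustrative; check current HMRC figures).
BASIC_RATE = Decimal("0.20")
HIGHER_RATE = Decimal("0.40")


def gift_aid_gross(cash_donation: Decimal) -> Decimal:
    """Amount the charity receives after reclaiming basic rate tax.

    Dividing by (1 - 20%) is the same as adding 25% to the cash donation.
    """
    return cash_donation / (1 - BASIC_RATE)


def cost_to_donor(gross_donation: Decimal, marginal_rate: Decimal) -> Decimal:
    """Net cost of a gross donation once tax relief at the donor's
    marginal income tax rate is taken into account."""
    return gross_donation * (1 - marginal_rate)


if __name__ == "__main__":
    # A £4,000 cash donation is worth £5,000 to the charity.
    print(gift_aid_gross(Decimal("4000")))
    # Each £1 of gross donation from income taxed at 40% costs 60p.
    print(cost_to_donor(Decimal("1"), HIGHER_RATE))
```

Using `Decimal` rather than floats keeps the pound amounts exact, which matters if you want to compare results against a spreadsheet.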

Apologies for the delay in response—it has been a busy month at work!

Thank you for asking!! I have a lot of suggestions on this so have been trying to legibly structure my thoughts.

However, it has ended up turning into a bit of a monster answer and tbh replying to this comment is blocking me doing effective giving posts.

So I’m going to prioritise writing those this week and get back to you later.

Thanks for understanding!

Fantastic post and thank you for articulating this! I feel really similarly doing workplace organising—a lot of the value seems to be driven from connecting people to other people that take doing good seriously.

Some people struggle to work out what the EA community is supposed to do for them, or what the point of it all is. For what it’s worth, my experience has been that this confusion extends to all levels of seniority within the community. But for me, participating in the community was the obvious way to counter the attrition Brooks warned of. I tend to agree that you will tend to become more like those around you, but that applies to people other than your colleagues, and you can choose who those people are! Maybe those ‘EAs’ even find what you are doing praiseworthy, but a lot of the power is just in feeling less weird for trying.

I often feel like people working at core EA orgs forget how valuable this is for the vast majority of EAs, who do not work with other EAs. Almost everyone I know outside EA, from my parents to my colleagues to my neighbours, is not seeking to improve the wider world with any significant fraction of their resources. They’re just getting on with their lives and trying to do right by the people they meet. To the extent they are aware of my giving, their attitude is one of curious fascination.

Do you have thoughts on what you’d like to see more of in community building to support E2Gers? I’d be particularly curious about what you think made a difference when you were younger vs now

Oh wow!!! We’d love that!!!

I can arrange for there to be a physical certificate for you to sign.

Hi Mohammad—apologies for the delay in my response.

I understand how you feel. It was easy to get caught up in the opportunities to get lots of money to tackle the suffering and pressing problems in the world. But, retrospectively I think this was a big mistake on their part and everyone involved in EA needs to take a serious look at their approach to risk.

Hopefully, we can avoid falling into this trap again in the future.

Thank you! That’s very kind!

I feel similarly about finding EA later in my life—I heard about it when I was a few years into my career rather than in university. I’m glad I did because if I’d heard about it in uni, I could imagine it becoming my whole deal. I’ve got a lot of value from working a normie corporate job first and I’m glad a lot of my friends really don’t care about EA at all.

One of my other half-written drafts is about the benefits of doing graduate training at an employer that churns out dozens of graduates a year rather than a small EA organisation (where the quality of management, mentorship, training and support is more variable). I think the 80k advice on career capital for new grads is great and getting people to think about their long term output (thinking 20-30 years ahead rather than just 5) is excellent, but I think their ideas for initial first jobs are limited (and so obviously written by cerebral Oxford grads who would have access to top of the range opportunities).

IMO they underrate graduates spending their first few years post-grad joining professions where there are existing networks and professional ethics requirements. Examples would be law/accountancy/engineering/medicine/teaching etc. I think there are downsides (time requirement, skills you might not use later) but I think there are benefits to having a more diverse non-academia EA talent pipeline and I want to spread effective giving into those spaces!! Having the pipeline mostly filled with early start up employees, policy people and management consultants is high risk—none of these roles are accountable to external ethical or professional standards. Plus, having worked in international tax, I now have opinions on potentially high impact tax policy work that isn’t obvious to people without that background—I like being able to bring a different perspective.

***

Good for you on bad criticisms! Keep at it 💪

Hmmm I’m not being as prescriptive as that. Maybe there is a better solution to this specific problem—maybe requiring someone with higher karma to confirm the suggestion? (original person gets the credit)

See also the Payroll Giving (UK) or GAYE - EA Forum (effectivealtruism.org) page, which is the top Google result for “Effective Altruism Payroll Giving”. It made sense for me to update it since I am an accountant and have experience trying to get this done at my workplace.

Did I need to make a post about something unrelated to do that?

Should we be making it so difficult for users with an EA forum account to make updates to the forum wikis?

I imagine the platform vision for the EA forum is to be the “Wikipedia for do-gooders” and make it useful as a resource for people working out the best ways to do good. For example, when you google “Effective Altruism AI Safety” on incognito mode—the first result is the forum topic on AI safety: AI safety—EA Forum (effectivealtruism.org)

I was chatting about this to @Rusheb, who has spent the last year upskilling to transition into AI Safety from software development. He had some great ideas for links (e.g. new 80k guides, or sites with links for newbies or for people making the transition from software engineering).

Ideally someone who had this experience and opinions on what would be useful on a landing page for AI Safety should be able to suggest this on the wiki page (like you can do on Wikipedia with the caveat that you can be overruled). However, he doesn’t have the forum karma to do that and the tooltip explaining that was unclear on how to get the karma to do it.

I have the forum karma to do it but I don’t think I should get the credit—I didn’t have the AI safety knowledge—he did. In this scenario, the forum has lost out on some free improvements to its wiki plus an engaged user who would feel “bought in”. Is there a way to “lend him” my karma?

I got it from posting about EA Taskmaster which shouldn’t make me an authority on AI Safety.

Agree and thanks for writing this up Nick!

I like 80k’s recent shift to pushing its career guide again (it’s what initially brought me into EA; the writing is so good!) and focusing on skill sets.

Really appreciate those diagrams—thanks for making them! I agree and think there are serious risks from EA being taken over as a field by AI safety.

The core ideas behind EA are too young and too unknown by most of the world for them to be strangled by AI safety—even if it is the most pressing problem.

Pulling out a quote from MacAskill’s comment (since a lot of people won’t click)

I’ve also experienced what feels like social pressure to have particular beliefs (e.g. around non-causal decision theory, high AI x-risk estimates, other general pictures of the world), and it’s something I also don’t like about the movement. My biggest worries with my own beliefs stem around the worry that I’d have very different views if I’d found myself in a different social environment. It’s just simply very hard to successfully have a group of people who are trying to both figure out what’s correct and trying to change the world: from the perspective of someone who thinks the end of the world is imminent, someone who doesn’t agree is at best useless and at worst harmful (because they are promoting misinformation).

In local groups in particular, I can see how this issue can get aggravated: people want their local group to be successful, and it’s much easier to track success with a metric like “number of new AI safety researchers” than “number of people who have thought really deeply about the most pressing issues and have come to their own well-considered conclusions”.

One thing I’ll say is that core researchers are often (but not always) much more uncertain and pluralist than it seems from “the vibe”.

...

What should be done? I have a few thoughts, but my most major best guess is that, now that AI safety is big enough and getting so much attention, it should have its own movement, separate from EA. Currently, AI has an odd relationship to EA. Global health and development and farm animal welfare, and to some extent pandemic preparedness, had movements working on them independently of EA. In contrast, AI safety work currently overlaps much more heavily with the EA/rationalist community, because it’s more homegrown.

If AI had its own movement infrastructure, that would give EA more space to be its own thing. It could more easily be about the question “how can we do the most good?” and a portfolio of possible answers to that question, rather than one increasingly common answer — “AI”.

At the moment, I’m pretty worried that, on the current trajectory, AI safety will end up eating EA. Though I’m very worried about what the next 5-10 years will look like in AI, and though I think we should put significantly more resources into AI safety even than we have done, I still think that AI safety eating EA would be a major loss. EA qua EA, which can live and breathe on its own terms, still has huge amounts of value: if AI progress slows; if it gets so much attention that it’s no longer neglected; if it turns out the case for AI safety was wrong in important ways; and because there are other ways of adding value to the world, too. I think most people in EA, even people like Holden who are currently obsessed with near-term AI risk, would agree.

The OECD are currently hiring for a few potentially high-impact roles in the tax policy space:

The Centre for Tax Policy and Administration (CTPA)

Executive Assistant to the Director and Office Manager (closes 6th October)

Senior programme officer (closes 28th September)

Head of Division—Tax Administration and VAT (closes 5th October)

Head of Division—Tax Policy and Statistics (closes 5th October)

Head of Division—Cross-Border and International Tax (closes 5th October)

Team Leader—Tax Inspectors Without Borders (closes 28th September)

I know less about the impact of these other areas but these look good:

Trade and Agriculture Directorate (TAD)

Head of Section, Codes and Schemes—Trade and Agriculture Directorate (closes 25th September)

Programme Co-ordinator (closes 25th September)

International Energy Agency (IEA)

Clean Energy Technology Analysts (closes 24th September)

Modeller and Analyst – Clean Shipping & Aviation (closes 24th September)

Analyst & Modeller – Clean Energy Technology Trade (closes 24th September)

Data Analyst—Temporary (closes 28th September)

Financial Action Task Force

I agree and this is why I’m in favour of a Big Tent approach to EA. This risk comes from a lack of understanding about the diversity of thought within EA and that it isn’t claiming to have all the answers. There is a danger that poor behaviour from one part of the movement can impact other parts.

Broadly EA is about taking a Scout Mindset approach to doing good with your donations, career and time. Individual EAs and organisations can have opinions on what cause areas need more resources at the margin but “EA” can’t—it isn’t a person, it’s a network.

I really liked this post How CEA’s communications team is thinking about EA communications at the moment — EA Forum (effectivealtruism.org) from @Shakeel Hashim and hope that whatever happens in terms of shake ups at CEA—communications and clarity around the EA brand are prioritised.

Hi—this seemed cool. Is there an update on where this got to?

Could you say more about the strong evidence beyond the statements by Bill Gates?

See my other comment

On the LMIC migration platform, I found this UK based for-profit doing what looks like a similar thing:

https://www.getborderless.io/about-us