Also interested in this question! CC @Nathan Young who might know

Saul Munn

We ran the first West Coast EA Retreat (a retrospective)

UC Berkeley EA is hosting a west coast uni student EA retreat on april 10-12, with ~50 attendees from Berkeley, Stanford, UCLA, UCI, UCSD, & more, as well as special guests like Matt Reardon, Jake McKinnon, Jesse Gilbert, Julie Steele, Adam Khoja, Richard Ren, & more...

...but we only know to reach out to people who’re involved with their uni’s clubs. so: if you’re interested in attending, book a 5-10 minute chat with alex or aiden :)

some examples of gaps in our outreach:

unis that don’t have an EA club

students who haven’t joined their uni’s EA club

transfers to west-coast unis

students who’re on leave from their uni and presently living on the west coast

high-schoolers who’ll soon be starting at west coast unis

we won’t be able to take everyone, but reading the ea forum is a pretty positive indicator that you’d be a good fit!

some further & updated thoughts, written in ~30 min, are below. canonical version lives here.

Here’s a frame I’ve found helpful for thinking about effective altruism:

When I look inside myself, I notice that I care about a lot of things.

You could also reasonably replace “caring” with “wanting,” “preferring,” “valuing,” “desiring,” “having goals,” etc. I’m okay being loose.

Some examples of things I care about:

I want my sister to have an excellent career.

I’m hungry, and want some food.

I want to be valued by people I respect.

I want my dogs to have enjoyable lives.

(And many, many more).

(It’s often useful to be introspective/clear-eyed about what you care about, what that ontology looks like, which values are instrumental to which other values, etc., but I won’t be doing that here, and indeed I think it might be anti-helpful in this particular frame at this particular time. Stay with me until the end.)

Sort-of by definition, I want more of the things I care about. I see my life as a difficult, high-level optimization problem aimed at making decisions which, given my resources at various times, increase my values across time.

Some of the things I care about — like wanting food because I’m hungry — are fundamentally oriented at myself. And I take actions to do better along these axes.

Some examples of actions:

Reading a book on tax strategies

Learning how to cook

Asking people for feedback on my sartorial choices

etc

And in general, I try to be effective at getting what I want, here — that is, I aim to achieve these kinds of goals/values/preferences to as great of a degree as possible.

But other things I care about — like wanting my sister to have an excellent career, or my dogs to have enjoyable lives — are fundamentally oriented at others-by-their-lights. And I take actions to do better along these axes, too.

These motivations often look starkly different in a lot of different situations.

For some of these altruistic motivations, it just so happens that some lovely dynamics have coalesced such that there’s an existing group of people / infrastructure / etc who have worked & are working quite hard toward helping me get what I want w/r/t some of those things I care about that are oriented at others-by-their-lights. In particular, I haven’t found any community which is more effective at helping me achieve the things I care about that are oriented at others-by-their-lights than this one.

(The group of people / infrastructure / etc I’m referring to is effective altruism.)

Why do I like this frame?

Because it’s apparent that I care about quite a few things. It becomes evident quickly that totalizing stances toward EA are just not worth it; a bad trade; just getting less of what I want.

In particular, I think this kind of frame can be validating toward folks who’ve gone quite far, and repressed the values that they in-fact have in other areas of their life. (I think I was in this camp ~two years ago.)

There are interesting subproblems that come into clearer view, e.g.:

When should, on the margin, my resources go toward different things that I care about?

What actions would get me more access to the things that I want with greater robustness (i.e. getting me closer to many different things I want, all at once)?

etc

Started something sorta similar about a month ago: https://saul-munn.notion.site/A-collection-of-content-resources-on-digital-minds-AI-welfare-29f667c7aef380949e4efec04b3637e9?pvs=74

What, concretely, would that involve? / What, concretely, are you proposing?

I think affecting P(things go really well | no AI takeover) is pretty tractable!

What interventions are you most excited about? Why? What are they bottlenecked on?

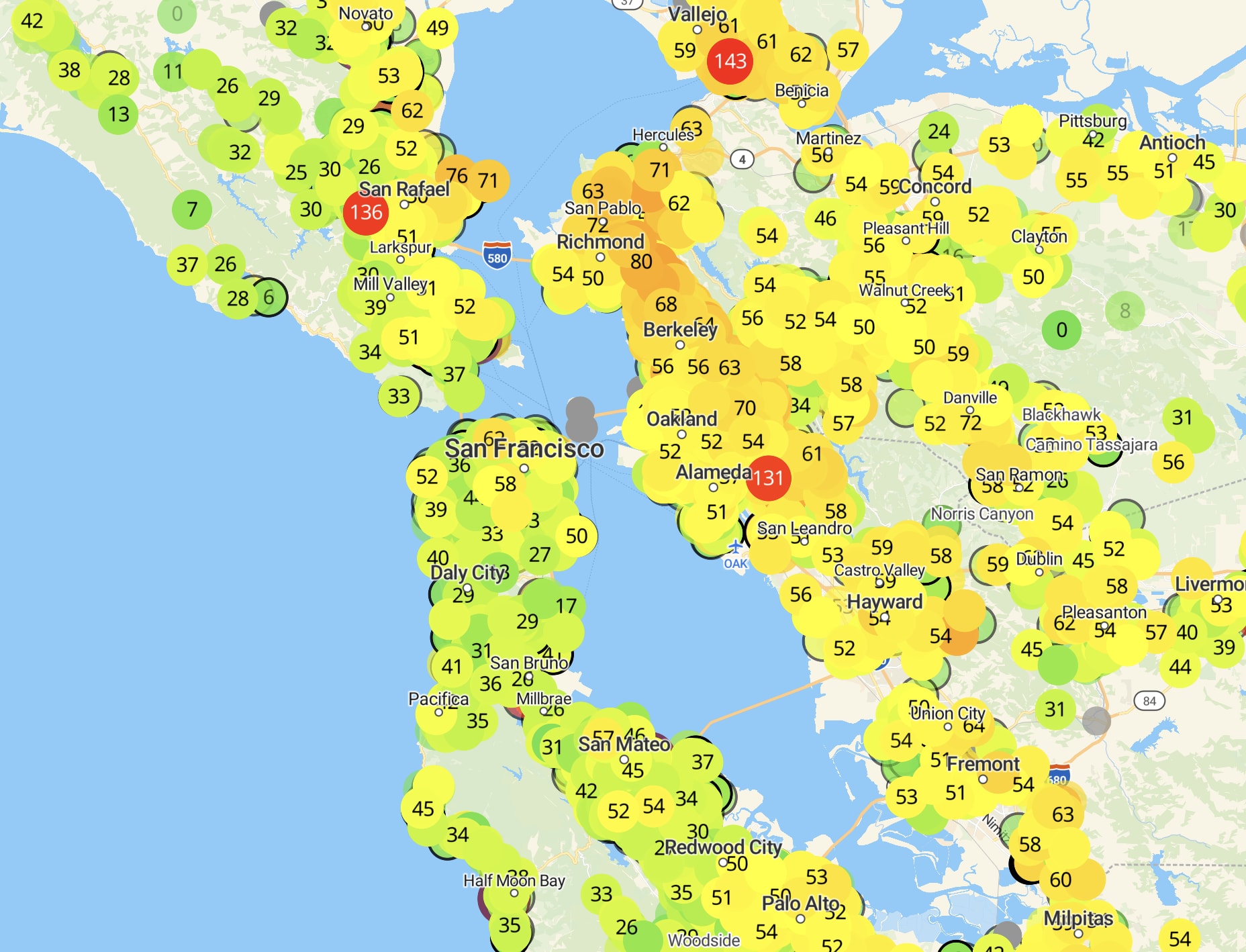

PurpleAir collects data from a network of private air quality sensors. Looks interesting, and possibly useful for tracking rapid changes in air quality (e.g. from a wildfire).

(written v quickly, sorry for informal tone/etc)

i think that a happy medium is getting small-group conversations (that are useful, effective, etc) of size 3–4 people. this includes 1-1s, but the vibe of a Formal, Thirty Minute One on One is a very different vibe from floating through 10–15 conversations of 3–4 people in a day, each lasting a varying amount of time.

much more information can flow with 3-4 ppl than with just 2 ppl

people can dip in and out of small conversations more than they can with 1-1s

more-organic time blocks means that particularly unhelpful conversations can end after 5-10m, and particularly helpful ones can last the duration that would be good for them to last (even many hours!)

3-4 person conversations naturally select for a good 1-1. once 1-2 people have left a 3-4 person conversation, the conversation is then just a 1-1 of the two people who’ve engaged in the conversation longest — which seems like some evidence of their being a good match for a 1-1.

however, i think that this is operationally much harder to do for organizers than just 1-1s. my understanding is that this is much of the reason EAGs (& other conferences) do 1-1s, instead of small group conversations.

i think Writehaven did a mediocre job of this at LessOnline this past year (but, tbc, it did vastly better than any other piece of software i’ve encountered).

i think Lighthaven as a venue forces this sort of thing to happen, since there are so so so many nooks for 2-4 people to sit and chat, and the space is set up to make 10+ person conversations less likely to happen.

i know that The Curve (from @Rachel Weinberg) created some “Curated Conversations”: they manually selected people to have predetermined conversations for some set amount of time. iirc this was typically 3-6 people for ~1h, but i could be wrong on the details. rachel: how did these end up going, relative to the cost of putting them together?

[srs unconf at lighthaven this sunday 9/21]

Memoria is a one-day festival/unconference for spaced repetition, incremental reading, and memory systems. It’s hosted at Lighthaven in Berkeley, CA, on September 21st, from 10am through the afternoon/evening.

Michael Nielsen, Andy Matuschak, Soren Bjornstad, Martin Schneider, and about 90–110 others will be there — if you use & tinker with memory systems like Anki, SuperMemo, Remnote, MathAcademy, etc, then maybe you should come!

Tickets are $80 and include lunch & dinner. More info at memoria.day.

have you tried Fatebook.io, from @Adam Binksmith?

i thought this was excellent. thank you for writing it up!

Thank you for this! It can take a lot of effort to write, edit, and publish reports like this, but they (generally) create quite a bit of value. I found this one exceptionally concrete & clear to read — well done!

thanks for writing/cross-posting! i particularly liked that you had the reader pause at a moment where we could reasonably attempt to figure it out on our own.

I skimmed most of this post, and it seems great! Thank you for writing it.

However:

In this post, I introduce a concept I call surface area for serendipity …

I’m not sure what you mean by “introduce” here? I read it as “here is this new phrase that I’m putting forward for use” — unless I’m misunderstanding, I’m quite confident that’s wrong. You’re definitely not the first person to use the phrase.

Consider editing it to be:

In this post, I describe a concept I use called surface area for serendipity…

Or something similar.

fwiw i instinctively read it as the 2nd, which i think is caleb’s intended reading

Saul Munn’s Quick takes

why do i find myself less involved in EA?

epistemic status: i timeboxed the below to 30 minutes. it’s been bubbling for a while, but i haven’t spent that much time explicitly thinking about this. i figured it’d be a lot better to share half-baked thoughts than to keep it all in my head — but accordingly, i don’t expect to reflectively endorse all of these points later down the line. i think it’s probably most useful & accurate to view the below as a slice of my emotions, rather than a developed point of view. i’m not very keen on arguing about any of the points below, but if you think you could be useful toward my reflecting processes (or if you think i could be useful toward yours!), i’d prefer that you book a call to chat more over replying in the comments. i do not give you consent to quote my writing in this short-form without also including the entirety of this epistemic status.

1-3 years ago, i was decently involved with EA (helping organize my university EA program, attending EA events, contracting with EA orgs, reading EA content, thinking through EA frames, etc).

i am now a lot less involved in EA.

e.g. i currently attend uc berkeley, and am ~uninvolved in uc berkeley EA

e.g. i haven’t attended a casual EA social in a long time, and i notice myself ughing in response to invites to explicitly-EA socials

e.g. i think through impact-maximization frames with a lot more care & wariness, and have plenty of other frames in my toolbox that i use to a greater relative degree than the EA ones

e.g. the orgs i find myself interested in working for seem to do effectively altruistic things by my lights, but seem (at closest) to be EA-community-adjacent and (at furthest) actively antagonistic to the EA community

(to be clear, i still find myself wanting to be altruistic, and wanting to be effective in that process. but i think describing my shift as merely moving a bit away from the community would be underselling the extent to which i’ve also moved a bit away from EA’s frames of thinking.)

why?

a lot of EA seems fake

the stuff — the orientations — the orgs — i’m finding it hard to straightforwardly point at, but it feels kinda easy for me to notice ex-post

there’s been an odd mix of orientations toward [ aiming at a character of transparent/open/clear/etc ] alongside [ taking actions that are strategic/instrumentally useful/best at accomplishing narrow goals… that also happen to be mildly deceptive, or lying by omission, or otherwise somewhat slimy/untrustworthy/etc ]

the thing that really gets me is the combination of an implicit (and sometimes explicit!) request for deep trust alongside a level of trustworthiness that doesn’t live up to that expectation.

it’s fine to be in a low-trust environment, and also fine to be in a high-trust environment; it’s not fine to signal one and be the other. my experience of EA has been that people have generally behaved extremely well/with high integrity and with high trust… but not quite as well & as high as what was written on the tin.

for a concrete ex (& note that i totally might be screwing up some of the details here, please don’t index too hard on the specific people/orgs involved): when i was participating in — and then organizing for — brandeis EA, it seemed like our goal was (very roughly speaking) to increase awareness of EA ideas/principles, both via increasing depth & quantity of conversation and via increasing membership. i noticed a lack of action/doing-things-in-the-world, which felt kinda annoying to me… until i became aware that the action was “organizing the group,” and that some of the organizers (and higher up the chain, people at CEA/on the Groups team/at UGAP/etc) believed that most of the impact of university groups comes from recruiting/training organizers — that the “action” i felt was missing wasn’t missing at all, it was just happening to me, not from me. i doubt there was some point where anyone said “oh, and make sure not to tell the people in the club that their value is to be a training ground for the organizers!” — but that’s sorta how it felt, both on the object-level and on the deception-level.

this sort of orientation feels decently representative of the 25th percentile end of what i’m talking about.

also some confusion around ethics/how i should behave given my confusion/etc

importantly, some confusions around how i value things. it feels like looking at the world through an EA frame blinds me to things that i actually do care about, and blinds me to the fact that i’m blinding myself. i think it’s taken me a while to know what that feels like, and i’ve grown to find that blinding & meta-blinding extremely distasteful, and a signal that something’s wrong.

some of this might merely be confusion about orientation, and not ethics — e.g. it might be that in some sense the right doxastic attitude is “EA,” but that the right conative attitude is somewhere closer to (e.g.) “embody your character — be kind, warm, clear-thinking, goofy, loving, wise, [insert more virtues i want to be here]. oh and do some EA on the side, timeboxed & contained, like when you’re donating your yearly pledge money.”

where now?

i’m not sure! i could imagine the pendulum swinging more in either direction, and want to avoid doing any further prediction about where it will swing for fear of that prediction interacting harmfully with a sincere process of reflection.

i did find writing this out useful, though!

Thanks for the clarification — I’ve sent a similar comment on the Open Phil post, to get confirmation from them that your reading is accurate :)

hey, thanks for commenting!

this was a retreat for west coast ea uni students — we focused on LA & Bay Area schools, but would’ve been happy to have e.g. oregon schools there too.

re: filtering, our main reflection here was that, given an existing pool of high-context folks, retreats can be a useful way for those folks to coordinate & get to know each other better in ways that are hard to replicate elsewhere. and although it’s possible to use retreats to help newcomers get acquainted with EA, we thought other pathways (intro fellowships, 1:1s with organizers, reading intro material online, etc) would be more effective/scalable for newcomers, while avoiding the problem of making the whole ambient atmosphere lower context.

so — we definitely don’t think all retreats should filter out newcomers, just that retreats which do some filtering will be able to provide benefits to attendees that retreats which don’t do filtering won’t be able to provide.

maybe a relevant analogy here is EAGs vs EAGx’s: the former has a higher bar, but both are super useful at what they’re aiming for.