Scriptwriter for RationalAnimations! Interested in lots of EA topics, but especially ideas for new institutions like prediction markets, charter cities, georgism, etc. Also a big fan of EA / rationalist fiction!

Jackson Wagner

EcoResilience Initiative (https://ecoresilienceinitiative.com) works on this, in particular on non-climate-related stuff. They agree with Giving Green that, counterintuitively, Good Food Institute (usually talked about by animal-welfare fans) might actually be one of the most promising charities in terms of protecting biodiversity and the environment, because if plant-based meat ever took off in a big way it would have massive effects on agricultural land use (ie much less deforestation & habitat destruction would happen).

They can keep their old branding if they just put an asterisk after “hours” and explain that 80,000 Hours* now refers to human-worker-hour-equivalent efforts spent on highly-effective cause areas (aka “effective compute”), not necessarily literal human labor hours.

Interesting and thoughtful post. However, I believe your Google Ngram result is potentially confounded by the imminent rise of the Antichrist and ensuing Tribulation of convulsive war and death that will rip like wildfire across all the nations of Earth. This event is projected to occur sometime in the early 2040s, cf. Angela Cotra’s “Forecasting Transformative AC with Eschatological Anchors” (though more recent estimates like “AC 2027” have argued for shorter timelines), and it is spuriously boosting mentions of the “apocalypse”.

Once you correct for this confounding variable (ie, that each and every one of us is about to be plunged into a world of ceaseless conflict and violence, mercilessly hunted down by the Four Horsemen, moon turned to blood, etc), you’ll see that eschatologically-adjusted mentions of “apocalypse” are actually no higher than the historical base rate, proving that there’s nothing to worry about and everything will be fine.

Giving up on EA after 13 years

Talk to sperm whales --> the US military figures out how to pay them to covertly tail russian subs --> eventually more people find out about this and pretty soon thanks to whales everybody knows where everyone’s subs are at all times --> the assuredness of nations’ second-strike nuclear capability is eroded --> destabilized game-theoretic dynamics once again favor first-strike --> nuclear armageddon.

(This is 95% a joke, but if somebody would please research “do whales offer any notable advantages vs naval drones, satellite-based wake detection, or other techniques for tracking nuclear submarines” I would feel a bit more assured...)

“Articulate a stronger defense of why they’re good?”

I’m no expert on animal-welfare stuff, but just thinking out loud, here are some benefits that I could imagine coming from this technology (not trying to weigh them up versus potential harms or prioritize which seem largest or anything like that):

You imagine negative PR consequences once we realize that animals might mostly be thinking about basic stuff like food and sex, but I picture that being only a small second-order consequence. The primary effect, I suspect, is that people’s empathy for animals might be greatly increased by realizing they think about stuff and communicate at all. The idea that animals (especially, like, whales) have sophisticated thoughts and communicate, and the intuition that they probably have valuable internal subjective experience, might both seem “obvious” to animal-welfare activists, but I think most normal people globally either sorta believe that animals have feelings (but don’t think about this very much) or else explicitly believe that animals lack consciousness / can’t think like humans because they don’t have language / don’t have full human souls (if the person is religious) / etc. Hearing animals talk would, I expect, wake people up a little bit more to the idea that intelligence & consciousness exist on a spectrum and animals have some valuable experience (even if less so than humans).

In particular, I’m definitely picturing that the journalists covering such experiments are likely to be some combination of 1. environmentalists who like animals, 2. animal rights activists who like animals, 3. people who just think animals are cute and figure that a feel-good story portraying animals as sweet and cute will obviously do better numbers than a boring story complaining about how dumb animals are. So, with friendly media coverage, I expect the biggest news stories will be about the cutest / sweetest / most striking / saddest things that animals say, not the boring fact that they spend most of their time complaining about bodily needs just like humans do.

Compare for instance “news coverage” of (and other cultural perceptions of) human children. To the extent that toddlers can talk, they are mostly just demanding things, crying, failing to understand stuff, etc. Yet, we find this really cute and endearing (eg, I am a father of a toddler myself, and it’s often very fun). I bet animal communication would similarly be perceived positively, even if (like babies) they’re really dumb compared to adult humans.

Talk-to-animals tech also seems potentially philosophically important in some longtermist, “sentient-futures” style ways:

What’s good versus bad for an animal? Right now we literally just have to guess, based on eyeballing whether the creature seems happy. And if you are less of a total-hedonic-utilitarian, more of a preference utilitarian, the situation gets even worse. It would be nice if we could just ask animals what their problems are, what kind of things they want, etc! Even a very small amount of communication would really increase what we are able to learn about animals’ preferences, and thus how well we are able to treat them in a best-case scenario.

Maybe we could use this tech to do scientific studies and learn valuable things about consciousness, language, subjective experience, etc, in a way that clarifies humanity’s thinking about these slippery issues and helps us better avoid moral catastrophes (perhaps becoming more sympathetic to animals as a result, or getting a better understanding of when AI systems might or might not be capable of suffering).

Perhaps humanity has some sort of moral obligation to (someday, after we solve more pressing problems like not destroying the world or creating misaligned AI) eventually uplift creatures like whales, monkeys, octopi, etc, so they too can explore and comprehend the universe together with us. Talk-to-animals tech might be an early first step toward such future goals, might set early precedents, might help us learn about some of the philosophical / moral choices we would need to make if we embarked on a path of uplifting other species, idk.

it also advocates for the government of California to in-house the engineering of its high-speed rail project rather than try to outsource it to private contractors

Hence my initial mention of “high state capacity”? But I think it’s fair to call abundance a deregulatory movement overall, in terms of, like… some abstract notion of what proportion of economic activity would become more vs less heavily involved with government, under an idealized abundance regime.

Sorry to be confusing by “unified”—I didn’t mean to imply that individual people like klein or mamdani were “unified” in toeing an enforced party line!

Rather I was speculating that maybe the reason the “deciding to win” people (moderates such as matt yglesias) and the “abundance” people tend to overlap more so than abundance + left-wingers, is because the abundance + moderates tend to share (this is what I meant by “are unified by”) opposition to policies like rent control and other price controls, tend to be less enthusiastic about “cost-disease-socialism” style demand subsidies since they often prefer to emphasize supply-side reforms, tend to want to deemphasize culture-war battles in favor of an emphasis on boosting material progress / prosperity, etc. Obviously this is just a tendency, not universal in all people, as people like mamdani show.

FYI, I’m totally 100% on board with your idea that abundance is fully compatible with many progressive goals and, in fact, is itself a deeply progressive ideology! (cf me being a huge georgist.) But, uh, this is the EA Forum, which is in part about describing the world truthfully, not just spinning PR for movements that I happen to admire. And I think it’s an appropriate summary of a complex movement to say that abundance stuff is mostly a center-left, deregulatory, etc movement.

Imagine someone complaining—it’s so unfair to describe abundance as a “democrat” movement!! That’s so off-putting for conservatives—instead of ostracising them, we should be trying to entice them to adopt these ideas that will be good for the american people! Like Montana and Texas passing great YIMBY laws, Idaho deploying modular nuclear reactors, etc. In lots of ways abundance is totally coherent with conservative goals of efficient government services, human liberty, a focus on economic growth, et cetera!!

That would all be very true. But it would still be fair to summarize abundance as primarily a center-left democrat movement.

To be clear I personally am a huge abundance bro, big-time YIMBY & georgist, fan of the Institute for Progress, personally very frustrated by assorted government inefficiencies like those mentioned, et cetera! I’m not sure exactly what the factional alignments are between abundance in particular (which is more technocratic / deregulatory than necessarily moderate—in theory one could have a “radical” wing of an abundance movement, and I would probably be an eager member of such a wing!) and various forces who want the Dems to moderate on cultural issues in order to win more (like the recent report “Deciding to Win”). But they do strike me as generally aligned (perhaps unified in their opposition to lefty economic proposals which often are neither moderate nor, like… correct).

A couple more “out-there” ideas for ecological interventions:

“recording the DNA of undiscovered rainforest species”—yup, but it probably takes more than just DNA sequences on a USB drive to de-extinct a creature in the future. For instance, probably you need to know about all kinds of epigenetic factors active in the embryo of the creature you’re trying to revive. To preserve this epigenetic info, it might be easiest to simply freeze physical tissue samples (especially gametes and/or embryos) instead of doing DNA sequencing. You might also need to use the womb of a related species—bringing back mammoths is made a LOT easier by the fact that elephants are still around! -- and this would complicate plans to bring back species that are only distantly related to anything living. I want to better map out the tech tree here, and understand what kinds of preparation done today might aid what kinds of de-extinction projects in the future.

Normal environmentalists worry about climate change, habitat destruction, invasive species, pollution, and other prosaic, slow-rolling forms of mild damage to the natural environment. Not on their list: nuclear war, mirror bacteria, or even something as simple as AGI-supercharged economic growth that sees civilization’s economic footprint doubling every few years. I think there is a lot that we could do, relatively cheaply, to preserve at least some species against such catastrophes.

For example, seed banks exist. But you could possibly also save a lot of insects from extinction by maintaining some kind of “mostly-automated insect zoo in a bunker”, a sort of “generation ship” approach as opposed to the “cryosleep” approach that seed banks can use. (Also, are even today’s most hardcore seed banks hardened against mirror bacteria and other bio threats? Probably not! Nor do many of them even bother storing non-agricultural seeds for things like random rainforest flowers.)

Right now, land conservation is one of the cheapest ways of preventing species extinctions. But in an AGI-transformed world, even if things go very well for humanity, the economy will be growing very fast, gobbling up a lot of land, and probably putting out a lot of weird new kinds of pollution. (Of course, we could ask the ASI to try and mitigate these environmental impacts, but even in a totally utopian scenario there might be very strong incentives to go fast, eg to more quickly achieve various sublime transhumanist goods and avoid astronomical waste.) By contrast, the world will have a LOT more capital, and the cost of detailed ecological micromanagement (using sensors to gather lots of info, using AI to analyze the data, etc) will be a lot lower. So it might be worth brainstorming ahead of time what kinds of ecological interventions might make sense in such a world, where land is scarce but capital is abundant and customized micro-attention to every detail of an environment is cheap. This might include high-density zoos like described earlier, or “let the species go extinct for now, but then reliably de-extinct them from frozen embryos later”, or “all watched over by machines of loving grace”-style micromanaged forests that achieve superhumanly high levels of biodiversity in a very compact area (and minimizing wild animal suffering by both minimizing the necessary population and also micromanaging the ecology to keep most animals in the population in a high-welfare state).

A lot of today’s environmental-protection / species-extinction-avoidance programs aren’t even robust to, like, a severe recession that causes funding for the program to get cut for a few years! Mainstream environmentalism is truly designed for a very predictable, low-variance future… it is not very robust to genuinely large-scale shocks.

It’s kind of fuzzy and unclear what’s even important about avoiding species extinctions or preserving wild landscapes or etc, since these things don’t fit neatly into a total-hedonic-utilitarian framework. (In this respect, eco-value is similar to a lot of human culture and art, or values like “knowledge” or “excellence” and so forth.) But, regardless of whether or not we can make philosophical progress clarifying exactly what’s important about the natural world, maybe in a utopian future we could find crazy futuristic ways of generating lots more ecological value? (Obviously one would want to do this while avoiding creating lots of wild-animal suffering, but I think this still gives us lots of options.)

Obviously stuff like “bringing back mammoths” is in this category.

But maybe also, like, designing and creating new kinds of life? Either variants of earth life (what kinds of interesting things might dinosaurs have evolved into, if they hadn’t almost all died out 65 million years ago?), or totally new kinds of life that might be able to thrive on, eg, Titan or Europa (though obviously this sort of research might carry some notable bio-risks a la mirror bacteria, thus should perhaps only be pursued from a position of civilizational existential security).

Creating simulated, digital life-forms and ecologies? A culture really obsessed with cool crystals might be overjoyed to learn about mathematics and geometry; in the same way, simulation might let us study new kinds of life.

There is probably a lot of exciting stuff you could do with advanced biotech / gene editing technologies, if the science advances and if humanity can overcome the strong taboo in environmentalism against taking active interventions in nature. (Even stuff like “take some seeds of plants threatened by global warming, drive them a few hours north, and plant them there where it’s cooler and they’ll survive better” is considered controversial by this crowd!)

Just like gene drives could help eradicate / suppress human scourges like malaria-carrying mosquitoes, we could also use gene drives to do tailored control of invasive species (which are something like the #2 cause of species extinctions, after #1 habitat destruction). Right now, the best way to control invasive species is often “biocontrol” (introducing natural predators of the species that’s causing problems) -- biocontrol actually works much better than its terrible reputation suggests, but it’s limited by the fact that there aren’t always great natural predators available, it takes a lot of study and care to get it right, etc.

Possibly you could genetically-engineer corals to be tolerant of slightly higher temperatures, and generally use genetic tech to help species adapt more quickly to a fast-changing world.

EcoResilience Initiative is working on applying EA principles (ITN analysis, cost-effectiveness, longtermist orientation, etc) to ecological conservation. But right now it’s just my wife Tandena and a couple of her friends doing research on a part-time volunteer basis, no funding or anything, lol.

Here are two recent posts of theirs describing their enthusiasm for precision fermentation technologies (already a darling of the animal-welfare wing of EA) due to its potentially transformative impact on land use if lots of people ever switch from eating meat towards eating more precision-fermentation protein. And here are some quick takes of theirs on deep ocean mining (investigating the ecological benefits of mining the seabed and thereby alleviating current economic pressures to mine in rainforest areas) and biobanking (as a cheap way of potentially enabling future de-extinction efforts, once de-extinction technology is further advanced).

There are also some bigger, more established EA groups that focus mostly on climate interventions (Giving Green, Founders Pledge, etc); most of these have at least done some preliminary explorations into biodiversity, although there is not really much published work yet. Hannah Ritchie at OurWorldInData has compiled some interesting information about various ecological problems, and her book “Not The End of the World” is great -- maybe the best starting place for someone who wants to get involved to learn more?

There is a very substantial “abundance” movement that (per folks like matt yglesias and ezra klein) is seeking to create a reformed, more pro-growth, technocratic, high-state-capacity democratic party that’s also more moderate and more capable of winning US elections. Coefficient Giving has a big $120 million fund devoted to various abundance-related causes, including zoning reform for accelerating housing construction, a variety of things related to building more clean energy infrastructure, targeted deregulations aimed at accelerating scientific / biomedical progress, etc. https://coefficientgiving.org/research/announcing-our-new-120m-abundance-and-growth-fund/

You can get more of a sense of what the abundance movement is going for by reading “The Argument”, an online magazine recently funded by Coefficient Giving and featuring Kelsey Piper, a widely-respected EA-aligned journalist: https://www.theargumentmag.com/

I think EA the social movement (ie, people on the Forum, etc) tries to keep EA somewhat non-political to avoid being dragged into the morass of everything becoming heated political discourse all the time. But EA the funding ecosystem is significantly more political, and also does a lot of specific lobbying in connection to AI governance, animal welfare, international aid, etc.

Yup, I think there’s a lot of very valuable research / brainstorming / planning that EA (and humanity overall) hasn’t yet done to better map out the space of ways that we could create moral value far greater than anything we’ve seen in history so far.

In EA / rationalist circles, discussion of “flourishing futures” often focuses on “transhumanist goods”, like:

extreme human longevity / immortality through super-advanced medical science

intelligence amplification

reducing suffering, and perhaps creating new kinds of extremely powerful positive emotions

you also hear a bit about AI welfare, and the idea that maybe we could create AI minds experiencing new forms of valuable subjective experience

But there are perhaps a lot of other directions worth exploring:

various sorts of spiritual attainment that might be possible with advanced technology / digital minds / etc

things that are totally out of left field to us because we can’t yet imagine them, like how the value of consciousness would be a bolt from the blue to a planet that only had plants & lower life forms.

instantiating various values, like beauty or cultural sophistication or ecological richness, to extreme degrees

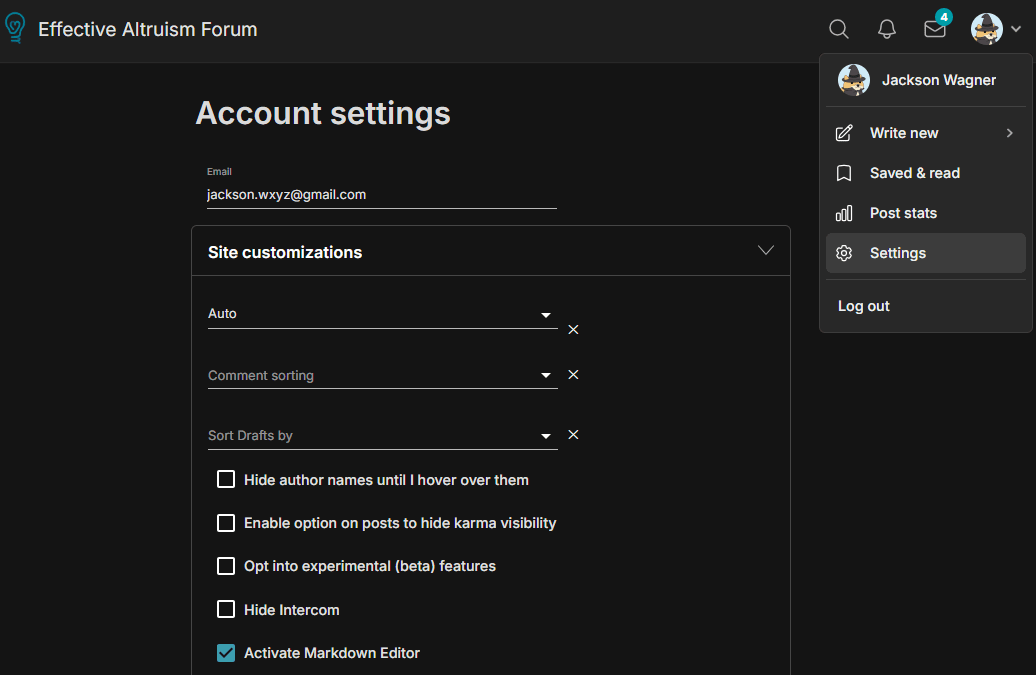

On a more practical note, the Forum does support markdown headings if you enable the “activate markdown editor” feature in your EA Forum profile settings! This would turn all your ##headings into a much more beautiful, readable structure (and it would create a little outline in a sidebar, for people to jump around to different sections).

[content warning: buncha rambly thoughts that might not make much sense]

certainly—see my bit about how my preferred solution would be to run a volunteer army even if that takes ruinously high taxes on the rest of the population. (The United States, to its credit, has indeed run an all-volunteer army ever since the end of the Vietnam War in 1973! But having an immense population makes this relatively easy; smaller countries face sharper trade-offs and tend to orient more towards conscription. See for instance the fact that Russia’s army is less reliant on conscripts than Ukraine’s.)

but also, almost every policy in society has unequal benefits, perhaps helping a small group at the expense of more diffuse harm to a larger group, or vice versa. For example, greater investment in bike lanes and public transit (at the expense of simply building more roads) helps cyclists and public-transit users at the expense of car-drivers. Using taxes to fund a public-school system is basically ripping off people who don’t have children and subsidizing those that do; et cetera. at some point, instead of trying to make sure that every policy comes out even for everyone involved, you have to just kind of throw up your hands, hope that different policies pointing in different directions even out in the end, and rely on some sense of individual willingness to sacrifice for the common good to smooth over the asymmetries.

One could similarly say it’s unfair that residents of Lviv (who are very far from the Ukrainian front line, and would almost certainly remain part of a Ukrainian “rump state” even in the case of dramatic eventual Russian victory) are being asked to make large sacrifices for the defense of faraway eastern Ukraine. (And why are residents of southeastern Poland, so near to Lviv, asked to sacrifice so much less than their neighbors?!)

Perhaps there is some galaxy-brained solution to problems like this, where all of Europe (or all of Ukraine’s allies, globally) could optimally tax themselves some fractional percent in accordance with how near or far they are to Ukraine itself? Or one could be even more idealistic and imagine a unified coalition of allies where everyone decides centrally which wars to support and then contributes resources evenly to that end (such that the armies in eastern Ukraine would have a proportionate number of frenchmen, americans, etc). But in practice nobody has figured out how a scheme like that would possibly work, or why countries would be motivated to adopt it, how it could be credibly fair and neutral and immune to various abuses, etc.

Another weakness to the idea of democratic feedback is simply that it isn’t very powerful—every couple of years you get essentially a binary choice between the leading two coalitions, so you can do a reasonably good job expressing your opinion on whatever is considered the #1 issue of the day, but it’s very hard to express nuanced views on multiple issues through the use of just one vote. So, in this sense, democracy isn’t really a guarantee of representation across many issues, so much as a safety valve that will hopefully fix problems one-by-one as they rise to the position of #1 most-egregiously-wrong-thing in society.

I think that today’s “liberal democracy” is pretty far from some kind of ethically ideal world with optimally representative governance (or optimally pursuing-the-welfare-of-the-population governance, which might be a totally different system)! Whatever is the ideal system of optimal governance, it would probably seem pretty alien to us, perhaps extremely convoluted in parts (like the complicated mechanisms for Venice selecting the Doge) and overly-financialized in certain ways (insofar as it might rely on weird market-like mechanisms to process information).

But conscription doesn’t stand out to me as being especially worse than other policy issues that are similarly unfair in this regard (maybe it’s higher-stakes than those other issues, but it’s similar in kind) -- it’s a little unfair and inelegant and kind of a blunt instrument, just like all of our policies are in this busted world where nations are merely operating with “the worst form of government, except for all the others that have been tried”.

People also talked about “astronomical waste” (per the nick bostrom paper) -- the idea that we should race to colonize the galaxy as quickly as possible because we’re losing literally a couple galaxies every second we delay. (But everyone seemed to agree that this wasn’t practical, racing to colonize the galaxy soonest would have all kinds of bad consequences that would cause the whole thing to backfire, etc)

People since long before EA existed have been concerned about environmentalist causes like preventing species extinctions, based on a kind of emotional proto-longtermist feeling that “extinction is forever” and it isn’t right that humanity, for its short-term benefit, should cause irreversible losses to the natural world. (Similar “extinction is forever” thinking applies to the way that genocide (essentially seeking the extinction of a cultural / religious / racial / etc group) is considered a uniquely terrible horror, worse than just killing an equal number of randomly-selected people.)

A lot of “improving institutional decisionmaking” style interventions make more and more sense as timelines get longer (since the improved institutions and better decisions have more time to snowball into better outcomes).

With your FTX thought experiment, the population being defrauded (mostly rich-world investors) is different from the population being helped (people in poor countries), so defrauding the investors might be worthwhile in a utilitarian sense (the poor people are helped more than the investors are harmed), but it certainly isn’t in the investors’ collective interest to be defrauded!! (Unless you think the investors would ultimately profit more by being defrauded and seeing higher third-world economic growth, than by not being defrauded. But this seems very unlikely & also not what you intended.)

I might be in favor of this thought experiment if the group of people being stolen from was much larger -- eg, the entire US tax base, having their money taken through taxes and redistributed overseas through USAID to programs like PEPFAR… or ideally the entire rich world including europe, japan, middle-eastern petro-states, etc. The point being that it seems more ethical to me to justify coercion using a more natural grouping like the entire world population, such that the argument goes “it’s in the collective benefit of the average human, for richer people to have some of their money transferred to poorer people”. Versus something about “it’s in the collective benefit of all the world’s poor people plus a couple of FTX investors, to take everything the FTX investors own and distribute it among the poor people” seems like a messier standard that’s much more ripe for abuse (since you could always justify taking away anything from practically anyone, by putting them as the sole relatively-well-off member of a gerrymandered group of mostly extremely needy people).

It also seems important that taxes for international aid are taken in a transparent way (according to preexisting laws, passed by a democratic government, that anyone can read) that people at least have some vague ability to give democratic feedback on (ie by voting), rather than being done randomly by FTX’s CEO without even being announced publicly (that he was taking their money) until it was a fait accompli.

Versus I’m saying that various forms of conscription / nationalization / preventing-people-and-capital-from-fleeing (ideally better forms rather than worse forms) seems morally justified for a relatively-natural group (ie all the people living in a country that is being invaded) to enforce, when it is in the selfish collective interest of the people in that group.

huw said “Conscription in particular seems really bad… if it’s a defensive war then defending your country should be self-evidently valuable to enough people that you wouldn’t need it.”

I’m saying that huw is underrating the coordination problem / bank-run effect. Rather than just let individuals freely choose whether to support the war effort (which might lead the country to quickly collapse even if most people would prefer that the country stand and fight), I think that in an ideal situation:

1. people should have freedom of speech to argue for and against different courses of action -- some people saying we should surrender because the costs of fighting would be too high and occupation won’t be so bad, others arguing the opposite. (This often doesn’t happen in practice: places like Ukraine will ban russia-friendly media, governments like the USA in WW2 will run pro-war support-the-troops propaganda and ban opposing messages, etc. I think this is where a lot of the badness of even defensive war comes from: people are too quick to assume that invaders will be infinitely terrible, that surrender is unthinkable, etc.)

2. then people should basically get to occasionally vote on whether to keep fighting the war or not, what liberties to infringe upon versus not, etc (you don’t necessarily need to vote right at the start of the war, since in democracy there’s a preexisting social contract including stuff like “if there’s a war, some of you guys are getting drafted, here’s how it works, by living here as a citizen you accept these terms and conditions”)

IMO, under those conditions (and as long as the burdens / infringements-of-liberty of the war are reasonably equitably shared throughout society, not like people voting “let’s send all the ethnic-minority people to fight while we stay home”), it is ethically justifiable to do quite a lot of temporarily curtailing individual liberties in the name of collective defense.

Back to the finance analogy: sometimes non-fraudulent banks and investment funds do temporarily restrict withdrawals, to prevent bank-runs during a crisis. Similarly, stock exchanges implement “circuit-breakers” that suspend trading, effectively freezing everyone’s money and preventing them from selling their stock, when markets crash very quickly. These methods are certainly coercive, and they don’t always even work well in practice, but I think the reason they’re used is because many people recognize that they do a better-than-the-alternative job of looking out for investors’ collective interests.

This isn’t part of your thought experiment, but in the real world, even if FTX had spent a much higher % of their ill-gotten treasure on altruistic endeavors, the whole thing probably backfired in the end due to reputational damage (ie, the reputational damage to the growth of the EA movement hurt the world much more than the FTX money donated in 2020-2022 helped).

And in general this is true of unethical / illegal / coercive actions: it might seem like a great idea to earn some extra cash on the side by beating up kids for their lunch money, but actually the direct effect of stealing the money will be overridden by the second-order effect of your getting arrested, fined, thrown in jail, etc.

But my impression is that most defensive wars don’t backfire in this way?? Ukraine or Taiwan might be making an ethical or political mistake if they decide to put up more of a fight against an invader, but it’s not like conscripting more people to send to the front is going to paradoxically result in LESS of a fight being put up! Nations seizing resources & conscripting people in order to fight harder generally DOES translate straightforwardly into fighting harder. (Except on the very rare occasion when people get sufficiently fed up that they revolt in favor of a more surrender-minded government, like Russia in 1918 or Paris in 1871.)

To be clear, I am not saying that conscription is always justified or that “it’s solving a coordination problem” is a knockdown argument in all cases. (If I believed this, then I would probably be in favor of some kind of extreme communist-style expropriation and redistribution of economic resources, like declaring that the entire nation is switching to 100% Georgist land value taxes right now, with no phase-in period and no compensating people for their fallen property values. IRL I think this would be wrong, even though I’m a huge fan of more moderate forms of Georgism.) But I think it’s an important argument that might tip the balance in many cases.

Finally, to be clear, I totally agree with you that conscription is a very intense infringement on individual human liberty! I’m just saying that sometimes, if a society is stuck between a rock and a hard place, infringements on liberty can be ethically justifiable IMO. (Ideally, I’d like countries, even under dire circumstances, to try to pay their soldiers at least something reasonably close to the “free-market wage”, ie the salary that would get them to willingly volunteer. If this requires extremely high taxes on the rest of the populace, so be it! If the citizens hate taxes so much, then they can go fight in the war and get paid instead of paying the taxes! And thereby a fair equilibrium can be determined, whereby the burden of warfighting is shared equally between citizens & soldiers. But my guess is that most ordinary people in a real-world scenario would probably vote for traditional conscription, rather than embracing my libertarian burden-sharing scheme, and I think their democratic choice is also worthy of respect even if it’s not morally optimal in my view.)

Also agreed that societies in general seem a little too raring to go when it comes to getting into fights, likely make irrational decisions on this basis, etc. It would be great if everyone in the world could chill out on their hawkishness by like 50% or more… unfortunately there are probably weird adversarial dynamics where you have to act freakishly tough & hawkish in order to create credible deterrence, so it’s not obvious that individual countries should “unilaterally disarm” by doving-out (although over the long arc of history, democracies have generally sort of done this, seemingly to their great benefit). But to the extent anybody can come up with some way to make the whole world marginally less belligerent, that would obviously be a huge win IMO.

But there’s clearly a coordination problem around defense that conscription is a (brute) solution to.

Suppose my country is attacked by a tyrannical warmonger, and to hold off the invaders we need 10% of the population to go fight (and some of them will die!) in miserable trench warfare conditions. The rest need to work on the homefront, keeping the economy running, making munitions etc. Personally I’d rather work on the homefront (or just flee the country, perhaps)! But if everyone does that, nobody will head to the trenches, the country will quickly fold, and the warmonger invader will just roll right on to the next country (which will similarly fold)!

It seems almost like a “run on the bank” dynamic—it might be in everyone’s collective interests to put up a fight, but it’s in everyone’s individual interests to simply flee. So, absent some more elegant galaxy-brained solution (assurance contracts, prediction markets, etc??) maybe the government should defend the collective interests of society by stepping in to prevent people from “running on the bank” by fleeing the country / dodging the draft.
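To make the “run on the bank” structure a bit more concrete, here is a toy sketch of the incentives. All of the numbers (the value of a successful defense, the personal cost of fighting, a population of 100 needing 10 fighters) are made up purely for illustration, and the function names are hypothetical; it’s a cartoon of the coordination problem, not a model of any real war.

```python
# Hypothetical payoff numbers, chosen only for illustration.
VALUE_OF_DEFENSE = 10.0  # what each citizen gains if the country holds
COST_OF_FIGHTING = 4.0   # personal cost of going to the trenches
NEEDED = 10              # fighters required, ie 10% of an assumed population of 100


def payoff(i_fight: bool, others_fighting: int, needed: int = NEEDED) -> float:
    """One citizen's payoff, given their own choice and how many others fight."""
    total_fighters = others_fighting + (1 if i_fight else 0)
    defended = total_fighters >= needed
    gain = VALUE_OF_DEFENSE if defended else 0.0
    cost = COST_OF_FIGHTING if i_fight else 0.0
    return gain - cost


def best_choice(others_fighting: int, needed: int = NEEDED) -> str:
    """The individually better option, holding everyone else's choice fixed."""
    fight = payoff(True, others_fighting, needed)
    stay = payoff(False, others_fighting, needed)
    return "fight" if fight > stay else "stay home"


for others in (0, 9, 50):
    print(others, "others fighting ->", best_choice(others))
```

Under these assumed numbers, staying home is the better individual choice unless you happen to be exactly the pivotal tenth fighter. But if everyone reasons that way, nobody fights and everyone ends up with a payoff of 0, which is worse for each person than the defended outcome (10 for those on the homefront, 6 for those who fought).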

(If the country being invaded is democratic and holds elections during wartime, this decision would even have collective approval from citizens, since they’d regularly vote on whether to continue their defensive war or change to a government more willing to surrender to the invaders.)

Of course there are better and worse forms of conscription: paying soldiers enough that you don’t need conscription is better than paying them only a little (although in practice high pay might strain the finances of an invaded country), which is better than not paying them at all.

The OP seems to be viewing things entirely from the perspective of individual rights and liberties, but not proposing how else we might solve the coordination problem of providing for collective defense.

Eg, by his own logic, OP should surely agree that taxes are theft, any governments funded by such flagrant immoral confiscation are completely illegitimate, and anarcho-capitalism is the only ethically acceptable form of human social relations. Yet I suspect the OP does not believe this, even though the analogy to conscription seems reasonably strong (albeit not exact).

Wow, sounds like a really fun format to have different philosophers all come and pitch their philosophy as the best approach to life! I’d love to take a class like that.

Reposting here a recent comment of mine listing socialist-adjacent ideas that at least I personally am a lot more excited about than socialism itself.

* * *

FYI, if you have not yet heard of “Georgism” (see this series of blog posts on Astral Codex Ten), you might be in for a really fun time! It’s a fascinating idea that aims to reform capitalism by reducing the amount of rent-seeking in the economy, thus making society fairer and more meritocratic (because we are doing a better job of rewarding real work, not just rewarding people who happen to be squatting on valuable assets) while also boosting economic dynamism (by directing investment towards building things and putting land to its most productive use, rather than just bidding up the price of land). Check it out; it might scratch an itch for “something like socialism, but that might actually work”.

A few other weird optimal-governance schemes that have socialist-like egalitarian aims but are actually (or at least partially) validated by our modern understanding of economics:

using prediction markets to inform institutional decision-making (see this entertaining video explainer), and the wider field of wondering if there are any good ways to improve institutions’ decisions

using quadratic funding to optimally* fund public goods without relying on governments or central planning. (*in theory, given certain assumptions, real life is more complicated, etc etc)

Pigouvian taxes (like taxes on cigarettes or carbon emissions). Like Georgist land-value taxes, these attempt to raise funds (for providing public goods either through government services or perhaps quadratic funding) in a way that actually helps the economy (by properly pricing negative externalities) rather than disincentivizing work or investment.

various methods of trying to improve democratic mechanisms to allow people to give more useful, considered input to government processes: approval voting, sortition / citizens’ assemblies, etc

conversation-mapping / consensus-building algorithms like pol.is & Community Notes

not exactly optimal governance, but this animated video explainer lays out GiveDirectly’s RCT-backed vision of how it’s actually pretty plausible that we could solve extreme poverty by just sending a ton of money to the poorest countries for a few years, which would probably actually work because 1. it turns out that most poor countries have a ton of “slack” in their economy (as if they’re in an economic depression all the time), so flooding them with stimulus-style cash mostly boosts employment and activity rather than just causing inflation, and 2. after just a few years, you’ll get enough “capital accumulation” (farmers buying tractors, etc) that we can taper off the payments and the countries won’t fall back into extreme poverty + economic depression

the dream (perhaps best articulated by Dario Amodei in sections 2, 3, and 4 of his essay “Machines of Loving Grace”, but also frequently touched on by Carl Shulman) of future AI assistants that improve the world by actually making people saner and wiser, thereby making societies better able to coordinate and make win-win deals between different groups.

the concern (articulated in its negative form at https://gradual-disempowerment.ai/, and in its positive form at Sam Altman’s essay Moore’s Law for Everything), that some socialist-style ideas (like redistributing control of capital and providing UBI) might have to come back in style in a big way, if AI radically alters humanity’s economic situation such that the process of normal capitalism starts becoming increasingly unaligned from human flourishing.
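For anyone curious about the quadratic funding item in the list above, here is a minimal sketch of the usual textbook formula: a project’s total funding is the square of the sum of the square roots of its individual contributions, and the matching pool covers the gap between that and what donors actually gave. The function names are my own, and this ignores all the real-life complications (limited matching budgets, collusion, sybil attacks, etc).

```python
import math


def quadratic_funding_total(contributions: list[float]) -> float:
    """Total funding under the basic quadratic funding formula."""
    return sum(math.sqrt(c) for c in contributions) ** 2


def matching_subsidy(contributions: list[float]) -> float:
    """How much the matching pool adds on top of what donors gave directly."""
    return quadratic_funding_total(contributions) - sum(contributions)


many_small_donors = [1.0] * 100  # 100 people give $1 each
one_big_donor = [100.0]          # 1 person gives $100

print(matching_subsidy(many_small_donors))
print(matching_subsidy(one_big_donor))
```

The point of the design is that broad support matters more than raw dollars: both projects raised $100 directly, but in this idealized version the one with 100 small donors gets a large match while the single large donation gets none.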

Fooming Shoggoths! (And, more generally, much of the secular-solstice music—there’s already a database full of this somewhere.)