The Front-Running Catastrophe: Why P(Stable Totalitarianism) might beat P(Doom) to the Finish Line

| This is a Draft Amnesty Week draft. It may not be polished, up to my usual standards, fully thought through, or fully fact-checked. |

Commenting and feedback guidelines: This is my first blogpost, and my goal is to break the likelihood of Stable Totalitarianism down into fundamental sub-probabilities. I hope these questions will be useful for others when considering P(Stable Totalitarianism).

I was recently inspired by a post by Bentham's Bulldog called Against “If Anyone Builds It Everyone Dies”. Where his post focused on X-risk from catastrophic misalignment specifically, my central claim is that stable totalitarianism might be strictly more likely than catastrophic misalignment, given some assumptions. In other words, P(Stable Totalitarianism), or P(T) for short, might exceed P(Doom).

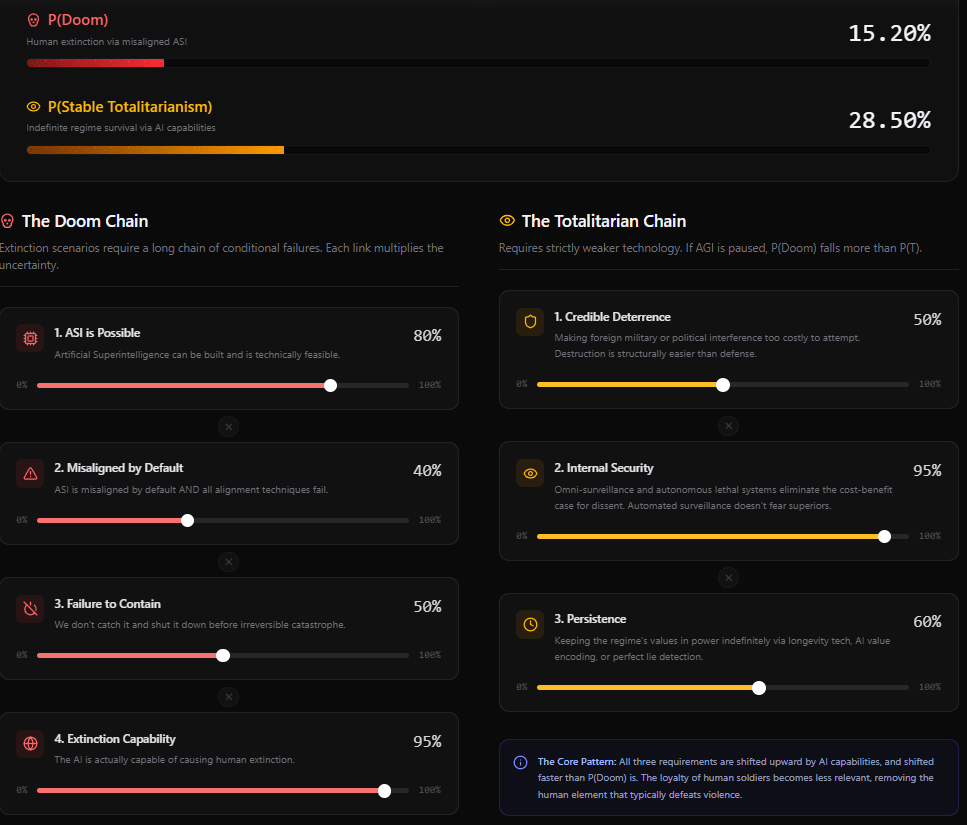

His post breaks AI doom discourse into a chain of conditional probabilities:

P(superintelligence is possible)

P(ASI is misaligned by default AND all alignment techniques fail)

P(we don’t catch it and shut it down before irreversible catastrophe)

P(AI is capable of causing human extinction)

Each step is conditional on the last. You only end up in extinction scenarios in worlds where ASI is both achievable and misaligned. The chain is long, and each link multiplies the uncertainty.
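As a minimal sketch of how such a chain multiplies out, the snippet below treats each link as a conditional probability and takes the product. The numbers are hypothetical placeholders chosen only to make the arithmetic visible; they are not estimates from this post or from the post it responds to.

```python
from math import prod

# Hypothetical placeholder values, NOT estimates from either post.
# Each value is read as conditional on all the links above it.
doom_chain = {
    "superintelligence is possible": 0.8,
    "misaligned by default and all alignment techniques fail": 0.5,
    "not caught and shut down before irreversible catastrophe": 0.5,
    "capable of causing human extinction": 0.5,
}


def chain_probability(links):
    """Multiply the conditional probabilities along the chain."""
    return prod(links.values())


p_doom = chain_probability(doom_chain)
print(f"P(Doom) under these placeholder inputs: {p_doom:.3f}")
```

Because every link is at most 1, adding a link can only keep the product the same or shrink it, which is the sense in which a longer chain compounds uncertainty.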

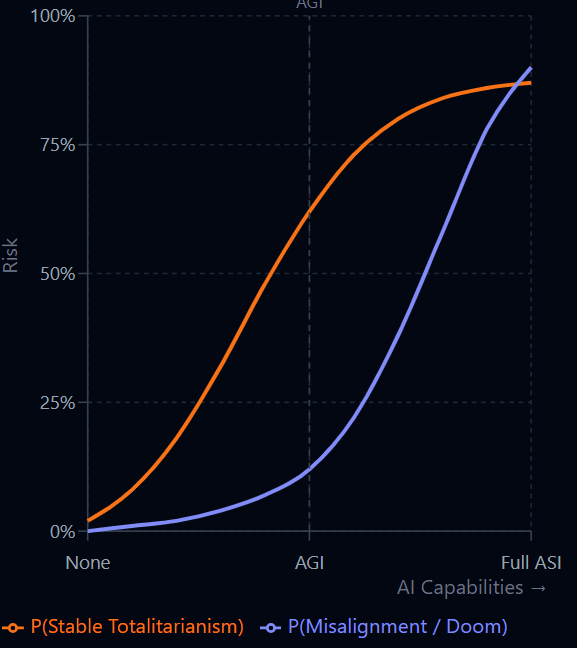

In contrast, P(T) is like a series of dice rolls, one for each country. (I won’t dive into AI-enabled power concentration, as this is already covered by 80,000 Hours.) The technologies that could tip the scales are already within reach, and they require strictly weaker AI in expectation. This means that if P(we build AGI) falls due to increases in P(intentional pausing) or P(technical barriers), your P(Doom) should fall more than your P(T).
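The dice-roll framing can be sketched as the chance that at least one roll comes up "stable totalitarianism". This is a rough illustration that assumes the rolls are independent, which is a simplification (regimes can help or hinder each other), and the per-country probability and the count of 15 regimes below are hypothetical inputs, not estimates.

```python
def p_at_least_one(per_country_probs):
    """P(at least one country locks in), assuming independent rolls."""
    p_none = 1.0
    for p in per_country_probs:
        p_none *= 1.0 - p  # probability that this country does NOT lock in
    return 1.0 - p_none


# Hypothetical inputs: 15 regimes, each with an assumed 2% chance.
per_country = [0.02] * 15
print(f"P(at least one): {p_at_least_one(per_country):.3f}")
```

Under independence, the combined probability is always at least as large as the largest single-country probability, so more candidate regimes can only push the total up.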

Imagine you are the dictator of one of Earth’s roughly 15 countries that can be described as totalitarian or totalitarian-adjacent. What would it take to extend your reign indefinitely? I argue that all you need are technologies that don’t seem too unlikely within a few years.

To explain why, let’s start with what the threats to a totalitarian regime are:

1: External military defeat – Nazi Germany, Mussolini’s Italy, Imperial Japan, the Khmer Rouge.

2: Other external interventions – the kinds of actions taken against Maduro in Venezuela and Hezbollah in Lebanon, and the targeted strikes in Iran.

3: Internal resistance — the Arab Spring, the fall of the Shah, the crowds that brought down the Berlin Wall and the Ceaușescus.

4: Elite fracturing and palace coups — Beria arrested, Khrushchev ousted, Mugabe removed by his own generals.

5: Succession failures — the power vacuums after Stalin, Tito, and Franco.

Avoid these five, and your regime survival odds seem solid. There may still be black swans, but even dictatorships are learning institutions. China’s most important lesson from the Soviet collapse, for instance, was to liberalize economically but not politically.

From these five threats, we can derive three requirements for stable totalitarianism.

Requirement 1: Credible Deterrence Against External Intervention

A regime needs to make foreign military or political interference too costly to attempt. North Korea shows that nuclear deterrence is the gold standard, but even “weak” AI-enabled capabilities might lower (or raise) the bar for a small state to hold larger ones at bay.

The crucial point here is that destruction is structurally easier than defense. A regime doesn’t need parity; it needs to raise the cost of intervention above the threshold any external power is willing to pay.

It’s also worth noting that totalitarian states have strong mutual interests. If one regime needs a technology it cannot develop domestically, others may have an incentive to supply it. The totalitarian world is not a collection of isolated actors.

Requirement 2: Internal Security / Eliminating the Cost-Benefit Case for Dissent

This is where I think the picture becomes most stark.

Omni-surveillance (technologically mature, continuous monitoring of every person) plausibly reduces the probability of successful rebellion, coup, or assassination by 99%. What closes the remaining gap is autonomous lethal systems: slaughterbots, armed drones capable of reaching any person anywhere, operating without a human soldier who might hesitate or refuse.

There are also more speculative complementary technologies inspired by dystopian fiction: psychiatric medication, neurological lie detection, brain scanning, and so on. If anyone develops any of these technologies, for which there are strong market incentives, every totalitarian state that acquires it is effectively locked in. On the other hand, history reminds us that the bar for suppression is lower than it looks: the post-Stalin Soviet leadership kept an entire nation in compliance by imprisoning a few thousand dissidents per year.

Requirement 3: Persistence / Keeping the Regime’s Values in Power Indefinitely

Even a perfectly secured regime eventually faces the problem of succession. Three plausible solutions are on the horizon:

- Longevity and anti-aging technology extends the ruler’s lifespan indefinitely.

- AI encoding of values allows a sufficiently powerful AI to perpetuate the regime’s goals after the founder is gone.

- Lie detection or mind-reading could make it possible for a ruler to verify, with high confidence, that a chosen successor shares their values.

Each of these seems unlikely in isolation, but each is substantially more probable conditional on strong AI, and none strictly depends on it. It’s fairly plausible that we solve biological aging while stopping short of ASI, whether because of a moratorium or fundamental technical barriers.

The Core Pattern

AI capabilities make all three requirements easier to meet, and they push P(T) up sooner than they push P(Doom) up. That asymmetry is the central observation.

Figure 1: This illustrative graph (not derived from data) encodes the core argument visually: P(T) rises steeply early, well before AGI.

Figure 2: Example of a probability calculator where each risk is broken down into its component probabilities (which themselves can be broken down further). In the doom chain, each step is contingent on the ones above.

There are two key insights driving this:

First: The loyalty of human soldiers, bureaucrats, and intelligence officers becomes progressively less relevant as AI systems grow more capable and autonomous. This also dissolves one of the classic vulnerabilities of authoritarian information systems: the reluctance of subordinates to report bad news upward. Automated surveillance doesn’t fear its superiors.

Second: P(T) does not fall the way P(Doom) does in worlds where we stop short of ASI.
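The second point can be made concrete with the law of total probability. In this rough sketch, P(Doom) only has mass in worlds that reach ASI, while P(T) also has mass in worlds that stop short of it. All conditional probabilities are hypothetical placeholders, and the function and constant names are made up for illustration.

```python
def p_doom(p_asi, doom_given_asi):
    """P(Doom) is assumed to be zero in worlds without ASI."""
    return p_asi * doom_given_asi


def p_totalitarian(p_asi, t_given_asi, t_given_no_asi):
    """Law of total probability over ASI and no-ASI worlds."""
    return p_asi * t_given_asi + (1.0 - p_asi) * t_given_no_asi


# Hypothetical placeholder inputs, not estimates.
DOOM_GIVEN_ASI = 0.2
T_GIVEN_ASI = 0.3
T_GIVEN_NO_ASI = 0.2

for p_asi in (0.8, 0.4):
    d = p_doom(p_asi, DOOM_GIVEN_ASI)
    t = p_totalitarian(p_asi, T_GIVEN_ASI, T_GIVEN_NO_ASI)
    print(f"P(ASI)={p_asi:.1f}  P(Doom)={d:.2f}  P(T)={t:.2f}")
```

With these inputs, halving P(ASI) halves P(Doom) but trims P(T) only slightly. The result is sensitive to the assumed `T_GIVEN_NO_ASI`: if it were 0, both would fall in proportion, and if it exceeded `T_GIVEN_ASI`, P(T) would actually rise as P(ASI) falls.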

The Geometry of the Problem

P(T) is highly variable by country, by alliance structure, and by the dynamics between blocs. Russia and China are the obvious cases to watch. But stable totalitarianism has a troubling mathematical property: it functions like a black hole. Once a country crosses into it, escape probability approaches zero. If any country faces even a 1% chance per century of falling into stable totalitarianism, and a 0% chance of leaving it, then the long-run expected outcome is not in question.
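The black-hole property is that of an absorbing state. A minimal sketch, taking the 1% per century figure from the paragraph above and assuming that the chance stays constant and independent from one century to the next (a strong simplification):

```python
def p_absorbed(p_per_century, centuries):
    """Chance of having entered the absorbing state within n centuries,
    assuming a constant, independent per-century probability and no exit."""
    return 1.0 - (1.0 - p_per_century) ** centuries


for n in (1, 10, 100, 1000):
    print(f"after {n:>4} centuries: {p_absorbed(0.01, n):.3f}")
```

With no exit, the cumulative probability can only grow with the horizon and tends to 1, which is what "not in question" means here. The speed of convergence depends entirely on the assumed per-century rate.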

The more grounded concern, however, is a world in which AI development is successfully paused or constrained, but not before producing systems capable of helping a government, a company, an extremist group, or even an individual seize and consolidate power domestically. In such a world, “AI powerful enough to overthrow a government, not powerful enough to end civilization” becomes the defining threat. I highly recommend the 80,000 Hours episode on this.

Arguments Against P(T)

The strongest counterargument is straightforward: if the democratic world continues to grow economically and technologically, it will maintain a substantial military and technological edge over totalitarian states. Their structural inefficiencies — suppressed information, stifled innovation, misallocated talent — compound over time.

But this counterargument has an uncomfortable implication: it means rational totalitarian regimes face strong incentives to pursue global control before that gap becomes decisive. It also depends critically on the free world’s AI development remaining superior. If totalitarian states achieve AI parity or better, the economic efficiency argument weakens considerably.

I’m highly uncertain about the technical feasibility of deterrence, the tractability of persistence, and the future dynamics between totalitarian states. I’d particularly welcome pushback on whether there are dependencies I’ve collapsed that should be separated out.

Thank you for posting this. I’ve also been thinking about the probability of stable totalitarianism recently, and your post gave me some new perspectives, especially the ideas that “Lie detection or mind-reading technique may increase totalitarianism” and “P(T) is like a series of dice rolls for each country”.

Thanks! I think there’s a lot of thinking that needs to be done wrt all the different contingencies conditioned on the “best case scenario of pausing / not reaching ASI”