I have a master’s in Information Science. Before switching to the master’s, I was a Ph.D. student in Planetary Science where I used optimization models to physically characterize asteroids (including potentially hazardous ones).

Historically, my most time-intensive EA involvement has been organizing Tucson Effective Altruism, the EA university group at the University of Arizona. If you are a movement builder, let’s get in touch!

I am broadly interested in economic growth, abundant futures, and earning-to-give for animal welfare. Always happy to chat about anything EA!

akash 🔸

2026, the year vegan baking was solved!

On a more serious note:

“At $24 for the equivalent of 45 egg whites ($0.53 each) it’s more expensive than buying conventional ($0.21 each) or organic ($0.33) egg whites, but not massively so.”

I would be interested in an econ person’s take on how they expect the price to change over time. Intuitively, $0.53 feels like a promising number for a newly launched product. If demand increases, would the price plummet and make it the de facto choice for many products that contain egg whites?

I hope they do a good job of marketing it; an increasing number of people are negatively primed towards cultivated products.

Ok, but I unironically think that a 2D debate slider could be useful!

So, who is this?

(Not a solution, but a general observation about people who engage in bashing EA.)

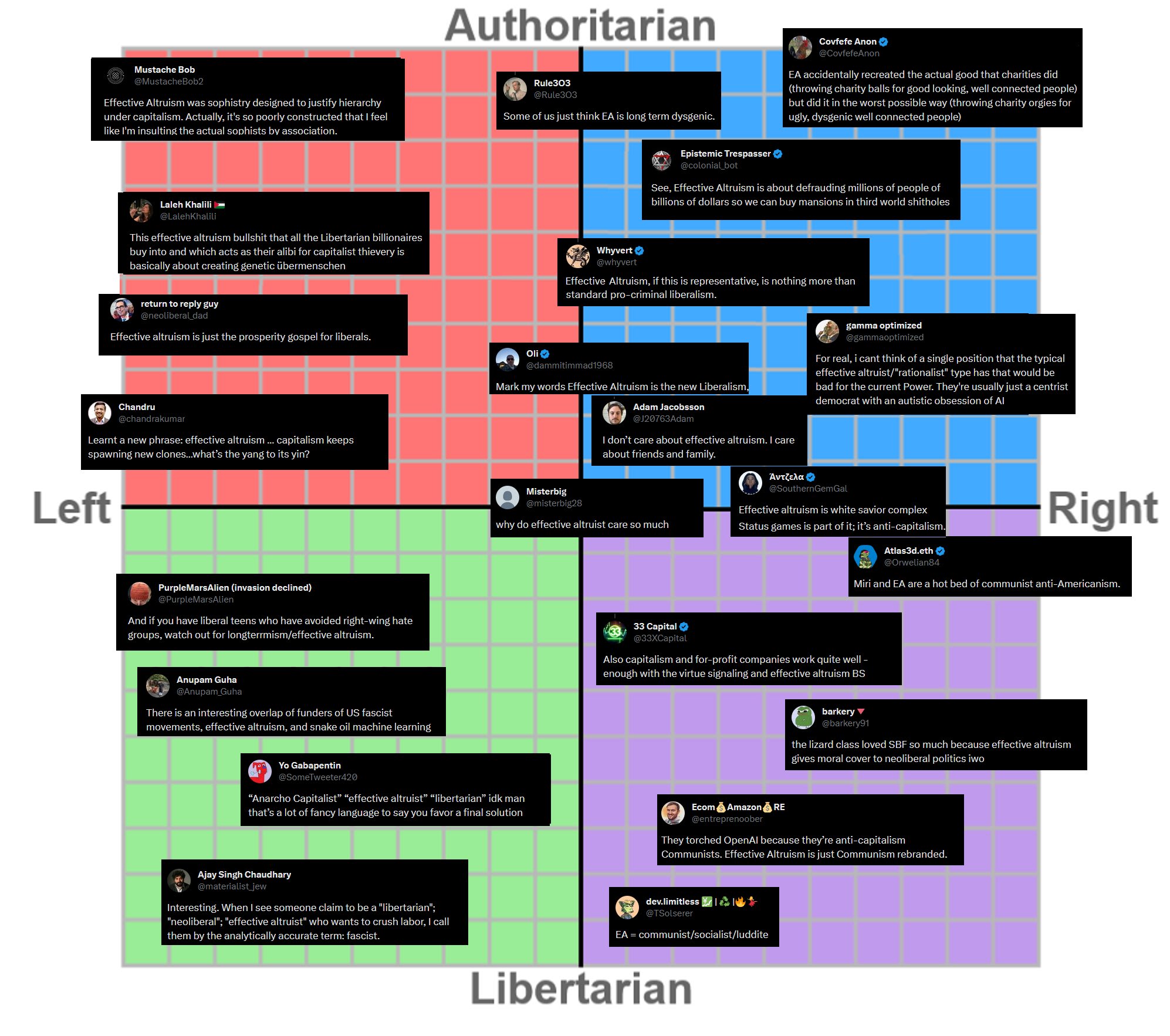

The “dot connectors” will always connect the dots, infer or invent nefarious motivations, and try to bucket you however they like. The problem is that you can’t neatly map EAs onto the political spectrum. Yes, there are dominant trends, but the variance in views is high enough that commentators genuinely have no clue where EAs belong. This makes sense: most major movements in history have been political ones, so when assessing EA, most people pull out their internal political philosophy detector, and you end up with a mess like the chart above!

But EA is a moral philosophy movement, and the chain of thinking is genuinely different. Instead of thinking about how to organize society and labor, EAs unanimously agree on beneficentrism and deal with questions like, “What morally matters? To what degree? Which interventions are most effective? How do you even assess what is most effective?” When you organize a movement around this set of questions, you end up with:

Some people who want to automate software engineering, some who want to pause it entirely, and others who think we should defensively accelerate progress

At least two frontier AI labs: let’s not forget OpenAI received $30 million in philanthropic money during its inception!

Some EAs who think that AI will be a big deal for {their cause area}, others who are skeptical of the whole AI bundle

Some EAs passionately dislike AI writing, some are fine with methodical use of AI in writing, and some are even more liberal about it

One particular EA who is the loudest voice combatting the data center water usage myth

(At least) one person from the EA-sphere who has large holdings in AI infrastructure

And conservative AI Safetyists like you and liberal long-timeline accelerationists like me

I don’t know what the best solution for combatting EA bashing is, but spreading the idea that EA is more politically and intellectually diverse than people think should help.

… other brain regions (accessory lobes) have shown to compensate these integrative processes in this taxon, which has not yet been demonstrated for Penaeidae. It’s thus still a low rating for lack of data, not for proof of failing this criterion.

This reminds me of two things:

I am forgetting the precise terms here, but from the 1800s through most of the 1900s, researchers thought that birds weren’t intelligent because they were essentially comparing human and avian brains 1:1. Later, others found that while birds lacked that specific component (the neocortex?), some other regions of their brain were functionally similar, and that birds were indeed smart rather than instinct-driven biological machines.

I recall watching Dustin Crummett’s presentation on insect sentience a while back. When talking about the lack of evidence of sentience in certain insects, he emphasized that besides black soldier flies and honeybees, most insects aren’t that well-studied.

I am a little surprised that evidence for integrative brain regions is very high for all but the Penaeidae. Do we know to what extent this is because direct/proxy studies on Penaeidae sentience haven’t been performed, versus studies being performed but showing low evidence of sentience?

And answering some of your questions:

Which criterions do you think are the most convincing to update your confidence?

Criteria 2 ≈ 3 > 4 ≈ 5

Do you have other types of evidence that better influence your confidence?

Not evidence, but a heuristic I use when thinking about sentience is that any organism that performs reinforcement learning, i.e., making on-the-fly decisions informed by environmental stimuli, is most likely sentient.

I lean towards a yes, but I am uncertain because I don’t know how the stimuli are fed in, and I would imagine that the simulated brain, unlike an embodied fruit fly, isn’t perpetually processing information and taking actions. If the latter is true and if it replaces the need for … processing … billions of live fruit flies in labs worldwide, that seems like a huge animal welfare win to me.

EDIT: Eon, the company behind this development, published a blog post explaining their research, and after reading it, I am much less confident in my lean. This doesn’t seem to be a whole fly brain emulation / a full copy:

First, the Shiu et al. model is a simplified neuron model. It uses leaky integrate-and-fire dynamics rather than morphologically detailed multicompartment neurons, and it relies on inferred neurotransmitter identity and simplified synapse models. This means that dendritic nonlinearities, biophysical channel diversity, and many specific dynamics are not represented. This is enough to recover some sensorimotor transformations, but clearly does not capture the full range of neural activity. Further, internal state, plasticity, learning, hormonal changes are largely missing. Biological flies do not respond to the same sensory input the same way in all contexts. Hunger, satiety, arousal, mating state, egg-laying state, recent sensory history, neuromodulators, and learning all reshape sensorimotor transformations.

Is this primarily meant for people who are already veg*n/sympathetic or a wider audience?

If the latter, it is worth reconsidering whether the word “vegan” should be used at all, as a number of studies show that the public is negatively biased towards the term and that alternative terms are received more positively (see this, for instance).

I just emailed him, close to zero chance he will see it but if he does 🤞

but its very possible that many fish that we kill after catching (yes with a bad death) have net positive lives.

Doesn’t this imply that even a hypothetical painless death of a fish is really, really bad because you’re taking away all the good moments trillions of fish could have experienced? You could argue that the utility experienced by those who consume the fish is higher, but it probably doesn’t compare to the utility that unimaginably large number of creatures could have experienced had they continued their natural lives.

(I agree with the more important point that non-adversarial messaging matters and these sorts of comparisons are practically useless.)

Some of the proposed interventions neatly align with practices followed by cities that have “dark sky laws.” Uncertain, but maybe there is a feed two birds with one scone solution here.

“Problem is that rarely in the world of public engagement, media and comms does everything go right.”

“But if you’re going to go ahead, be VERY sure you’re doing it right.”

Doesn’t statement 1 imply that statement 2 is an impossibly high standard to reach?

There are clearly mistakes here which could have been avoided, but it is really hard to predict the counterfactual; it is possible that even if those steps were taken, the level of infighting or the amount of clickbait journalism would have been about the same. Maybe not, but who knows!

I was annoyed by all the clickbait-y articles, and my fellow EAs are far too deferential; being against diet change is currently the trendy view within the movement. At the same time, I think it would be healthy for the broader animal movement to build a stronger culture of cooperation, and that involves a higher degree of charitability and a lower bar for what’s acceptable when trying something new.

Posting this here for a wider reach: I’m looking for roommates in SF! Interested in leases that begin in January.

Right now, I know three others who are interested, and we have a low-key Signal group chat. If you are interested, direct message me here or on one of my linked socials, and we will hop on a 15-minute call to see if we would be a good match!

Hank Green should attend an EAG next year.

+1 to this, I would be disappointed if EAG merch was super generic. The sweatshirt from EAG Bay (which I do not have) had a fantastic design, and I liked the birds on the EAG NYC t-shirt.

But I am also someone who has a bright teal colored backpack with pink straps and my laptop has 50,000 stickers so …

By my count, barring Trajan House, it now appears that EA has officially been annexed from Oxford.

Forethought, AI Gov at Oxford Martin, and EA Oxford operate out of Oxford. I am sure Uehiro has EA/adjacent philosophers? GPI’s closure is a shame, of course.

It’s OK to eat honey

I am quite uncertain because I am unsure to what extent a consumption boycott affects production; however, I lean slightly towards disagree because boycotting animal-based foods is important for:

Establishing pro-animal cultural norms

Incentivizing plant-based products (like Honee) that already face an uphill climb towards mass adoption

Sounds like patient philanthropy? See @trammell’s 80K episode from four years ago.

Pete Buttigieg just published a short blogpost called We Are Still Underreacting on AI.

He seems to believe that AI will cause major changes in the next 3-5 years and thinks that AI poses “terrifying challenges,” which makes me wonder if he is privately sympathetic to the transformative AI hypothesis. If so, he might also take catastrophic risks from AI quite seriously. While he doesn’t say this explicitly, at the end of his piece he diplomatically affirms:

The coming policy battles won’t be over whether to be “for” or “against” AI. It is developing swiftly no matter what. What we can do is take steps to ensure that it leads to more abundant prosperity and safety rather than deprivation and danger. Whether it does one or the other is, at its core, not a technology problem but a social and political problem. And that means it’s up to us.

Even if Buttigieg doesn’t win, he will probably end up in a presidential cabinet and could be quite influential on AI policy. The international response to AI depends a lot on which side wins the 2028 election.

Some thoughts:

YouTubers are rarely impartial and thorough, so it shouldn’t be surprising that a person with finite time to investigate a movement they likely already felt negatively about would do a so-so job and not go beyond a Reddit-level understanding of EA and EAs.

Adding on to your observation, the YouTuber’s conclusion doesn’t capture how the vast majority of EAs are into specific causes and hold varying levels of certainty about their beliefs. It doesn’t capture the friendship and antagonism between rats and EA, between EA and Silicon Valley people, between EAs and the left, and between EAs and the right …

YouTubers also need to YouTube: the algorithm rewards inflammatory language, so “xyz is literally the worst thing in the world” will always do better than “I have mixed feelings about xyz.”

I don’t know if it is productive to engage with every video of this nature, but it definitely made me think about EA’s public “persona.” Barring the narrow cluster of possible futures where the world goes exactly how a few dozen East Bay rationalists think it will, I feel cause-neutral community building and comms still have value.

Definitely not! Reminder that a minority have heard about EA and have some basic understanding of the movement (and the basic EA pitch is well-received): https://forum.effectivealtruism.org/posts/CwKiAt54aJjcqoQDh/are-1-in-5-americans-familiar-with-ea

There are 8+ billion humans, and only 10-15K EAs. We are simply not reaching out to the millions of proto-EAs out there! We also don’t have the capacity or institutional intent to accommodate that many people.